UPDATE – CrashPlan For Home (green branding) was retired by Code 42 Software on 22/08/2017. See migration notes below to find out how to transfer to CrashPlan for Small Business on Synology at the special discounted rate.

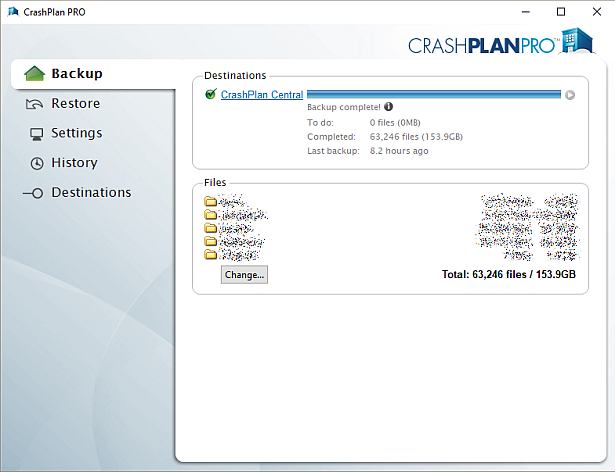

CrashPlan is a popular online backup solution which supports continuous syncing. With it, your NAS can become even more resilient, particularly against the threat of ransomware.

There are now only two product versions:

- Small Business: CrashPlan PRO (blue branding). Unlimited cloud backup subscription, $10 per device per month. Reporting via Admin Console. No peer-to-peer backups.

- Enterprise: CrashPlan PROe (black branding). Cloud backup subscription typically billed by storage usage, also available from third parties.

The instructions and notes on this page apply to both versions of the Synology package.

CrashPlan is a Java application which can be difficult to install on a NAS. Way back in January 2012 I decided to turn it into a Synology package, since I had already created several others. It has been through many versions since that time, as the changelog below shows. Although it used to work on Synology products with ARM and PowerPC CPUs, it unfortunately became Intel-only in October 2016 when Code 42 Software added a dependency on some proprietary libraries.

Licence compliance is another challenge – Code 42’s EULA prohibits redistribution. I had to make the Synology package use the regular CrashPlan for Linux download (after the end user agrees to the Code 42 EULA). I then had to write my own script to extract this archive and mimic the Code 42 installer behaviour, but without the interactive prompts of the original.

Synology Package Installation

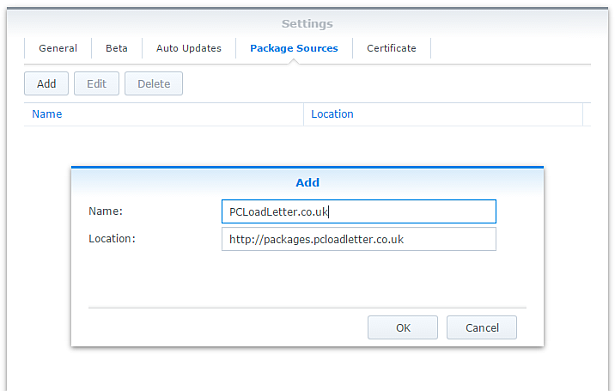

- In Synology DSM’s Package Center, click Settings and add my package repository:

- The repository will push its certificate automatically to the NAS, which is used to validate package integrity. Set the Trust Level to Synology Inc. and trusted publishers:

- Now browse the Community section in Package Center to install CrashPlan:

The repository only displays packages which are compatible with your specific model of NAS. If you don’t see CrashPlan in the list, then either your NAS model or your DSM version is not supported at this time. DSM 5.0 is the minimum supported version for this package, and an Intel CPU is required.

- Since CrashPlan is a Java application, it needs a Java Runtime Environment (JRE) to function. I recommend that you choose to have the package install a dedicated Java 8 runtime. For licensing reasons I cannot include Java with this package, so you will need to agree to the licence terms and download it yourself from Oracle’s website. The package expects to find this .tar.gz file in a shared folder called ‘public’. If you go ahead and try to install the package without it, the error message will indicate precisely which Java file you need for your system type, and it will provide a TinyURL link to the appropriate Oracle download page.

- To install CrashPlan PRO you will first need to log into the Admin Console and download the Linux App from the App Download section and also place this in the ‘public’ shared folder on your NAS.

- If you have a multi-bay NAS, use the Shared Folder control panel to create the shared folder called public (it must be all lower case). On single bay models this is created by default. Assign it with Read/Write privileges for everyone.

- If you have trouble getting the Java or CrashPlan PRO app files recognised by this package, try downloading them with Firefox. It seems to be the only web browser that doesn’t try to uncompress the files, or rename them without warning. I also suggest that you leave the Java file and the public folder present once you have installed the package, so that you won’t need to fetch this again to install future updates to the CrashPlan package.

- CrashPlan is installed in headless mode – backup engine only. It is configured by a desktop client, but operates independently of it.

- The first time you start the CrashPlan package you will need to stop it and restart it before you can connect the client. This is because a config file that is only created on first run needs to be edited by one of my scripts. The engine is then configured to listen on all interfaces on the default port 4243.
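If Package Center still refuses to recognise the Java file, a quick check over SSH can tell you whether the download survived intact, since browsers tend to unpack or rename these archives. This is a minimal sketch rather than part of the package: check_java_bundle is my own hypothetical helper, and the path in the example comment is illustrative, so substitute the exact file name quoted in the error message.

```shell
#!/bin/sh
# Sketch only: report whether a downloaded Java bundle is still a gzip archive.
# check_java_bundle is a hypothetical helper, not part of the CrashPlan package.

check_java_bundle() {
    bundle="$1"
    if [ ! -f "$bundle" ]; then
        echo "missing: $bundle"
        return 2
    fi
    # gzip -t verifies the compressed data without writing anything out
    if gzip -t "$bundle" 2>/dev/null; then
        echo "ok: $bundle is an intact gzip archive"
        return 0
    fi
    echo "suspect: $bundle is not gzip data (the browser may have unpacked or renamed it)"
    return 1
}

# Example (hypothetical path, use the exact name from the error message):
# check_java_bundle /volume1/public/ejdk-8u151-linux-i586.tar.gz
```

A return code of 0 means the file is still compressed as Oracle shipped it; 1 suggests re-downloading with Firefox.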

CrashPlan Client Installation

- Once the CrashPlan engine is running on the NAS, you can manage it by installing CrashPlan on another computer and configuring it to connect to the CrashPlan engine on the NAS.

- Make sure that you install the version of the CrashPlan client that matches the version running on the NAS. If the NAS version gets upgraded later, you will need to update your client computer too.

- The Linux CrashPlan PRO client must be downloaded from the Admin Console and placed in the ‘public’ folder on your NAS in order to successfully install the Synology package.

- By default the client is configured to connect to the CrashPlan engine running on the local computer. To point it at the NAS instead, start by running this command on your NAS from an SSH session:

echo `cat /var/lib/crashplan/.ui_info`

Note those are backticks not quotes. This will give you a port number (4243), followed by an authentication token, followed by the IP binding (0.0.0.0 means the server is listening for connections on all interfaces) e.g.:

4243,9ac9b642-ba26-4578-b705-124c6efc920b,0.0.0.0

port,--------------token-----------------,binding

Copy this token value and use it to replace the token in the equivalent config file on the computer that you would like to run the CrashPlan client on, located here:

C:\ProgramData\CrashPlan\.ui_info (Windows)

“/Library/Application Support/CrashPlan/.ui_info” (Mac OS X installed for all users)

“~/Library/Application Support/CrashPlan/.ui_info” (Mac OS X installed for single user)

/var/lib/crashplan/.ui_info (Linux)

You will not be able to connect the client unless the client token matches the NAS token. On the client you also need to amend the IP address value after the token to match the Synology NAS IP address.

So, using the example above, your computer’s CrashPlan client config file would be edited to:

4243,9ac9b642-ba26-4578-b705-124c6efc920b,192.168.1.100

This assumes that the Synology NAS has the IP address 192.168.1.100.
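Rather than editing the token by hand, you could assemble the client's line with a short shell function. This is a hypothetical helper (client_ui_info is not part of CrashPlan or of the package) that keeps the port and token fields from the NAS file and swaps the binding for the NAS address:

```shell
#!/bin/sh
# Sketch: build the client-side .ui_info line from the NAS copy.
# Keeps the port (field 1) and token (field 2), replaces the binding (field 3).

client_ui_info() {
    nas_line="$1"   # contents of /var/lib/crashplan/.ui_info on the NAS
    nas_ip="$2"     # the NAS's LAN address
    port=$(printf '%s' "$nas_line" | cut -d, -f1)
    token=$(printf '%s' "$nas_line" | cut -d, -f2)
    printf '%s,%s,%s\n' "$port" "$token" "$nas_ip"
}

client_ui_info "4243,9ac9b642-ba26-4578-b705-124c6efc920b,0.0.0.0" 192.168.1.100
# prints 4243,9ac9b642-ba26-4578-b705-124c6efc920b,192.168.1.100
```

Paste the printed line into the client's .ui_info file in place of its existing contents.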

If it still won’t connect, check that the ServicePort value is set to 4243 in the following files:

C:\ProgramData\CrashPlan\conf\ui_(username).properties (Windows)

“/Library/Application Support/CrashPlan/ui.properties” (Mac OS X installed for all users)

“~/Library/Application Support/CrashPlan/ui.properties” (Mac OS X installed for single user)

/usr/local/crashplan/conf (Linux)

/var/lib/crashplan/.ui_info (Synology) – this value does change automatically if there’s a port conflict, e.g. if you started two versions of the package concurrently (CrashPlan and CrashPlan PRO).

- Because of the nightmarish complexity of recent product changes, Code42 has now published a support article with more detail on running headless systems, including config file locations on all supported operating systems for ‘all users’ versus single-user installs.

- You should disable the CrashPlan service on your computer if you intend only to use the client. In Windows, open the Services section in Computer Management and stop the CrashPlan Backup Service. In the service Properties set the Startup Type to Manual. You can also disable the CrashPlan System Tray notification application by removing it from Task Manager > More Details > Start-up Tab (Windows 8/Windows 10) or the All Users Startup Start Menu folder (Windows 7).

To accomplish the same on Mac OS X, run the following commands one by one:

sudo launchctl unload /Library/LaunchDaemons/com.crashplan.engine.plist

sudo mv /Library/LaunchDaemons/com.crashplan.engine.plist /Library/LaunchDaemons/com.crashplan.engine.plist.bak

The CrashPlan menu bar application can be disabled in System Preferences > Users & Groups > Current User > Login Items.
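On a Mac or Linux client, the ServicePort check mentioned earlier can be scripted. This sketch assumes the setting appears as a line of the form servicePort=4243 (matched case-insensitively, with commented-out lines ignored); service_port and check_service_port are my own hypothetical names, and the path in the example comment is the Mac all-users location listed above.

```shell
#!/bin/sh
# Sketch: read the servicePort setting from a client ui.properties file.
# Assumes lines of the form servicePort=4243; the match is case-insensitive
# and lines starting with # are ignored. Function names are hypothetical.

service_port() {
    grep -i '^[[:space:]]*serviceport[[:space:]]*=' "$1" \
        | head -n 1 | cut -d= -f2 | tr -d '[:space:]'
}

check_service_port() {
    file="$1"
    expected="${2:-4243}"   # default engine port from the NAS .ui_info
    actual=$(service_port "$file")
    if [ -z "$actual" ]; then
        echo "no uncommented servicePort line in $file"
        return 1
    fi
    if [ "$actual" = "$expected" ]; then
        echo "ok: servicePort=$actual"
        return 0
    fi
    echo "servicePort is $actual, expected $expected"
    return 1
}

# Example (Mac OS X, installed for all users):
# check_service_port "/Library/Application Support/CrashPlan/ui.properties"
```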

Migration from CrashPlan For Home to CrashPlan For Small Business (CrashPlan PRO)

- Leave the regular green branded CrashPlan 4.8.3 Synology package installed.

- Go through the online migration using the link in the email notification you received from Code 42 on 22/08/2017. This seems to trigger the CrashPlan client to begin an update to 4.9, which will fail. It will also migrate your account onto a CrashPlan PRO server. The web page is likely to stall on the Migrating step, but no matter. The process is meant to take you to the store, but it seems to be quite flaky. If you see the store page with a $0.00 amount in the basket, this has correctly referred you for the introductory offer. Apparently the $9.99 price shown on that screen for subsequent months is a mistake; I think the correct price of $2.50 is shown on a later screen in the process. Enter your credit card details and check out if you can. If not, continue.

- Log into the CrashPlan PRO Admin Console as per these instructions, and download the CrashPlan PRO 4.9 client for Linux, and the 4.9 client for your remote console computer. Ignore the red message in the bottom left of the Admin Console about registering, and do not sign up for the free trial. Preferably use Firefox for the Linux version download – most of the other web browsers will try to unpack the .tgz archive, which you do not want to happen.

- Configure the CrashPlan PRO 4.9 client on your computer to connect to your Syno as per the usual instructions on this blog post.

- Put the downloaded Linux CrashPlan PRO 4.9 client .tgz file in the ‘public’ shared folder on your NAS. The package will no longer download this automatically as it did in previous versions.

- From the Community section of DSM Package Center, install the CrashPlan PRO 4.9 package concurrently with your existing CrashPlan 4.8.3 Syno package.

- This will stop the CrashPlan package and automatically import its configuration. Notice that it will also back up your old CrashPlan .identity file and leave it in the ‘public’ shared folder, just in case something goes wrong.

- Start the CrashPlan PRO Synology package, and connect your CrashPlan PRO console from your computer.

- You should see your protected folders as usual. At first mine reported something like “insufficient device licences”, but the next time I started up it changed to “subscription expired”.

- Uninstall the CrashPlan 4.8.3 Synology package; it is no longer required.

- At this point if the store referral didn’t work in the second step, you need to sign into the Admin Console. While signed in, navigate to this link which I was given by Code 42 support. If it works, you should see a store page with some blue font text and a $0.00 basket value. If it didn’t work you will get bounced to the Consumer Next Steps webpage: “Important Changes to CrashPlan for Home” – the one with the video of the CEO explaining the situation. I had to do this a few times before it worked. Once the store referral link worked and I had confirmed my payment details my CrashPlan PRO client immediately started working. Enjoy!
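Before installing the CrashPlan PRO package, it is worth confirming that the ‘public’ share holds exactly one CrashPlan PRO archive. The installer looks for it with the unquoted pattern CrashPlanPRO_4.*_Linux.tgz, so a leftover older archive next to the new one would probably make its file test fail and produce the download error instead. A minimal sketch, with a hypothetical function name and share path:

```shell
#!/bin/sh
# Sketch: confirm exactly one CrashPlan PRO Linux archive is present.
# The glob mirrors the installer's DOWNLOAD_FILE pattern for CrashPlanPRO.
# find_pro_tgz is a hypothetical helper, not part of the package.

find_pro_tgz() {
    dir="$1"
    count=0
    match=""
    for f in "$dir"/CrashPlanPRO_4.*_Linux.tgz; do
        [ -f "$f" ] || continue   # an unmatched glob stays literal, so skip it
        count=$((count + 1))
        match="$f"
    done
    if [ "$count" -eq 1 ]; then
        echo "$match"
        return 0
    fi
    echo "expected exactly one CrashPlanPRO_4.*_Linux.tgz in $dir, found $count" >&2
    return 1
}

# Example (hypothetical share path):
# find_pro_tgz /volume1/public
```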

Notes

- The package uses the intact CrashPlan installer directly from Code 42 Software, following acceptance of its EULA. I am complying with the directive that no one redistributes it.

- The engine daemon script checks the amount of system RAM and scales the Java heap size accordingly (up to 1024MB on models with more than 1GB of RAM). If you are backing up large backup sets, this can be overridden persistently by editing /var/packages/CrashPlan/target/syno_package.vars. If you are considering buying a NAS purely to use CrashPlan and intend to back up more than a few hundred GB, then I strongly advise buying one of the models with upgradeable RAM, since memory is very limited on the cheaper models. Many years ago I found that a 512MB heap was insufficient to back up more than 2TB of files on a Windows server: the backup engine kept restarting every few minutes until I increased the heap to 1024MB. Many users of the package have found that they have to increase the heap size or CrashPlan will halt its activity. This can be mitigated by dividing your backup into several smaller backup sets which are scheduled to be protected at different times. Note that from package version 0041, using the dedicated JRE on a 64bit Intel NAS will allow a heap size greater than 4GB since the JRE is 64bit (requires DSM 6.0 in most cases).

- If you need to manage CrashPlan from a remote location, I suggest you do so using SSH tunnelling as per this support document.

- The package supports upgrading to future versions while preserving the machine identity, logs, login details, and cache. Upgrades can now take place without requiring a login from the client afterwards.

- If you remove the package completely and re-install it later, you can re-attach to previous backups. When you log in to the Desktop Client with your existing account after a re-install, you can select “adopt computer” to merge the records, and preserve your existing backups. I haven’t tested whether this also re-attaches links to friends’ CrashPlan computers and backup sets, though the latter does seem possible in the Friends section of the GUI. It’s probably a good idea to test that this survives a package reinstall before you start relying on it. Sometimes, particularly with CrashPlan PRO I think, the adopt option is not offered. In this case you can log into CrashPlan Central and retrieve your computer’s GUID. On the CrashPlan client, double-click on the logo in the top right and you’ll enter a command line mode. You can use the GUID command to change the system’s GUID to the one you just retrieved from your account.

- The log which is displayed in the package’s Log tab is actually the activity history. If you are trying to troubleshoot an issue you will need to use an SSH session to inspect these log files:

/var/packages/CrashPlan/target/log/engine_output.log

/var/packages/CrashPlan/target/log/engine_error.log

/var/packages/CrashPlan/target/log/app.log

- When CrashPlan downloads and attempts to run an automatic update, the script will most likely fail and stop the package. This is typically caused by syntax differences in the Synology versions of certain Linux shell commands (like rm, mv, or ps). The startup script will attempt to apply the published upgrade the next time the package is started.

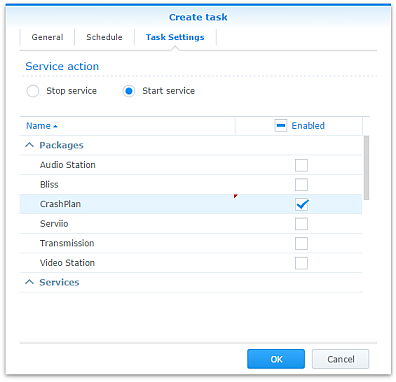

- Although CrashPlan’s activity can be scheduled within the application, in order to save RAM some users may wish to restrict running the CrashPlan engine to specific times of day using the Task Scheduler in DSM Control Panel:

Note that regardless of real-time backup, by default CrashPlan will scan the whole backup selection for changes at 3:00am. Include this time within your Task Scheduler time window or else CrashPlan will not capture file changes which occurred while it was inactive:

- If you decide to sign up for one of CrashPlan’s paid backup services as a result of my work on this, please consider donating using the PayPal button on the right of this page.
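For reference, here is the heap sizing from the start-stop-status script below, pulled out into a standalone function, along with one way to set the persistent override. This is a sketch: heap_for_ram and enable_heap_override are my own hypothetical names. The startup script does source syno_package.vars and read USR_MAX_HEAP, but this function only mirrors the RAM thresholds and does not model how that override is applied.

```shell
#!/bin/sh
# Sketch: the RAM-to-heap thresholds from start-stop-status, as a function,
# plus a helper that uncomments (or adds) USR_MAX_HEAP in syno_package.vars.
# Both function names are hypothetical; sizes are in MB.

heap_for_ram() {
    ram_mb="$1"
    if [ "$ram_mb" -le 128 ]; then
        echo 80
    elif [ "$ram_mb" -le 256 ]; then
        echo 192
    elif [ "$ram_mb" -le 512 ]; then
        echo 384
    elif [ "$ram_mb" -le 1024 ]; then
        echo 512
    else
        echo 1024
    fi
}

enable_heap_override() {
    vars_file="$1"
    size="$2"   # e.g. 1536M, same format as the commented example line
    if grep -q '^#\{0,1\}USR_MAX_HEAP=' "$vars_file"; then
        # rewrite any commented or active USR_MAX_HEAP line with the new value
        sed -i "s/^#\{0,1\}USR_MAX_HEAP=.*/USR_MAX_HEAP=${size}/" "$vars_file"
    else
        echo "USR_MAX_HEAP=${size}" >> "$vars_file"
    fi
}
```

On the NAS, the script takes its RAM figure from free (in KB, divided by 1024), so heap_for_ram $(( $(free | awk '/^Mem:/ {print $2}') / 1024 )) should show the heap the engine would choose before any override.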

Package scripts

For information, here are the package scripts so you can see what it’s going to do. You can get more information about how packages work by reading the Synology 3rd Party Developer Guide.

installer.sh

#!/bin/sh

#--------CRASHPLAN installer script

#--------package maintained at pcloadletter.co.uk

DOWNLOAD_PATH="http://download2.code42.com/installs/linux/install/${SYNOPKG_PKGNAME}"

CP_EXTRACTED_FOLDER="crashplan-install"

OLD_JNA_NEEDED="false"

[ "${SYNOPKG_PKGNAME}" == "CrashPlan" ] && DOWNLOAD_FILE="CrashPlan_4.8.3_Linux.tgz"

[ "${SYNOPKG_PKGNAME}" == "CrashPlanPRO" ] && DOWNLOAD_FILE="CrashPlanPRO_4.*_Linux.tgz"

if [ "${SYNOPKG_PKGNAME}" == "CrashPlanPROe" ]; then

CP_EXTRACTED_FOLDER="${SYNOPKG_PKGNAME}-install"

OLD_JNA_NEEDED="true"

[ "${WIZARD_VER_483}" == "true" ] && { CPPROE_VER="4.8.3"; CP_EXTRACTED_FOLDER="crashplan-install"; OLD_JNA_NEEDED="false"; }

[ "${WIZARD_VER_480}" == "true" ] && { CPPROE_VER="4.8.0"; CP_EXTRACTED_FOLDER="crashplan-install"; OLD_JNA_NEEDED="false"; }

[ "${WIZARD_VER_470}" == "true" ] && { CPPROE_VER="4.7.0"; CP_EXTRACTED_FOLDER="crashplan-install"; OLD_JNA_NEEDED="false"; }

[ "${WIZARD_VER_460}" == "true" ] && { CPPROE_VER="4.6.0"; CP_EXTRACTED_FOLDER="crashplan-install"; OLD_JNA_NEEDED="false"; }

[ "${WIZARD_VER_452}" == "true" ] && { CPPROE_VER="4.5.2"; CP_EXTRACTED_FOLDER="crashplan-install"; OLD_JNA_NEEDED="false"; }

[ "${WIZARD_VER_450}" == "true" ] && { CPPROE_VER="4.5.0"; CP_EXTRACTED_FOLDER="crashplan-install"; OLD_JNA_NEEDED="false"; }

[ "${WIZARD_VER_441}" == "true" ] && { CPPROE_VER="4.4.1"; CP_EXTRACTED_FOLDER="crashplan-install"; OLD_JNA_NEEDED="false"; }

[ "${WIZARD_VER_430}" == "true" ] && CPPROE_VER="4.3.0"

[ "${WIZARD_VER_420}" == "true" ] && CPPROE_VER="4.2.0"

[ "${WIZARD_VER_370}" == "true" ] && CPPROE_VER="3.7.0"

[ "${WIZARD_VER_364}" == "true" ] && CPPROE_VER="3.6.4"

[ "${WIZARD_VER_363}" == "true" ] && CPPROE_VER="3.6.3"

[ "${WIZARD_VER_3614}" == "true" ] && CPPROE_VER="3.6.1.4"

[ "${WIZARD_VER_353}" == "true" ] && CPPROE_VER="3.5.3"

[ "${WIZARD_VER_341}" == "true" ] && CPPROE_VER="3.4.1"

[ "${WIZARD_VER_33}" == "true" ] && CPPROE_VER="3.3"

DOWNLOAD_FILE="CrashPlanPROe_${CPPROE_VER}_Linux.tgz"

fi

DOWNLOAD_URL="${DOWNLOAD_PATH}/${DOWNLOAD_FILE}"

CPI_FILE="${SYNOPKG_PKGNAME}_*.cpi"

OPTDIR="${SYNOPKG_PKGDEST}"

VARS_FILE="${OPTDIR}/install.vars"

SYNO_CPU_ARCH="`uname -m`"

[ "${SYNO_CPU_ARCH}" == "x86_64" ] && SYNO_CPU_ARCH="i686"

[ "${SYNO_CPU_ARCH}" == "armv5tel" ] && SYNO_CPU_ARCH="armel"

[ "${SYNOPKG_DSM_ARCH}" == "armada375" ] && SYNO_CPU_ARCH="armv7l"

[ "${SYNOPKG_DSM_ARCH}" == "armada38x" ] && SYNO_CPU_ARCH="armhf"

[ "${SYNOPKG_DSM_ARCH}" == "comcerto2k" ] && SYNO_CPU_ARCH="armhf"

[ "${SYNOPKG_DSM_ARCH}" == "alpine" ] && SYNO_CPU_ARCH="armhf"

[ "${SYNOPKG_DSM_ARCH}" == "alpine4k" ] && SYNO_CPU_ARCH="armhf"

[ "${SYNOPKG_DSM_ARCH}" == "monaco" ] && SYNO_CPU_ARCH="armhf"

[ "${SYNOPKG_DSM_ARCH}" == "rtd1296" ] && SYNO_CPU_ARCH="armhf"

NATIVE_BINS_URL="http://packages.pcloadletter.co.uk/downloads/crashplan-native-${SYNO_CPU_ARCH}.tar.xz"

NATIVE_BINS_FILE="`echo ${NATIVE_BINS_URL} | sed -r "s%^.*/(.*)%\1%"`"

OLD_JNA_URL="http://packages.pcloadletter.co.uk/downloads/crashplan-native-old-${SYNO_CPU_ARCH}.tar.xz"

OLD_JNA_FILE="`echo ${OLD_JNA_URL} | sed -r "s%^.*/(.*)%\1%"`"

INSTALL_FILES="${DOWNLOAD_URL} ${NATIVE_BINS_URL}"

[ "${OLD_JNA_NEEDED}" == "true" ] && INSTALL_FILES="${INSTALL_FILES} ${OLD_JNA_URL}"

TEMP_FOLDER="`find / -maxdepth 2 -path '/volume?/@tmp' | head -n 1`"

#the Manifest folder is where friends' backup data is stored

#we set it outside the app folder so it persists after a package uninstall

MANIFEST_FOLDER="/`echo $TEMP_FOLDER | cut -f2 -d'/'`/crashplan"

LOG_FILE="${SYNOPKG_PKGDEST}/log/history.log.0"

UPGRADE_FILES="syno_package.vars conf/my.service.xml conf/service.login conf/service.model"

UPGRADE_FOLDERS="log cache"

PUBLIC_FOLDER="`synoshare --get public | sed -r "/Path/!d;s/^.*\[(.*)\].*$/\1/"`"

#dedicated JRE section

if [ "${WIZARD_JRE_CP}" == "true" ]; then

DOWNLOAD_URL="http://tinyurl.com/javaembed"

EXTRACTED_FOLDER="ejdk1.8.0_151"

#detect systems capable of running 64bit JRE which can address more than 4GB of RAM

[ "${SYNOPKG_DSM_ARCH}" == "x64" ] && SYNO_CPU_ARCH="x64"

[ "`uname -m`" == "x86_64" ] && [ ${SYNOPKG_DSM_VERSION_MAJOR} -ge 6 ] && SYNO_CPU_ARCH="x64"

if [ "${SYNO_CPU_ARCH}" == "armel" ]; then

JAVA_BINARY="ejdk-8u151-linux-arm-sflt.tar.gz"

JAVA_BUILD="ARMv5/ARMv6/ARMv7 Linux - SoftFP ABI, Little Endian 2"

elif [ "${SYNO_CPU_ARCH}" == "armv7l" ]; then

JAVA_BINARY="ejdk-8u151-linux-arm-sflt.tar.gz"

JAVA_BUILD="ARMv5/ARMv6/ARMv7 Linux - SoftFP ABI, Little Endian 2"

elif [ "${SYNO_CPU_ARCH}" == "armhf" ]; then

JAVA_BINARY="ejdk-8u151-linux-armv6-vfp-hflt.tar.gz"

JAVA_BUILD="ARMv6/ARMv7 Linux - VFP, HardFP ABI, Little Endian 1"

elif [ "${SYNO_CPU_ARCH}" == "ppc" ]; then

#Oracle have discontinued Java 8 for PowerPC after update 6

JAVA_BINARY="ejdk-8u6-fcs-b23-linux-ppc-e500v2-12_jun_2014.tar.gz"

JAVA_BUILD="Power Architecture Linux - Headless - e500v2 with double-precision SPE Floating Point Unit"

EXTRACTED_FOLDER="ejdk1.8.0_06"

DOWNLOAD_URL="http://tinyurl.com/java8ppc"

elif [ "${SYNO_CPU_ARCH}" == "i686" ]; then

JAVA_BINARY="ejdk-8u151-linux-i586.tar.gz"

JAVA_BUILD="x86 Linux Small Footprint - Headless"

elif [ "${SYNO_CPU_ARCH}" == "x64" ]; then

JAVA_BINARY="jre-8u151-linux-x64.tar.gz"

JAVA_BUILD="Linux x64"

EXTRACTED_FOLDER="jre1.8.0_151"

DOWNLOAD_URL="http://tinyurl.com/java8x64"

fi

fi

JAVA_BINARY=`echo ${JAVA_BINARY} | cut -f1 -d'.'`

source /etc/profile

pre_checks ()

{

#These checks are called from preinst and from preupgrade functions to prevent failures resulting in a partially upgraded package

if [ "${WIZARD_JRE_CP}" == "true" ]; then

synoshare --get public > /dev/null || {

echo "A shared folder called 'public' could not be found - note this name is case-sensitive. " >> $SYNOPKG_TEMP_LOGFILE

echo "Please create this using the Shared Folder DSM Control Panel and try again." >> $SYNOPKG_TEMP_LOGFILE

#brace group rather than a subshell, so that exit aborts the whole script

exit 1

}

JAVA_BINARY_FOUND=

[ -f ${PUBLIC_FOLDER}/${JAVA_BINARY}.tar.gz ] && JAVA_BINARY_FOUND=true

[ -f ${PUBLIC_FOLDER}/${JAVA_BINARY}.tar ] && JAVA_BINARY_FOUND=true

[ -f ${PUBLIC_FOLDER}/${JAVA_BINARY}.tar.tar ] && JAVA_BINARY_FOUND=true

[ -f ${PUBLIC_FOLDER}/${JAVA_BINARY}.gz ] && JAVA_BINARY_FOUND=true

if [ -z "${JAVA_BINARY_FOUND}" ]; then

echo "Java binary bundle not found. " >> $SYNOPKG_TEMP_LOGFILE

echo "I was expecting the file ${PUBLIC_FOLDER}/${JAVA_BINARY}.tar.gz. " >> $SYNOPKG_TEMP_LOGFILE

echo "Please agree to the Oracle licence at ${DOWNLOAD_URL}, then download the '${JAVA_BUILD}' package" >> $SYNOPKG_TEMP_LOGFILE

echo "and place it in the 'public' shared folder on your NAS. This download cannot be automated even if " >> $SYNOPKG_TEMP_LOGFILE

echo "displaying a package EULA could potentially cover the legal aspect, because files hosted on Oracle's " >> $SYNOPKG_TEMP_LOGFILE

echo "server are protected by a session cookie requiring a JavaScript enabled browser." >> $SYNOPKG_TEMP_LOGFILE

exit 1

fi

else

if [ -z "${JAVA_HOME}" ]; then

echo "Java is not installed or not properly configured. JAVA_HOME is not defined. " >> $SYNOPKG_TEMP_LOGFILE

echo "Download and install the Java Synology package from http://wp.me/pVshC-z5" >> $SYNOPKG_TEMP_LOGFILE

exit 1

fi

if [ ! -f ${JAVA_HOME}/bin/java ]; then

echo "Java is not installed or not properly configured. The Java binary could not be located. " >> $SYNOPKG_TEMP_LOGFILE

echo "Download and install the Java Synology package from http://wp.me/pVshC-z5" >> $SYNOPKG_TEMP_LOGFILE

exit 1

fi

if [ "${WIZARD_JRE_SYS}" == "true" ]; then

JAVA_VER=`java -version 2>&1 | sed -r "/^.* version/!d;s/^.* version \"[0-9]\.([0-9]).*$/\1/"`

if [ ${JAVA_VER} -lt 8 ]; then

echo "This version of CrashPlan requires Java 8 or newer. Please update your Java package. " >> $SYNOPKG_TEMP_LOGFILE

exit 1

fi

fi

fi

}

preinst ()

{

pre_checks

cd ${TEMP_FOLDER}

for WGET_URL in ${INSTALL_FILES}

do

WGET_FILENAME="`echo ${WGET_URL} | sed -r "s%^.*/(.*)%\1%"`"

[ -f ${TEMP_FOLDER}/${WGET_FILENAME} ] && rm ${TEMP_FOLDER}/${WGET_FILENAME}

wget ${WGET_URL}

if [ $? -ne 0 ]; then

if [ -d ${PUBLIC_FOLDER} ] && [ -f ${PUBLIC_FOLDER}/${WGET_FILENAME} ]; then

cp ${PUBLIC_FOLDER}/${WGET_FILENAME} ${TEMP_FOLDER}

else

echo "There was a problem downloading ${WGET_FILENAME} from the official download link, " >> $SYNOPKG_TEMP_LOGFILE

echo "which was \"${WGET_URL}\" " >> $SYNOPKG_TEMP_LOGFILE

echo "Alternatively, you may download this file manually and place it in the 'public' shared folder. " >> $SYNOPKG_TEMP_LOGFILE

exit 1

fi

fi

done

exit 0

}

postinst ()

{

if [ "${WIZARD_JRE_CP}" == "true" ]; then

#extract Java (Web browsers love to interfere with .tar.gz files)

cd ${PUBLIC_FOLDER}

if [ -f ${JAVA_BINARY}.tar.gz ]; then

#Firefox seems to be the only browser that leaves it alone

tar xzf ${JAVA_BINARY}.tar.gz

elif [ -f ${JAVA_BINARY}.gz ]; then

#Chrome

tar xzf ${JAVA_BINARY}.gz

elif [ -f ${JAVA_BINARY}.tar ]; then

#Safari

tar xf ${JAVA_BINARY}.tar

elif [ -f ${JAVA_BINARY}.tar.tar ]; then

#Internet Explorer

tar xzf ${JAVA_BINARY}.tar.tar

fi

mv ${EXTRACTED_FOLDER} ${SYNOPKG_PKGDEST}/jre-syno

JRE_PATH="`find ${OPTDIR}/jre-syno/ -name jre`"

[ -z "${JRE_PATH}" ] && JRE_PATH=${OPTDIR}/jre-syno

#change owner of folder tree

chown -R root:root ${SYNOPKG_PKGDEST}

fi

#extract CPU-specific additional binaries

mkdir ${SYNOPKG_PKGDEST}/bin

cd ${SYNOPKG_PKGDEST}/bin

tar xJf ${TEMP_FOLDER}/${NATIVE_BINS_FILE} && rm ${TEMP_FOLDER}/${NATIVE_BINS_FILE}

[ "${OLD_JNA_NEEDED}" == "true" ] && tar xJf ${TEMP_FOLDER}/${OLD_JNA_FILE} && rm ${TEMP_FOLDER}/${OLD_JNA_FILE}

#extract main archive

cd ${TEMP_FOLDER}

tar xzf ${TEMP_FOLDER}/${DOWNLOAD_FILE} && rm ${TEMP_FOLDER}/${DOWNLOAD_FILE}

#extract cpio archive

cd ${SYNOPKG_PKGDEST}

cat "${TEMP_FOLDER}/${CP_EXTRACTED_FOLDER}"/${CPI_FILE} | gzip -d -c - | ${SYNOPKG_PKGDEST}/bin/cpio -i --no-preserve-owner

echo "#uncomment to expand Java max heap size beyond prescribed value (will survive upgrades)" > ${SYNOPKG_PKGDEST}/syno_package.vars

echo "#you probably only want more than the recommended 1024M if you're backing up extremely large volumes of files" >> ${SYNOPKG_PKGDEST}/syno_package.vars

echo "#USR_MAX_HEAP=1024M" >> ${SYNOPKG_PKGDEST}/syno_package.vars

echo >> ${SYNOPKG_PKGDEST}/syno_package.vars

cp ${TEMP_FOLDER}/${CP_EXTRACTED_FOLDER}/scripts/CrashPlanEngine ${OPTDIR}/bin

cp ${TEMP_FOLDER}/${CP_EXTRACTED_FOLDER}/scripts/run.conf ${OPTDIR}/bin

mkdir -p ${MANIFEST_FOLDER}/backupArchives

#save install variables which Crashplan expects its own installer script to create

echo TARGETDIR=${SYNOPKG_PKGDEST} > ${VARS_FILE}

echo BINSDIR=/bin >> ${VARS_FILE}

echo MANIFESTDIR=${MANIFEST_FOLDER}/backupArchives >> ${VARS_FILE}

#leave these ones out which should help upgrades from Code42 to work (based on examining an upgrade script)

#echo INITDIR=/etc/init.d >> ${VARS_FILE}

#echo RUNLVLDIR=/usr/syno/etc/rc.d >> ${VARS_FILE}

echo INSTALLDATE=`date +%Y%m%d` >> ${VARS_FILE}

[ "${WIZARD_JRE_CP}" == "true" ] && echo JAVACOMMON=${JRE_PATH}/bin/java >> ${VARS_FILE}

[ "${WIZARD_JRE_SYS}" == "true" ] && echo JAVACOMMON=\${JAVA_HOME}/bin/java >> ${VARS_FILE}

cat ${TEMP_FOLDER}/${CP_EXTRACTED_FOLDER}/install.defaults >> ${VARS_FILE}

#remove temp files

rm -r ${TEMP_FOLDER}/${CP_EXTRACTED_FOLDER}

#add firewall config

/usr/syno/bin/servicetool --install-configure-file --package /var/packages/${SYNOPKG_PKGNAME}/scripts/${SYNOPKG_PKGNAME}.sc > /dev/null

#amend CrashPlanPROe client version

[ "${SYNOPKG_PKGNAME}" == "CrashPlanPROe" ] && sed -i -r "s/^version=\".*(-.*$)/version=\"${CPPROE_VER}\1/" /var/packages/${SYNOPKG_PKGNAME}/INFO

#are we transitioning an existing CrashPlan account to CrashPlan For Small Business?

if [ "${SYNOPKG_PKGNAME}" == "CrashPlanPRO" ]; then

if [ -e /var/packages/CrashPlan/scripts/start-stop-status ]; then

/var/packages/CrashPlan/scripts/start-stop-status stop

cp /var/lib/crashplan/.identity ${PUBLIC_FOLDER}/crashplan-identity.bak

cp -R /var/packages/CrashPlan/target/conf/ ${OPTDIR}/

fi

fi

exit 0

}

preuninst ()

{

`dirname $0`/start-stop-status stop

exit 0

}

postuninst ()

{

if [ -f ${SYNOPKG_PKGDEST}/syno_package.vars ]; then

source ${SYNOPKG_PKGDEST}/syno_package.vars

fi

[ -e ${OPTDIR}/lib/libffi.so.5 ] && rm ${OPTDIR}/lib/libffi.so.5

#delete symlink if it no longer resolves - PowerPC only

if [ ! -e /lib/libffi.so.5 ]; then

[ -L /lib/libffi.so.5 ] && rm /lib/libffi.so.5

fi

#remove firewall config

if [ "${SYNOPKG_PKG_STATUS}" == "UNINSTALL" ]; then

/usr/syno/bin/servicetool --remove-configure-file --package ${SYNOPKG_PKGNAME}.sc > /dev/null

fi

exit 0

}

preupgrade ()

{

`dirname $0`/start-stop-status stop

pre_checks

#if identity exists back up config

if [ -f /var/lib/crashplan/.identity ]; then

mkdir -p ${SYNOPKG_PKGDEST}/../${SYNOPKG_PKGNAME}_data_mig/conf

for FILE_TO_MIGRATE in ${UPGRADE_FILES}; do

if [ -f ${OPTDIR}/${FILE_TO_MIGRATE} ]; then

cp ${OPTDIR}/${FILE_TO_MIGRATE} ${SYNOPKG_PKGDEST}/../${SYNOPKG_PKGNAME}_data_mig/${FILE_TO_MIGRATE}

fi

done

for FOLDER_TO_MIGRATE in ${UPGRADE_FOLDERS}; do

if [ -d ${OPTDIR}/${FOLDER_TO_MIGRATE} ]; then

mv ${OPTDIR}/${FOLDER_TO_MIGRATE} ${SYNOPKG_PKGDEST}/../${SYNOPKG_PKGNAME}_data_mig

fi

done

fi

exit 0

}

postupgrade ()

{

#use the migrated identity and config data from the previous version

if [ -f ${SYNOPKG_PKGDEST}/../${SYNOPKG_PKGNAME}_data_mig/conf/my.service.xml ]; then

for FILE_TO_MIGRATE in ${UPGRADE_FILES}; do

if [ -f ${SYNOPKG_PKGDEST}/../${SYNOPKG_PKGNAME}_data_mig/${FILE_TO_MIGRATE} ]; then

mv ${SYNOPKG_PKGDEST}/../${SYNOPKG_PKGNAME}_data_mig/${FILE_TO_MIGRATE} ${OPTDIR}/${FILE_TO_MIGRATE}

fi

done

for FOLDER_TO_MIGRATE in ${UPGRADE_FOLDERS}; do

if [ -d ${SYNOPKG_PKGDEST}/../${SYNOPKG_PKGNAME}_data_mig/${FOLDER_TO_MIGRATE} ]; then

mv ${SYNOPKG_PKGDEST}/../${SYNOPKG_PKGNAME}_data_mig/${FOLDER_TO_MIGRATE} ${OPTDIR}

fi

done

rmdir ${SYNOPKG_PKGDEST}/../${SYNOPKG_PKGNAME}_data_mig/conf

rmdir ${SYNOPKG_PKGDEST}/../${SYNOPKG_PKGNAME}_data_mig

#make CrashPlan log entry

TIMESTAMP="`date "+%D %I:%M%p"`"

echo "I ${TIMESTAMP} Synology Package Center updated ${SYNOPKG_PKGNAME} to version ${SYNOPKG_PKGVER}" >> ${LOG_FILE}

fi

exit 0

}
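The preupgrade and postupgrade functions above implement a simple stash-and-restore round trip for the machine identity, settings, logs and cache. Here it is pared down into two standalone functions you can try against scratch directories; stash_config and restore_config are illustrative names, not part of the package, and unlike the real preupgrade the sketch skips the check for an existing /var/lib/crashplan/.identity.

```shell
#!/bin/sh
# Sketch of the preupgrade/postupgrade stash-and-restore round trip.
# stash_config and restore_config are illustrative names; the file and
# folder lists are copied from the installer's UPGRADE_FILES/UPGRADE_FOLDERS.

UPGRADE_FILES="syno_package.vars conf/my.service.xml conf/service.login conf/service.model"
UPGRADE_FOLDERS="log cache"

# copy settings files and move log/cache folders out of the package folder
stash_config() {
    src="$1"
    stage="$2"
    mkdir -p "$stage/conf"
    for f in $UPGRADE_FILES; do
        [ -f "$src/$f" ] && cp "$src/$f" "$stage/$f"
    done
    for d in $UPGRADE_FOLDERS; do
        [ -d "$src/$d" ] && mv "$src/$d" "$stage/"
    done
    return 0
}

# move everything back into the freshly installed package folder, then
# remove the (now empty) staging folder
restore_config() {
    stage="$1"
    dest="$2"
    for f in $UPGRADE_FILES; do
        if [ -f "$stage/$f" ]; then
            mkdir -p "$(dirname "$dest/$f")"
            mv "$stage/$f" "$dest/$f"
        fi
    done
    for d in $UPGRADE_FOLDERS; do
        [ -d "$stage/$d" ] && mv "$stage/$d" "$dest/"
    done
    rmdir "$stage/conf" "$stage"
}
```

Staging beside the package folder (the real scripts use ${SYNOPKG_PKGDEST}/../${SYNOPKG_PKGNAME}_data_mig) keeps the data out of the way while Package Center replaces the package contents.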

start-stop-status.sh

#!/bin/sh

#--------CRASHPLAN start-stop-status script

#--------package maintained at pcloadletter.co.uk

TEMP_FOLDER="`find / -maxdepth 2 -path '/volume?/@tmp' | head -n 1`"

MANIFEST_FOLDER="/`echo $TEMP_FOLDER | cut -f2 -d'/'`/crashplan"

ENGINE_CFG="run.conf"

PKG_FOLDER="`dirname $0 | cut -f1-4 -d'/'`"

DNAME="`dirname $0 | cut -f4 -d'/'`"

OPTDIR="${PKG_FOLDER}/target"

PID_FILE="${OPTDIR}/${DNAME}.pid"

DLOG="${OPTDIR}/log/history.log.0"

CFG_PARAM="SRV_JAVA_OPTS"

JAVA_MIN_HEAP=`grep "^${CFG_PARAM}=" "${OPTDIR}/bin/${ENGINE_CFG}" | sed -r "s/^.*-Xms([0-9]+)[Mm] .*$/\1/"`

SYNO_CPU_ARCH="`uname -m`"

TIMESTAMP="`date "+%D %I:%M%p"`"

FULL_CP="${OPTDIR}/lib/com.backup42.desktop.jar:${OPTDIR}/lang"

source ${OPTDIR}/install.vars

source /etc/profile

source /root/.profile

start_daemon ()

{

#check persistent variables from syno_package.vars

USR_MAX_HEAP=0

if [ -f ${OPTDIR}/syno_package.vars ]; then

source ${OPTDIR}/syno_package.vars

fi

USR_MAX_HEAP=`echo $USR_MAX_HEAP | sed -e "s/[mM]//"`

#do we need to restore the identity file - has a DSM upgrade scrubbed /var/lib/crashplan?

if [ ! -e /var/lib/crashplan ]; then

mkdir /var/lib/crashplan

[ -e ${OPTDIR}/conf/var-backup/.identity ] && cp ${OPTDIR}/conf/var-backup/.identity /var/lib/crashplan/

fi

#fix up some of the binary paths and fix some command syntax for busybox

#moved this to start-stop-status.sh from installer.sh because Code42 push updates and these

#new scripts will need this treatment too

find ${OPTDIR}/ -name "*.sh" | while IFS="" read -r FILE_TO_EDIT; do

if [ -e ${FILE_TO_EDIT} ]; then

#this list of substitutions will probably need expanding as new CrashPlan updates are released

sed -i "s%^#!/bin/bash%#!/bin/sh%" "${FILE_TO_EDIT}"

sed -i -r "s%(^\s*)(/bin/cpio |cpio ) %\1/${OPTDIR}/bin/cpio %" "${FILE_TO_EDIT}"

sed -i -r "s%(^\s*)(/bin/ps|ps) [^w][^\|]*\|%\1/bin/ps w \|%" "${FILE_TO_EDIT}"

sed -i -r "s%\`ps [^w][^\|]*\|%\`ps w \|%" "${FILE_TO_EDIT}"

sed -i -r "s%^ps [^w][^\|]*\|%ps w \|%" "${FILE_TO_EDIT}"

sed -i "s/rm -fv/rm -f/" "${FILE_TO_EDIT}"

sed -i "s/mv -fv/mv -f/" "${FILE_TO_EDIT}"

fi

done

#use this daemon init script rather than the unreliable Code42 stock one which greps the ps output

sed -i "s%^ENGINE_SCRIPT=.*$%ENGINE_SCRIPT=$0%" ${OPTDIR}/bin/restartLinux.sh

#any downloaded upgrade script will usually have failed despite the above changes

#so ignore the script and explicitly extract the new java code using the chrisnelson.ca method

#thanks to Jeff Bingham for tweaks

UPGRADE_JAR=`find ${OPTDIR}/upgrade -maxdepth 1 -name "*.jar" | tail -1`

if [ -n "${UPGRADE_JAR}" ]; then

rm ${OPTDIR}/*.pid > /dev/null

#make CrashPlan log entry

echo "I ${TIMESTAMP} Synology extracting upgrade from ${UPGRADE_JAR}" >> ${DLOG}

UPGRADE_VER=`echo ${SCRIPT_HOME} | sed -r "s/^.*\/([0-9_]+)\.[0-9]+/\1/"`

#DSM 6.0 no longer includes unzip, use 7z instead

unzip -o ${OPTDIR}/upgrade/${UPGRADE_VER}.jar "*.jar" -d ${OPTDIR}/lib/ || 7z e -y ${OPTDIR}/upgrade/${UPGRADE_VER}.jar "*.jar" -o${OPTDIR}/lib/ > /dev/null

unzip -o ${OPTDIR}/upgrade/${UPGRADE_VER}.jar "lang/*" -d ${OPTDIR} || 7z e -y ${OPTDIR}/upgrade/${UPGRADE_VER}.jar "lang/*" -o${OPTDIR} > /dev/null

mv ${UPGRADE_JAR} ${TEMP_FOLDER}/ > /dev/null

exec $0

fi

#updates may also overwrite our native binaries

[ -e ${OPTDIR}/bin/libffi.so.5 ] && cp -f ${OPTDIR}/bin/libffi.so.5 ${OPTDIR}/lib/

[ -e ${OPTDIR}/bin/libjtux.so ] && cp -f ${OPTDIR}/bin/libjtux.so ${OPTDIR}/

[ -e ${OPTDIR}/bin/jna-3.2.5.jar ] && cp -f ${OPTDIR}/bin/jna-3.2.5.jar ${OPTDIR}/lib/

if [ -e ${OPTDIR}/bin/jna.jar ] && [ -e ${OPTDIR}/lib/jna.jar ]; then

cp -f ${OPTDIR}/bin/jna.jar ${OPTDIR}/lib/

fi

#create or repair libffi.so.5 symlink if a DSM upgrade has removed it - PowerPC only

if [ -e ${OPTDIR}/lib/libffi.so.5 ]; then

if [ ! -e /lib/libffi.so.5 ]; then

#if it doesn't exist, but is still a link then it's a broken link and should be deleted first

[ -L /lib/libffi.so.5 ] && rm /lib/libffi.so.5

ln -s ${OPTDIR}/lib/libffi.so.5 /lib/libffi.so.5

fi

fi

#set appropriate Java max heap size

RAM=$((`free | grep Mem: | sed -e "s/^ *Mem: *\([0-9]*\).*$/\1/"`/1024))

if [ $RAM -le 128 ]; then

JAVA_MAX_HEAP=80

elif [ $RAM -le 256 ]; then

JAVA_MAX_HEAP=192

elif [ $RAM -le 512 ]; then

JAVA_MAX_HEAP=384

elif [ $RAM -le 1024 ]; then

JAVA_MAX_HEAP=512

elif [ $RAM -gt 1024 ]; then

JAVA_MAX_HEAP=1024

fi

if [ $USR_MAX_HEAP -gt $JAVA_MAX_HEAP ]; then

JAVA_MAX_HEAP=${USR_MAX_HEAP}

fi

if [ $JAVA_MAX_HEAP -lt $JAVA_MIN_HEAP ]; then

#can't have a max heap lower than min heap (ARM low RAM systems)

JAVA_MAX_HEAP=$JAVA_MIN_HEAP

fi

sed -i -r "s/(^${CFG_PARAM}=.*) -Xmx[0-9]+[mM] (.*$)/\1 -Xmx${JAVA_MAX_HEAP}m \2/" "${OPTDIR}/bin/${ENGINE_CFG}"

#disable the use of the x86-optimized external Fast MD5 library if running on ARM and PPC CPUs

#seems to be the default behaviour now but that may change again

[ "${SYNO_CPU_ARCH}" = "x86_64" ] && SYNO_CPU_ARCH="i686"

if [ "${SYNO_CPU_ARCH}" != "i686" ]; then

grep "^${CFG_PARAM}=.*c42\.native\.md5\.enabled" "${OPTDIR}/bin/${ENGINE_CFG}" > /dev/null \

|| sed -i -r "s/(^${CFG_PARAM}=\".*)\"$/\1 -Dc42.native.md5.enabled=false\"/" "${OPTDIR}/bin/${ENGINE_CFG}"

fi

#move the Java temp directory from the default of /tmp

grep "^${CFG_PARAM}=.*Djava\.io\.tmpdir" "${OPTDIR}/bin/${ENGINE_CFG}" > /dev/null \

|| sed -i -r "s%(^${CFG_PARAM}=\".*)\"$%\1 -Djava.io.tmpdir=${TEMP_FOLDER}\"%" "${OPTDIR}/bin/${ENGINE_CFG}"

#now edit the XML config file, which only exists after first run

if [ -f ${OPTDIR}/conf/my.service.xml ]; then

#allow direct connections from CrashPlan Desktop client on remote systems

#you must edit the value of serviceHost in conf/ui.properties on the client you connect with

#users report that this value is sometimes reset so now it's set every service startup

sed -i "s/<serviceHost>127\.0\.0\.1<\/serviceHost>/<serviceHost>0\.0\.0\.0<\/serviceHost>/" "${OPTDIR}/conf/my.service.xml"

#default changed in CrashPlan 4.3

sed -i "s/<serviceHost>localhost<\/serviceHost>/<serviceHost>0\.0\.0\.0<\/serviceHost>/" "${OPTDIR}/conf/my.service.xml"

#since CrashPlan 4.4 another config file to allow remote console connections

sed -i "s/127\.0\.0\.1/0\.0\.0\.0/" /var/lib/crashplan/.ui_info

#this change is made only once in case you want to customize the friends' backup location

if [ "${MANIFEST_PATH_SET}" != "True" ]; then

#keep friends' backup data outside the application folder to make accidental deletion less likely

sed -i "s%<manifestPath>.*</manifestPath>%<manifestPath>${MANIFEST_FOLDER}/backupArchives/</manifestPath>%" "${OPTDIR}/conf/my.service.xml"

echo "MANIFEST_PATH_SET=True" >> ${OPTDIR}/syno_package.vars

fi

#since CrashPlan version 3.5.3 the value javaMemoryHeapMax also needs setting to match that used in bin/run.conf

sed -i -r "s%(<javaMemoryHeapMax>)[0-9]+[mM](</javaMemoryHeapMax>)%\1${JAVA_MAX_HEAP}m\2%" "${OPTDIR}/conf/my.service.xml"

#make sure CrashPlan is not binding to the IPv6 stack

grep "\-Djava\.net\.preferIPv4Stack=true" "${OPTDIR}/bin/${ENGINE_CFG}" > /dev/null \

|| sed -i -r "s/(^${CFG_PARAM}=\".*)\"$/\1 -Djava.net.preferIPv4Stack=true\"/" "${OPTDIR}/bin/${ENGINE_CFG}"

else

echo "Check the package log to ensure the package has started successfully, then stop and restart the package to allow desktop client connections." > "${SYNOPKG_TEMP_LOGFILE}"

fi

#increase the system-wide maximum number of open files from Synology default of 24466

[ `cat /proc/sys/fs/file-max` -lt 65536 ] && echo "65536" > /proc/sys/fs/file-max

#raise the maximum open file count from the Synology default of 1024 - thanks Casper K. for figuring this out

#http://support.code42.com/Administrator/3.6_And_4.0/Troubleshooting/Too_Many_Open_Files

ulimit -n 65536

#ensure that Code 42 have not amended install.vars to force the use of their own (Intel) JRE

if [ -e ${OPTDIR}/jre-syno ]; then

JRE_PATH="`find ${OPTDIR}/jre-syno/ -name jre`"

[ -z "${JRE_PATH}" ] && JRE_PATH=${OPTDIR}/jre-syno

sed -i -r "s|^(JAVACOMMON=).*$|\1\${JRE_PATH}/bin/java|" ${OPTDIR}/install.vars

#if missing, set timezone and locale for dedicated JRE

if [ -z "${TZ}" ]; then

SYNO_TZ=`cat /etc/synoinfo.conf | grep timezone | cut -f2 -d'"'`

#fix for DST time in DSM 5.2 thanks to MinimServer Syno package author

[ -e /usr/share/zoneinfo/Timezone/synotztable.json ] \

&& SYNO_TZ=`jq ".${SYNO_TZ} | .nameInTZDB" /usr/share/zoneinfo/Timezone/synotztable.json | sed -e "s/\"//g"` \

|| SYNO_TZ=`grep "^${SYNO_TZ}" /usr/share/zoneinfo/Timezone/tzname | sed -e "s/^.*= //"`

export TZ=${SYNO_TZ}

fi

[ -z "${LANG}" ] && export LANG=en_US.utf8

export CLASSPATH=.:${OPTDIR}/jre-syno/lib

else

sed -i -r "s|^(JAVACOMMON=).*$|\1\${JAVA_HOME}/bin/java|" ${OPTDIR}/install.vars

fi

source ${OPTDIR}/bin/run.conf

source ${OPTDIR}/install.vars

cd ${OPTDIR}

$JAVACOMMON $SRV_JAVA_OPTS -classpath $FULL_CP com.backup42.service.CPService > ${OPTDIR}/log/engine_output.log 2> ${OPTDIR}/log/engine_error.log &

if [ $! -gt 0 ]; then

echo $! > $PID_FILE

renice 19 $! > /dev/null

if [ -z "${SYNOPKG_PKGDEST}" ]; then

#script was manually invoked, need this to show status change in Package Center

[ -e ${PKG_FOLDER}/enabled ] || touch ${PKG_FOLDER}/enabled

fi

else

echo "${DNAME} failed to start, check ${OPTDIR}/log/engine_error.log" > "${SYNOPKG_TEMP_LOGFILE}"

echo "${DNAME} failed to start, check ${OPTDIR}/log/engine_error.log" >&2

exit 1

fi

}

stop_daemon ()

{

echo "I ${TIMESTAMP} Stopping ${DNAME}" >> ${DLOG}

kill `cat ${PID_FILE}`

wait_for_status 1 20 || kill -9 `cat ${PID_FILE}`

rm -f ${PID_FILE}

if [ -z "${SYNOPKG_PKGDEST}" ]; then

#script was manually invoked, need this to show status change in Package Center

[ -e ${PKG_FOLDER}/enabled ] && rm ${PKG_FOLDER}/enabled

fi

#backup identity file in case DSM upgrade removes it

[ -e ${OPTDIR}/conf/var-backup ] || mkdir ${OPTDIR}/conf/var-backup

cp /var/lib/crashplan/.identity ${OPTDIR}/conf/var-backup/

}

daemon_status ()

{

if [ -f ${PID_FILE} ] && kill -0 `cat ${PID_FILE}` > /dev/null 2>&1; then

return

fi

rm -f ${PID_FILE}

return 1

}

wait_for_status ()

{

counter=$2

while [ ${counter} -gt 0 ]; do

daemon_status

[ $? -eq $1 ] && return

let counter=counter-1

sleep 1

done

return 1

}

case $1 in

start)

if daemon_status; then

echo ${DNAME} is already running with PID `cat ${PID_FILE}`

exit 0

else

echo Starting ${DNAME} ...

start_daemon

exit $?

fi

;;

stop)

if daemon_status; then

echo Stopping ${DNAME} ...

stop_daemon

exit $?

else

echo ${DNAME} is not running

exit 0

fi

;;

restart)

stop_daemon

start_daemon

exit $?

;;

status)

if daemon_status; then

echo ${DNAME} is running with PID `cat ${PID_FILE}`

exit 0

else

echo ${DNAME} is not running

exit 1

fi

;;

log)

echo "${DLOG}"

exit 0

;;

*)

echo "Usage: $0 {start|stop|status|restart|log}" >&2

exit 1

;;

esac
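The heap-size rules in `start_daemon()` are spread over several `if` blocks. The function below is a minimal standalone restatement of them, handy for checking what value a given NAS would end up with. `pick_max_heap` is a hypothetical name, not part of the package; the thresholds are copied from the script above, and the 32MB minimum in the example is just an illustrative value.

```shell
#!/bin/sh
# Hypothetical helper restating the max-heap rules from start_daemon().
# Arguments (all in MB): total RAM, user override from syno_package.vars
# (0 = none), and the -Xms minimum heap taken from run.conf.
pick_max_heap () {
  ram=$1
  usr=$2
  min=$3
  # default heap by installed RAM
  if [ "$ram" -le 128 ]; then
    heap=80
  elif [ "$ram" -le 256 ]; then
    heap=192
  elif [ "$ram" -le 512 ]; then
    heap=384
  elif [ "$ram" -le 1024 ]; then
    heap=512
  else
    heap=1024
  fi
  # a larger user-defined value (USR_MAX_HEAP) takes precedence
  if [ "$usr" -gt "$heap" ]; then
    heap=$usr
  fi
  # the max heap can never be lower than the min heap
  if [ "$heap" -lt "$min" ]; then
    heap=$min
  fi
  echo "$heap"
}

# Example: a 256MB NAS with no override and a 32MB minimum heap
pick_max_heap 256 0 32   # prints 192
```

A user override only ever raises the limit: `pick_max_heap 512 2048 32` returns 2048, mirroring how `USR_MAX_HEAP` is meant for very large backup sets.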

install_uifile & upgrade_uifile

[

{

"step_title": "Client Version Selection",

"items": [

{

"type": "singleselect",

"desc": "Please select the CrashPlanPROe client version that is appropriate for your backup destination server:",

"subitems": [

{

"key": "WIZARD_VER_483",

"desc": "4.8.3",

"defaultValue": true

}, {

"key": "WIZARD_VER_480",

"desc": "4.8.0",

"defaultValue": false

},

{

"key": "WIZARD_VER_470",

"desc": "4.7.0",

"defaultValue": false

},

{

"key": "WIZARD_VER_460",

"desc": "4.6.0",

"defaultValue": false

},

{

"key": "WIZARD_VER_452",

"desc": "4.5.2",

"defaultValue": false

},

{

"key": "WIZARD_VER_450",

"desc": "4.5.0",

"defaultValue": false

},

{

"key": "WIZARD_VER_441",

"desc": "4.4.1",

"defaultValue": false

},

{

"key": "WIZARD_VER_430",

"desc": "4.3.0",

"defaultValue": false

},

{

"key": "WIZARD_VER_420",

"desc": "4.2.0",

"defaultValue": false

},

{

"key": "WIZARD_VER_370",

"desc": "3.7.0",

"defaultValue": false

},

{

"key": "WIZARD_VER_364",

"desc": "3.6.4",

"defaultValue": false

},

{

"key": "WIZARD_VER_363",

"desc": "3.6.3",

"defaultValue": false

},

{

"key": "WIZARD_VER_3614",

"desc": "3.6.1.4",

"defaultValue": false

},

{

"key": "WIZARD_VER_353",

"desc": "3.5.3",

"defaultValue": false

},

{

"key": "WIZARD_VER_341",

"desc": "3.4.1",

"defaultValue": false

},

{

"key": "WIZARD_VER_33",

"desc": "3.3",

"defaultValue": false

}

]

}

]

},

{

"step_title": "Java Runtime Environment Selection",

"items": [

{

"type": "singleselect",

"desc": "Please select the Java version which you would like CrashPlan to use:",

"subitems": [

{

"key": "WIZARD_JRE_SYS",

"desc": "Default system Java version",

"defaultValue": false

},

{

"key": "WIZARD_JRE_CP",

"desc": "Dedicated installation of Java 8",

"defaultValue": true

}

]

}

]

}

]
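The wizard above only records which option was ticked. A minimal sketch of how an install script could turn that into a client version string, assuming DSM exports each wizard `key` to the package scripts as an environment variable set to `true` for the selected option (treat this as an assumption). `wizard_client_version` is a hypothetical helper; the package's real installer may handle this differently.

```shell
#!/bin/sh
# Hypothetical helper: map the WIZARD_VER_* key ticked in the install wizard
# to a CrashPlan PROe client version string. Assumes DSM exports each wizard
# key as an environment variable set to "true" for the chosen option.
wizard_client_version () {
  # "key suffix:version" pairs, in the same order as the wizard list
  for pair in 483:4.8.3 480:4.8.0 470:4.7.0 460:4.6.0 452:4.5.2 450:4.5.0 \
              441:4.4.1 430:4.3.0 420:4.2.0 370:3.7.0 364:3.6.4 363:3.6.3 \
              3614:3.6.1.4 353:3.5.3 341:3.4.1 33:3.3; do
    key="WIZARD_VER_${pair%%:*}"
    # read the variable whose name is held in $key (POSIX sh has no ${!key})
    eval "val=\${${key}:-}"
    if [ "$val" = "true" ]; then
      echo "${pair#*:}"
      return 0
    fi
  done
  # nothing selected
  return 1
}

# Example: simulate the wizard having selected 4.8.0
WIZARD_VER_480=true
wizard_client_version   # prints 4.8.0
```

Using `eval` for the indirect lookup keeps the sketch POSIX sh compatible, which matters on BusyBox-based DSM where bash cannot be assumed.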

Changelog:

- 0047 30/Oct/17 – Updated dedicated Java version to 8 update 151, added support for additional Intel CPUs in x18 Synology products.

- 0046 26/Aug/17 – Updated to CrashPlan PRO 4.9, added support for migration from CrashPlan For Home to CrashPlan For Small Business (CrashPlan PRO). Please read the Migration section on this page for instructions.

- 0045 02/Aug/17 – Updated to CrashPlan 4.8.3, updated dedicated Java version to 8 update 144

- 0044 21/Jan/17 – Updated dedicated Java version to 8 update 121

- 0043 07/Jan/17 – Updated dedicated Java version to 8 update 111, added support for Intel Broadwell and Grantley CPUs

- 0042 03/Oct/16 – Updated to CrashPlan 4.8.0, Java 8 is now required, added an optional dedicated Java 8 Runtime instead of the default system one, including 64-bit Java support on 64-bit Intel CPUs to permit memory allocation larger than 4GB. Support for non-Intel platforms withdrawn owing to Code42’s reliance on the proprietary native code library libc42archive.so

- 0041 20/Jul/16 – Improved auto-upgrade compatibility (hopefully), added option to have CrashPlan use a dedicated Java 7 Runtime instead of the default system one, including 64-bit Java support on 64-bit Intel CPUs to permit memory allocation larger than 4GB

- 0040 25/May/16 – Added cpio to the path in the running context of start-stop-status.sh

- 0039 25/May/16 – Updated to CrashPlan 4.7.0, at each launch forced the use of the system JRE over the CrashPlan bundled Intel one, added Maven build of JNA 4.1.0 for ARMv7 systems consistent with the version bundled with CrashPlan

- 0038 27/Apr/16 – Updated to CrashPlan 4.6.0, and improved support for Code 42 pushed updates

- 0037 21/Jan/16 – Updated to CrashPlan 4.5.2

- 0036 14/Dec/15 – Updated to CrashPlan 4.5.0, separate firewall definitions for management client and for friends backup, added support for DS716+ and DS216play

- 0035 06/Nov/15 – Fixed the update to 4.4.1_59, new installs now listen for remote connections after second startup (was broken from 4.4), updated client install documentation with more file locations and added a link to a new Code42 support doc

  EITHER completely remove and reinstall the package (which will require a rescan of the entire backup set) OR alternatively please delete all except for one of the failed upgrade numbered subfolders in /var/packages/CrashPlan/target/upgrade before upgrading. There will be one folder for each time CrashPlan tried and failed to start since Code42 pushed the update.

- 0034 04/Oct/15 – Updated to CrashPlan 4.4.1, bundled newer JNA native libraries to match those from Code42, PLEASE READ UPDATED BLOG POST INSTRUCTIONS FOR CLIENT INSTALL this version introduced yet another requirement for the client

- 0033 12/Aug/15 – Fixed version 0032 client connection issue for fresh installs

- 0032 12/Jul/15 – Updated to CrashPlan 4.3, PLEASE READ UPDATED BLOG POST INSTRUCTIONS FOR CLIENT INSTALL this version introduced an extra requirement, changed update repair to use the chrisnelson.ca method, forced CrashPlan to prefer IPv4 over IPv6 bindings, removed some legacy version migration scripting, updated main blog post documentation

- 0031 20/May/15 – Updated to CrashPlan 4.2, cross compiled a newer cpio binary for some architectures which were segfaulting while unpacking main CrashPlan archive, added port 4242 to the firewall definition (friend backups), package is now signed with repository private key

- 0030 16/Feb/15 – Fixed show-stopping issue with version 0029 for systems with more than one volume

- 0029 21/Jan/15 – Updated to CrashPlan version 3.7.0, improved detection of temp folder (prevent use of /var/@tmp), added support for Annapurna Alpine AL514 CPU (armhf) in DS2015xs, added support for Marvell Armada 375 CPU (armhf) in DS215j, abandoned practical efforts to try to support Code42’s upgrade scripts, abandoned inotify support (realtime backup) on PowerPC after many failed attempts with self-built and pre-built jtux and jna libraries, back-merged older libffi support for old PowerPC binaries after it was removed in 0028 re-write

- 0028 22/Oct/14 – Substantial re-write:
  - Updated to CrashPlan version 3.6.4
  - DSM 5.0 or newer is now required
  - libjnidispatch.so taken from Debian JNA 3.2.7 package with dependency on newer libffi.so.6 (included in DSM 5.0)
  - jna-3.2.5.jar emptied of irrelevant CPU architecture libs to reduce size
  - Increased default max heap size from 512MB to 1GB on systems with more than 1GB RAM
  - Intel CPUs no longer need the awkward glibc version-faking shim to enable inotify support (for real-time backup)
  - Switched to using root account – no more adding account permissions for backup, package upgrades will no longer break this
  - DSM Firewall application definition added
  - Tested with DSM Task Scheduler to allow backups between certain times of day only, saving RAM when not in use
  - Daemon init script now uses a proper PID file instead of Code42’s unreliable method of using grep on the output of ps
  - Daemon init script can be run from the command line
  - Removal of bash binary dependency now Code42’s CrashPlanEngine script is no longer used
  - Removal of nice binary dependency, using BusyBox equivalent renice
  - Unified ARMv5 and ARMv7 external binary package (armle)
  - Added support for Mindspeed Comcerto 2000 CPU (comcerto2k – armhf) in DS414j
  - Added support for Intel Atom C2538 (avoton) CPU in DS415+
  - Added support to choose which version of CrashPlan PROe client to download, since some servers may still require legacy versions
  - Switched to .tar.xz compression for native binaries to reduce web hosting footprint

- 0027 20/Mar/14 – Fixed open file handle limit for very large backup sets (ulimit fix)

- 0026 16/Feb/14 – Updated all CrashPlan clients to version 3.6.3, improved handling of Java temp files

- 0025 30/Jan/14 – glibc version shim no longer used on Intel Synology models running DSM 5.0

- 0024 30/Jan/14 – Updated to CrashPlan PROe 3.6.1.4 and added support for PowerPC 2010 Synology models running DSM 5.0

- 0023 30/Jan/14 – Added support for Intel Atom Evansport and Armada XP CPUs in new DSx14 products

- 0022 10/Jun/13 – Updated all CrashPlan client versions to 3.5.3, compiled native binary dependencies to add support for Armada 370 CPU (DS213j), start-stop-status.sh now updates the new javaMemoryHeapMax value in my.service.xml to the value defined in syno_package.vars

- 0021 01/Mar/13 – Updated CrashPlan to version 3.5.2

- 0020 21/Jan/13 – Fixes for DSM 4.2

- 018 Updated CrashPlan PRO to version 3.4.1

- 017 Updated CrashPlan and CrashPlan PROe to version 3.4.1, and improved in-app update handling

- 016 Added support for Freescale QorIQ CPUs in some x13 series Synology models, and installer script now downloads native binaries separately to reduce repo hosting bandwidth, PowerQUICC PowerPC processors in previous Synology generations with older glibc versions are not supported

- 015 Added support for easy scheduling via cron – see updated Notes section

- 014 DSM 4.1 user profile permissions fix

- 013 implemented update handling for future automatic updates from Code 42, and incremented CrashPlanPRO client to release version 3.2.1

- 012 incremented CrashPlanPROe client to release version 3.3

- 011 minor fix to allow a wildcard on the cpio archive name inside the main installer package (to fix CP PROe client since Code 42 Software had amended the cpio file version to 3.2.1.2)

- 010 minor bug fix relating to daemon home directory path

- 009 rewrote the scripts to be even easier to maintain and unified as much as possible with my imminent CrashPlan PROe server package, fixed a timezone bug (tightened regex matching), moved the script-amending logic from installer.sh to start-stop-status.sh with it now applying to all .sh scripts each startup so perhaps updates from Code42 might work in future, if wget fails to fetch the installer from Code42 the installer will look for the file in the public shared folder

- 008 merged the 14 package scripts each (7 for ARM, 7 for Intel) for CP, CP PRO, & CP PROe – 42 scripts in total – down to just two! ARM & Intel are now supported by the same package, Intel synos now have working inotify support (Real-Time Backup) thanks to rwojo’s shim to pass the glibc version check, upgrade process now retains login, cache and log data (no more re-scanning), users can specify a persistent larger max heap size for very large backup sets

- 007 fixed a bug that broke CrashPlan if the Java folder moved (if you changed version)

- 006 installation now fails without User Home service enabled, fixed Daylight Saving Time support, automated replacing the ARM libffi.so symlink which is destroyed by DSM upgrades, stopped assuming the primary storage volume is /volume1, reset ownership on /var/lib/crashplan and the Friends backup location after installs and upgrades

- 005 added warning to restart daemon after 1st run, and improved upgrade process again

- 004 updated to CrashPlan 3.2.1 and improved package upgrade process, forced binding to 0.0.0.0 each startup

- 003 fixed ownership of /volume1/crashplan folder

- 002 updated to CrashPlan 3.2

- 001 30/Jan/12 – initial public release

Thank you, it seems to work perfectly.

I already have 400GB uploaded to my CrashPlan account. All 400GB is located on my DS209, but I used CrashPlan on my Mac Pro to upload it.

That way I had to:

1. keep one computer on to upload files

2. worry about the Wi-Fi connection on my MacBook. I don’t have the best connection all over the house, so from the kitchen I uploaded at 400 kbps, versus 850 kbps near my router.

Thanks!

I just adopted my old profile. It seems to work.

I’ll let you know!

Great, thanks for the feedback and glad it’s all worked out. I was going nuts yesterday trying to debug my scripts! Just couldn’t accept defeat :)

The adopting process seems to work great.

Here is a screenshot of the adopt process. It seems to take a day or so, but at the moment it is working at a fair speed, even though I only have a 1Mb upload connection.

The missing folders are the “old” folders. The ones at the top are the new ones.

http://tinypic.com/r/142v68z/5

I’ll report back when it is done…

I guess it must just be re-checksumming all the files, and then maybe re-encrypting them at the CrashPlan Central end with the new key the NAS is using. How’s the NAS CPU use?

Thanks for the package, loaded up nice and easy and your steps to connect via the desktop client worked perfectly

I hope you may be able to help with something. I have the package running and everything is peachy there; my NAS is backing up to the CrashPlan cloud.

What I then attempted to do is run CrashPlan on my desktop to back up files to my NAS, i.e. the backup chain is “folder A on desktop” > NAS > Cloud. The desktop client sees the NAS as an available “computer” and I can start the backup, but then both the desktop client and the NAS client report the following error:

“Destination unavailable – backup location is not accessible”

I have checked that the “crashplan” account has permissions on the backup directory, and I have also tried changing to an alternate backup location without success.

What is interesting is that the client says the connection has been up for 45 minutes, so it definitely seems to be connecting to the NAS, which makes it really look permission-related.

Any ideas?

Sorry, not really sure. The log which I displayed in the package’s Log tab is actually the activity history. You could also try looking at the two engine logs, which you’ll need to use an SSH session to see. They are:

/volume1/@appstore/CrashPlan/log/engine_output.log

/volume1/@appstore/CrashPlan/log/engine_error.log

I’m also not sure how the backup engine will cope with having a smaller than standard RAM allocation. I use a 256MB system (192MB for Java), and it could be that a 128MB syno (80MB for Java) is just not enough.

I encountered the same error but was able to fix it. Once I created the “crashplan” share and restarted the CrashPlan app on the NAS, it auto-created the “backupArchives” folder as documented above.

However, when I tried to use that as the path for sharing, I got the same error you did. I simply used the CrashPlan GUI to point the NAS to the root of “/volume1/crashplan”. Once I made this change I was able to connect to the NAS from other computers and begin backups with no errors.

My fix may not be the most graceful, and this can probably be fixed some other way. I am just happy to have a working solution and hope it helps others. Patters, many thanks for making this package.

Really pleased that you have created a package! Thanks for all your efforts.

It should make this so much easier to install in future.

Hi,

Great to see a package!

What if the Crashplan client gets an upgrade?

Will it be enough to just remove and re-install the package?

Best regards

Jeppe

Yes, that should be all that’s required, then you’d use the Adopt Computer option in the client.

Did any of you guys use the Intel package? I still have no idea if it works…

How often does CrashPlan push a client upgrade and how would we know that this has occurred so that we can go through the re-install + adopt process?

PS – This is awesome! thank you for sharing.

It seems to have been on 3.0.3 for a while now. I think I read somewhere that they might not instigate auto-updates of headless engine installs since they had a lot of support requests to deal with last time they tried that. When a new one comes out, let me know on here and I’ll have to update the package.

Dear,

The engine did not accept my login so I reinstalled it on the crashplan.

It then updated to version 3.15.2012 and stopped.

I cannot make it work again.

Can you help?

By the way thanks for the sharing and instructions, they are great!

Wow, this may be exactly what is tipping me back to buying a Synology. Since you are running the DS111, I guess I can then assume that this will work on the DS411 (since it is supposed to be the same processor, just with more memory). Does anyone know if it will run on the DS411j, despite the memory being less? I thought about getting the DS411+II but then decided it was too expensive for my taste considering I am already going to buy a mac mini server to replace my hackintosh. I was going to buy an external RAID box, but then it becomes convoluted since the choices for RAID boxes aren’t great… and the mac now has a thunderbolt connector which has not yet seen wide adoption.

Anyway… thanks for doing this! I personally think Synology and/or Crashplan should just partner on this… it makes for a killer home solution!

There’s a lot of cool stuff to run on these Synology products now, but RAM is the biggest limiting factor. You often have to choose which packages are active. I’d avoid the J series for that reason. I wish there was a DIMM slot in there, or at the very least some pads we could DIY solder more RAM onto…

I have it running on DS411.

Does disk hibernation still work?

I can’t say I’ve noticed, and I run Serviio which may affect that too, so perhaps someone else can comment.

Got it running on my 1511+

Install instructions were perfect – no issues.

14.9GB on its way to Crashplan right now!

Thanks so much!

Thanks for the comment – now I know the Intel build works :)

I don’t know if there have been any updates to break the critical links, or if I’m doing something very simple wrong.

I have a DS1511+ with DSM up to date (4.2) and I added Java SE for Embedded 6 (1.6.0_38-0017) per your package without incident, but when it comes time to install CrashPlan 3.5.2-0021, it tells me that “a shared folder called ‘public’ could not be found…” Obviously I created the shared folder using the control panel using lower case letters and set every privilege option to read/write (btw: java worked with the download saved there).

I appreciate any help, as this is very frustrating.

— Rob

Re-enable Windows File Sharing on the NAS and it should work.

Thank you for the help and quick reply. I disabled and re-enabled the windows file service from Control Panel…Win/Mac/NFS. Tried it several times with many reboots and no change in CrashPlan install behavior.

— Rob

Unfortunately, I still haven’t had any luck installing your package. I was wondering if you might have any other suggestions. I appreciate any help you could provide. Thank you.

— Rob

Can you post the result of running:

cat /usr/syno/etc/smb.conf

NAS> cat /usr/syno/etc/smb.conf

http://pastebin.com/E98XFWuy

That looks fine to me. Try running this (which is how my CrashPlan script locates the public folder):

cat /usr/syno/etc/smb.conf | sed -r '/\/public$/!d;s/^.*path=(\/volume[0-9]{1,4}\/public).*$/\1/'

http://pastebin.com/dc28rwSS

It doesn’t appear to do anything. No error report, no response.

I’ve seen similar behavior trying to install other packages. I’ll get to a certain point in a tutorial, and some steps just don’t seem to have any effect.

Thanks again for your patience. There’s probably something simple, but I’m too much of a Linux noob to really troubleshoot the problem. I guess I only know enough to get myself in trouble. So far, my failure record has included: 1.) manual install of CrashPlan, 2.) compile/install TVHeadend 3.4, 3.) compile/install HDHomeRun drivers, and 4.) trying to set up a SQL server to host a common XBMC library database for several clients.

Take care.

Hmm. There’s definitely something different about your NAS – can you try that same command but swap sed for /bin/sed

swapping “sed” with “/bin/sed” returns:

/volume1/public

My advice would be to undo the bootstrap you performed when you tried to manually install CrashPlan, since its tools like sed obviously don’t work in quite the same way as the system default ones. You can do that by running the bootstrap script again – it will offer an uninstall option:

http://forum.synology.com/wiki/index.php/Overview_on_modifying_the_Synology_Server,_bootstrap,_ipkg_etc#How_to_install_ipkg

Ok, So… I tried that, and upon executing the bootstrap script, it returned: “cd: line 5: can’t cd to bootstrap”

http://pastebin.com/JhAyvyay

BTW: my /root/.profile file has the “PATH=…” and “export PATH” lines commented out. Could that be why it can’t execute system commands?

Thanks

Highly likely yes. Can you pastebin your /root/.profile ?

http://pastebin.com/pUtm3we6

Ok – uncomment the ‘PATH=’ and ‘export PATH’ lines at the top. Restart your NAS, then uninstall and reinstall the Java package. That should fix the missing timezone part at the bottom (‘TZ=’). Then you should be ok.

Success!

Here’s what worked…

– I un-commented the “Path” and “export Path” lines in /root/.profile and /etc/profile .

— That allowed your search command to work in ssh, but the install package still couldn’t find the public folder.

— It also allowed me to uninstall the Bootstrap which I couldn’t previously do.

– I then uninstalled java SE, but when I went to reinstall it told me that it was still installed at /volume1/@appstore/java6 . Of course that folder didn’t exist, but I found that this path was being called out in the two profile files

— So, I commented out the java lines in those files, and got java to reinstall

– Lastly, your package worked perfectly, as designed, at last.

Thanks again for all your help. You’ve been incredibly patient.
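The thread above came down to `sed`: the plain command found on `PATH` (apparently one installed by the earlier ipkg bootstrap) produced nothing, while `/bin/sed` returned `/volume1/public`. A minimal sketch of the same lookup that pins `sed` by absolute path, so a different `sed` earlier in `PATH` cannot change the result. `find_public_share` is a hypothetical name; the `smb.conf` location is the one quoted in the thread, and the regex is copied unchanged from the command above.

```shell
#!/bin/sh
# Hypothetical helper: print the path of the 'public' shared folder, using
# the same sed expression as the command quoted above.
# Pin sed to /bin/sed so an optware/ipkg sed earlier in PATH is not used;
# fall back to a PATH lookup where /bin/sed does not exist.
SED=/bin/sed
[ -x "$SED" ] || SED=sed

find_public_share () {
  # optional argument: alternative smb.conf path (handy for testing)
  conf=${1:-/usr/syno/etc/smb.conf}
  # keep only lines ending in /public, then extract the /volumeN/public path
  "$SED" -r '/\/public$/!d;s/^.*path=(\/volume[0-9]{1,4}\/public).*$/\1/' "$conf"
}
```

Running `find_public_share` with no argument on the NAS should print something like `/volume1/public`; empty output means no matching `path=` line was found.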

@patters: Do you think you can make your packages available in spksrc ? https://github.com/SynoCommunity/spksrc

I understand that what you’re doing is mostly packaging and not cross compilation but spksrc aims to provide a unified way to build SPKs as well as SPK’s source code versioning.

Also, how have you handled Java’s licensing? Does its licence allow Java SPKs to be distributed?

Hi, I’ll take a look at that but it looks like a significant time investment to re-do everything so I don’t think it’s likely I’m afraid.

For Java, there shouldn’t be any licensing issue because I’m not distributing a single byte of the JRE, only scripts to install it. The user has to provide it, and to do so they must independently agree to the Oracle licence themselves.

OK, never mind, I think I’ll just look at your code and see what I can take from it that would fit in spksrc (with credits, of course).

In case you make other SPKs, please consider using spksrc for that. This is a very easy to use framework.

For example, to add a package: https://github.com/SynoCommunity/spksrc/commit/198120d9f433ebe9482056a5160caaadd5a4d099 (the PLIST is generated automatically but still requires some small manual changes)

To compile it, just run “cd cross/lame && make ARCH=88f6281” (everything is handled automatically, from toolchain download to built binary).

And a sample commit that adds a SPK : https://github.com/SynoCommunity/spksrc/commit/74febca4a171ea772f4823df518682fe768b7500

“cd spk/mpd && make ARCH=88f6281” automatically builds an installable SPK.

Don’t hesitate to contact me (email or GitHub) in case you wish to contribute :)

Thanks. Can I ask that you please don’t make alternate versions of the packages I’ve already done, unless of course I give up maintaining them in future, as that would get pretty confusing for people. As you can see, I’ve tended to focus on Java apps. It makes sense to keep these on the same repo as the Java spks themselves.

Agreed, why change anything? This works. How could it be simpler? You add patters’ URL to DSM and then the installation is just one click away.

So nice :)

And thanks

Great work Patters! I wish I had your skills… :)

Thanks for the guide. I’ve followed it and CrashPlan is running OK on my DS110j. My problem is I cannot connect to it using the desktop client on another machine. From your notes you should simply be able to change the IP address in the ui.config file; is this all you did? Did you have to set up the ssh tunnel too?

When I do that and start the desktop client – it never manages to connect to the sevice on the syno. I’ve validated that the service on the syno is listening on the default port.

Anything I’ve missed ?

Did you stop and restart the package at least once?

Hi patters, yes I did and tried rebooting too. I’m still confused re the ssh tunneling thing, do I need to do that to connect the desktop client to syno service to be able to configure it ? Or should I simply be able to change the IP address as per your guide ?

The tunnelling thing is unnecessarily complicated if you’re on the same network as the syno. You should simply be able to make the edit to that ui.properties file on the client. It is possible though, that the 80MB RAM allocation that is necessary on the J series NAS is just not enough (CrashPlan is designed to use 512MB). Perhaps someone else can comment if they have it running on a J series.
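For anyone unsure what that edit involves on a Linux client: the idea is to set serviceHost in ui.properties to the NAS’s IP address. Depending on the version, the line may already be there or commented out with a leading #, so the minimal sketch below handles both. The helper name, the example path and the example IP are hypothetical, and it assumes a sed that supports -i (GNU and BusyBox sed do):

```shell
#!/bin/sh
# Hypothetical helper: set KEY=VALUE in a Java-style .properties file.
# Assumes the key may be present already, possibly commented out with '#'.
set_ui_prop() {
  file="$1" key="$2" value="$3"
  if grep -q "^#*${key}=" "$file"; then
    # Uncomment and overwrite any existing line for this key
    sed -i "s|^#*${key}=.*|${key}=${value}|" "$file"
  else
    # Key not present at all: append it
    printf '%s=%s\n' "$key" "$value" >> "$file"
  fi
}

# Example (hypothetical install path and NAS address):
# set_ui_prop /usr/local/crashplan/conf/ui.properties serviceHost 192.168.1.10
```

Restart the desktop client afterwards so it rereads the file.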

Well, got it working finally, but I still had to use the ssh tunnelling method.

Without the ssh tunnel, this is what happens when I test with telnet from my Win 7 64-bit PC (where the desktop client is installed):

telnet ipadd-of-syno 4242 gives me “connection refused”

telnet ipadd-of-syno 4243 I do get a connection but with loads of strange chars of which I can make out only DHPublicKeyMessage

Would be nice to get it working without the ssh tunnel :-)
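For reference, the SSH tunnel method usually boils down to two pieces, which the sketch below prints rather than runs. It assumes the engine’s UI port on the NAS is 4243 (consistent with the telnet result above) and uses 4200 as an arbitrary free local port; the function name and the root login are assumptions:

```shell
#!/bin/sh
# Hypothetical helper: print the commands for the SSH tunnel method.
# Assumes the CrashPlan engine listens for the UI on port 4243 on the NAS.
tunnel_cmd() {
  nas="$1"                 # NAS hostname or IP address
  local_port="${2:-4200}"  # any free port on the desktop PC
  # Forward local_port on this PC to port 4243 on the NAS's loopback interface
  echo "ssh -L ${local_port}:localhost:4243 root@${nas}"
  # While the tunnel is open, the client's ui.properties needs this line
  echo "servicePort=${local_port}"
}

tunnel_cmd 192.168.1.10
```

Keep the SSH session open while the client runs. serviceHost in ui.properties should stay at 127.0.0.1, because the client is now talking to its own end of the tunnel.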

Not sure, other people don’t seem to have this issue, so does your PC run some kind of personal firewall?

Patters,

Does your package address the issue with non-US ASCII characters in the file structure – as noted in the Syno Wiki?

http://forum.synology.com/wiki/index.php/CrashPlan_Headless_Client

Thanks

Yep, that was all taken care of in my Java package right from the beginning. That’s why you have to fetch the syno toolchain for it.

Thanks for making this, looks like a great offsite/recovery solution. Plan to install it tonight on a DS2411+.

My Synology DS211J actually runs CrashPlan.

It uses about 60MB of RAM, with around 70% CPU load for the whole NAS.

In CrashPlan, CPU usage is set to 70% when the NAS is idle, otherwise 10%.

How about a calibre package for DSM?

Patters,

If I wanted to uninstall the crashplan that I have on my DS (installed manually via wiki instructions), and use your package instead – do you know how I would do that? I followed the steps not really knowing Linux or what I was doing, and I’m not sure how to remove it cleanly.

Thanks if you can provide any help.

Sure. Here’s the recipe for that:

cd /tmp
#we need to download the installer bundle to get the uninstall script
DL_FOLDER=http://download.crashplan.com/installs/linux/install/CrashPlan
DL_FILE=CrashPlan_3.0.3_Linux.tgz
wget "${DL_FOLDER}/${DL_FILE}"
gunzip "${DL_FILE}"
tar xvf CrashPlan_3.0.3_Linux.tar
rm CrashPlan_3.0.3_Linux.tar
cd CrashPlan-install
#move any friends' backup data to where the package will find it
[ -d /volume1/crashplan ] || mkdir /volume1/crashplan
[ -d /opt/crashplan/backupArchives ] && mv /opt/crashplan/backupArchives /volume1/crashplan
#fix up the uninstall script so it actually runs
sed -i "s%^#!/bin/bash%#!/opt/bin/bash%" uninstall.sh
./uninstall.sh -i /opt/crashplan
cd /tmp
rm -r CrashPlan-install
[ -e /usr/syno/etc/rc.d/S99crashplan.sh ] && rm /usr/syno/etc/rc.d/S99crashplan.sh
[ -e /etc/init.d/crashplan ] && rm /etc/init.d/crashplan

Patters,

That worked a treat. Thanks for walking me through that. The adoption process went seamlessly as well! All I had to do was chown my old friends’ archives.

Thanks again for the hard work.

Thanks for all the hard work! When I try to run this script to start clean, I get to ./uninstall.sh -i /opt/crashplan and I get the following error:

who: invalid option -- r

BusyBox v1.16.1 (2012-03-07 15:47:21 CST) multi-call binary.

Usage: who [-a]

Show who is logged on

Options:

-a show all

ERROR: cpio not found and is required for uninstall. Exiting

Any ideas?

Run this first, then try again:

export PATH=/opt/bin:/opt/sbin:$PATH

There are some other steps required though. Search this page from the top for the word “recipe”. I wish the author of that Synology wiki would (a) allow people to contribute, (b) demonstrate how to undo the steps, and (c) mention my package so people can make an informed choice before they set about making complex changes to their syno!
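The export above works by putting the Optware directories first on PATH, so tools such as cpio can be found. A small sketch for checking, before running uninstall.sh, that the commands it needs are actually reachable; the function name is hypothetical, and cpio and bash are simply the two tools this thread mentions:

```shell
#!/bin/sh
# Hypothetical helper: print each named command that is NOT on the current PATH.
missing_tools() {
  for tool in "$@"; do
    command -v "$tool" >/dev/null 2>&1 || echo "$tool"
  done
}

# Put the Optware directories first, as in the reply above
export PATH=/opt/bin:/opt/sbin:$PATH
missing=$(missing_tools cpio bash)
if [ -n "$missing" ]; then
  echo "Still missing: $missing" >&2
fi
```

No output means everything was found and it should be safe to retry the uninstall.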

Hi patters,

I have uninstalled my crashplan via your recipe, it works perfectly!

I just ran into an issue when moving the friends’ backup data.

All my computers back up to the Synology, and it has been like this for more than 6 months already, so you can imagine the amount of data I had in /opt/crashplan/backupArchives.

It was huge!

And the mv command did not work, since (as it does when moving between different filesystems) it first copies all the data to the destination and only then deletes the source. You can imagine what happened in my case… I did not have enough space to duplicate all the data.

So I started hunting for a way to do it more “cleverly”, and I found that the rsync command is much better for this.

So I used the following to move the data, and I suggest everyone does the same, since it not only moves and deletes, but also keeps all the file permissions and properties (including dates):

rsync -av --remove-source-files --ignore-existing --stats --progress /opt/crashplan/backupArchives/ /volume1/crashplan/backupArchives

Thanks,

Flavio Endo
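One thing to be aware of with the rsync command above: --remove-source-files only deletes files, so the directory tree under /opt/crashplan/backupArchives is left behind. A sketch for tidying it up afterwards, assuming a find that supports -empty and -delete (GNU find does; BusyBox builds may not); the function name is hypothetical:

```shell
#!/bin/sh
# Hypothetical helper: delete empty directories below DIR, keeping DIR itself.
# -delete implies depth-first traversal, so nested empty directories are
# removed from the bottom up; any directory still holding a file survives.
prune_empty_dirs() {
  find "$1" -mindepth 1 -type d -empty -delete
}

# Example, after the rsync has finished:
# prune_empty_dirs /opt/crashplan/backupArchives
```

Because only empty directories are removed, anything that still contains a file stays put and can be inspected by hand.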

So I’ve just copied and pasted the exact commands here, minus the # comments, and I’m getting -ash: ./uninstall.sh: not found

any ideas?

That was written a long time ago. I don’t really know how you may have installed CrashPlan manually, or even which version. I would ask the question on whatever guide you followed.

Damn, nice work. If you are looking for another challenge, you might take a look at making a package for SiriServer: http://www.ijailbreak.com/cydia/how-to-setup-siriserver-on-ubuntu/

Given the popularity of iDevices, that could give you the attention you deserve.

On my DS209 the serviceHost keeps resetting to 127.0.0.1. Do you have any idea why?

I use CrashPlan on an x86 Gentoo Linux server and it works. On the DS209 it seems like CrashPlan is overwriting my.service.xml when it starts.

Did you copy an existing config file over or anything like that? If you did you’d have to remember to reset ownership for the crashplan user:

chown -R crashplan /volume1/@appstore/CrashPlan

Take a look at the engine log files I mentioned in the last point of the Notes section of the post; they’re normally pretty explicit if there’s a problem.
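To see whether anything under the package folder is still owned by someone other than the crashplan user (for example after copying files in as root), something like the following sketch should list the offenders; the function name is hypothetical:

```shell
#!/bin/sh
# Hypothetical helper: list every path under DIR that is not owned by USER.
not_owned_by() {
  find "$1" ! -user "$2"
}

# Example: no output means the chown above covered everything
# not_owned_by /volume1/@appstore/CrashPlan crashplan
```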

Trying to install Crashplan on DS212. I have downloaded and installed the package and it tells me that the service is running. Also amended the ui.config file on the client to point to the IP address of the NAS.

When I try to connect, it tells me “Unable to connect to the backup engine, retry?”

I have stopped and restarted the service and even rebooted the NAS, but to no avail. Has anyone else managed to get it to work on this model?

Any help or advice would be greatly appreciated.

Can you look at the file /volume1/@appstore/CrashPlan/conf/my.service.xml and check the value inside the serviceHost tag? It should be 0.0.0.0, but someone else on here reported that under certain circumstances it’s being reset to 127.0.0.1 (which would prevent you connecting from another computer). You’ll need to connect via SSH to do this. If you’re unfamiliar with Linux, I recommend copying the file to your public share and then viewing it on your computer:

cp /volume1/@appstore/CrashPlan/conf/my.service.xml /volume1/public

Thank you for the reply, you were correct in that serviceHost was set to 127.0.0.1. I set it to 0.0.0.0 and copied the file back, but still no joy I’m afraid. There are no messages in the service log, apart from the one that says the service was started.

Any more ideas?

Take another look at the xml – CrashPlan may have set it back to how it was. Failing that, try connecting using the SSH tunnel method.
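To check and change the value directly from the SSH prompt, without copying the file around, a sketch along these lines should work. It assumes the serviceHost element sits on a single line in my.service.xml, that sed supports -i (BusyBox and GNU sed both do), and that you stop the package before editing, since (as reported in this thread) CrashPlan can rewrite this file itself. The function names are hypothetical:

```shell
#!/bin/sh
# Hypothetical helpers for the serviceHost setting in my.service.xml.
# Assumes the opening and closing tags sit on the same line.
get_service_host() {
  sed -n 's|.*<serviceHost>\([^<]*\)</serviceHost>.*|\1|p' "$1"
}

set_service_host() {
  # 0.0.0.0 = accept connections on all interfaces; 127.0.0.1 = local only
  sed -i "s|<serviceHost>[^<]*</serviceHost>|<serviceHost>$2</serviceHost>|" "$1"
}

# Example (stop the CrashPlan package in Package Center first):
# conf=/volume1/@appstore/CrashPlan/conf/my.service.xml
# get_service_host "$conf"
# set_service_host "$conf" 0.0.0.0
```

Run get_service_host again after starting the package to see whether the value survived.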

Rechecked the xml file and it was still OK, so tried the SSH tunnel method and bingo – connected perfectly!!!

Music and pics on their way to CrashPlan as I write this!!!

Thank you so much for your help. It is very much appreciated!!!!

New problem – after rebooting the NAS or stopping or restarting the service, the backup does not restart. It seems to lose all of the configuration.

When connecting the client, I am presented with the setup screen to either enter my name and email or login with my Crashplan account ID. After doing so, all the settings have been reset and I have to “adopt” my NAS in order for the backup to start.

Is there a way around this? Sorry, but I am a complete Linux novice, so no idea where to start!

Solved!!!

After reading the later posts, I tried enabling the User Home Service, as suggested. After that, I restarted the CrashPlan service and reconfigured the backup in the client program.

Subsequently, restarting the service or NAS automatically starts the backup again!!

Just wanted to pop on and say thanks. The package works with a few small hiccups during install (had to download JavaSE and GCC myself).

Otherwise it looks like it’s doing its business. Thanks :)

No probs – that’s by design though, I can’t redistribute the Java Runtime.

Another one with problems connecting from a remote client…

I changed my.service.xml manually to 0.0.0.0, and every time I start the service or restart the Synology it is automatically changed back to 127.0.0.1. If I go to /volume1/@appstore/CrashPlan/bin and execute ./CrashPlanEngine start, it works! I think it is a problem with the package, not with the binaries. I can see this in engine_output.log:

[02.04.12 15:12:53.330 INFO main com.backup42.service.CPService.main ] *************************************************************

[02.04.12 15:12:53.331 INFO main com.backup42.service.CPService.main ] STARTED CrashPlanService

[02.04.12 15:12:53.348 INFO main com.backup42.service.CPService.main ] CPVERSION = 3.0.3 - 1300223300091 (2011-03-15T21:08:20:091+0000)

[02.04.12 15:12:53.348 INFO main com.backup42.service.CPService.main ] LOCALE = English

[02.04.12 15:12:53.351 INFO main com.backup42.service.CPService.main ] ARGS = [ ]

[02.04.12 15:12:53.351 INFO main com.backup42.service.CPService.main ] *************************************************************

[02.04.12 15:12:53.638 INFO main com.backup42.service.CPService.start ] Adding shutdown hook.

[02.04.12 15:12:53.642 INFO main om.backup42.service.CPService.copyCustom] BEGIN Copy Custom, waitForCustom=false

[02.04.12 15:12:53.642 INFO main om.backup42.service.CPService.copyCustom] NOT waiting for custom skin to appear in custom or .Custom