UPDATE – CrashPlan For Home (green branding) was retired by Code 42 Software on 22/08/2017. See migration notes below to find out how to transfer to CrashPlan for Small Business on Synology at the special discounted rate.

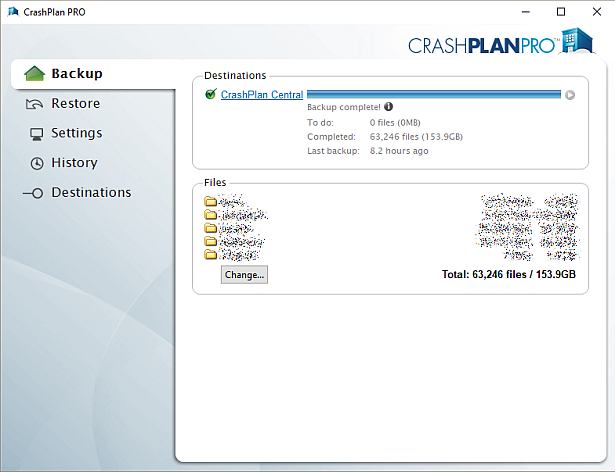

CrashPlan is a popular online backup solution which supports continuous syncing. With this your NAS can become even more resilient, particularly against the threat of ransomware.

There are now only two product versions:

- Small Business: CrashPlan PRO (blue branding). Unlimited cloud backup subscription, $10 per device per month. Reporting via Admin Console. No peer-to-peer backups.

- Enterprise: CrashPlan PROe (black branding). Cloud backup subscription typically billed by storage usage, also available from third parties.

The instructions and notes on this page apply to both versions of the Synology package.

CrashPlan is a Java application which can be difficult to install on a NAS. Way back in January 2012 I decided to simplify it into a Synology package, since I had already created several others. It has been through many versions since that time, as the changelog below shows. Although it used to work on Synology products with ARM and PowerPC CPUs, it unfortunately became Intel-only in October 2016 due to Code 42 Software adding a reliance on some proprietary libraries.

Licence compliance is another challenge – Code 42’s EULA prohibits redistribution. I had to make the Synology package use the regular CrashPlan for Linux download (after the end user agrees to the Code 42 EULA). I then had to write my own script to extract this archive and mimic the Code 42 installer behaviour, but without the interactive prompts of the original.

Synology Package Installation

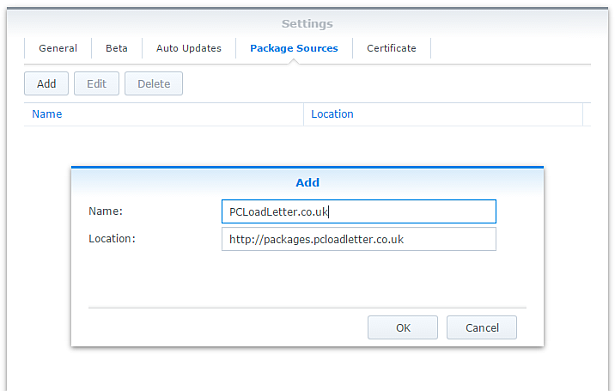

- In Synology DSM’s Package Center, click Settings and add my package repository:

- The repository will push its certificate automatically to the NAS, which is used to validate package integrity. Set the Trust Level to Synology Inc. and trusted publishers:

- Now browse the Community section in Package Center to install CrashPlan:

The repository only displays packages which are compatible with your specific model of NAS. If you don’t see CrashPlan in the list, then either your NAS model or your DSM version is not supported at this time. DSM 5.0 is the minimum supported version for this package, and an Intel CPU is required.

- Since CrashPlan is a Java application, it needs a Java Runtime Environment (JRE) to function. I recommend that you choose to have the package install a dedicated Java 8 runtime. For licensing reasons I cannot include Java with this package, so you will need to agree to the licence terms and download it yourself from Oracle’s website. The package expects to find this .tar.gz file in a shared folder called ‘public’. If you go ahead and try to install the package without it, the error message will indicate precisely which Java file you need for your system type, and it will provide a TinyURL link to the appropriate Oracle download page.

- To install CrashPlan PRO you will first need to log into the Admin Console and download the Linux App from the App Download section and also place this in the ‘public’ shared folder on your NAS.

- If you have a multi-bay NAS, use the Shared Folder control panel to create the shared folder called public (it must be all lower case). On single bay models this is created by default. Assign it with Read/Write privileges for everyone.

- If you have trouble getting the Java or CrashPlan PRO app files recognised by this package, try downloading them with Firefox. It seems to be the only web browser that doesn’t try to uncompress the files, or rename them without warning. I also suggest that you leave the Java file and the public folder present once you have installed the package, so that you won’t need to fetch this again to install future updates to the CrashPlan package.

- CrashPlan is installed in headless mode – backup engine only. This will be configured by a desktop client, but operates independently of it.

- The first time you start the CrashPlan package you will need to stop it and restart it before you can connect the client. This is because a config file that is only created on first run needs to be edited by one of my scripts. The engine is then configured to listen on all interfaces on the default port 4243.
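The browser renaming problem mentioned above can be illustrated with a short sketch. It assumes a hypothetical helper called find_java_archive, which is not part of the package. It checks for each of the four file name variants that the installer script accepts and prints the first one it finds.

```shell
# Sketch only: find_java_archive is a hypothetical helper, not part of the package.
# $1 = folder to search, $2 = archive name without any extension
# Prints the first matching file and returns 0, or returns 1 if none exists.
find_java_archive() {
    dir="$1"
    base="$2"
    # .tar.gz is the untouched download; the others are names that
    # browsers may leave behind after renaming or unpacking the file
    for suffix in .tar.gz .gz .tar .tar.tar; do
        if [ -f "${dir}/${base}${suffix}" ]; then
            printf '%s\n' "${dir}/${base}${suffix}"
            return 0
        fi
    done
    return 1
}
```

For example, find_java_archive /volume1/public jre-8u151-linux-x64 would print the matching file, assuming the ‘public’ shared folder lives on /volume1 on your NAS.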

CrashPlan Client Installation

- Once the CrashPlan engine is running on the NAS, you can manage it by installing CrashPlan on another computer, and by configuring it to connect to the NAS instance of the CrashPlan Engine.

- Make sure that you install the version of the CrashPlan client that matches the version running on the NAS. If the NAS version gets upgraded later, you will need to update your client computer too.

- The Linux CrashPlan PRO client must be downloaded from the Admin Console and placed in the ‘public’ folder on your NAS in order to successfully install the Synology package.

- By default the client is configured to connect to the CrashPlan engine running on the local computer. Run this command on your NAS from an SSH session:

echo `cat /var/lib/crashplan/.ui_info`

Note those are backticks not quotes. This will give you a port number (4243), followed by an authentication token, followed by the IP binding (0.0.0.0 means the server is listening for connections on all interfaces) e.g.:

4243,9ac9b642-ba26-4578-b705-124c6efc920b,0.0.0.0

port,--------------token-----------------,binding

Copy this token value and use it to replace the token in the equivalent config file on the computer that will run the CrashPlan client. The file is located here:

C:\ProgramData\CrashPlan\.ui_info (Windows)

“/Library/Application Support/CrashPlan/.ui_info” (Mac OS X installed for all users)

“~/Library/Application Support/CrashPlan/.ui_info” (Mac OS X installed for single user)

/var/lib/crashplan/.ui_info (Linux)

You will not be able to connect the client unless the client token matches the NAS token. On the client you also need to amend the IP address value after the token to match the Synology NAS IP address.

So, using the example above, your computer’s CrashPlan client config file would be edited to:

4243,9ac9b642-ba26-4578-b705-124c6efc920b,192.168.1.100

assuming that the Synology NAS has the IP 192.168.1.100
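The edit described above can also be sketched in shell. This is only an illustration: client_ui_info is a hypothetical helper name, and it simply builds the line that the client’s .ui_info file should contain from the line printed on the NAS and the NAS IP address.

```shell
# Sketch only: client_ui_info is a hypothetical helper name.
# $1 = the line printed on the NAS, in the form port,token,binding
# $2 = the IP address of the Synology NAS
client_ui_info() {
    nas_line="$1"
    nas_ip="$2"
    # keep the port and token from the NAS, replace the binding with the NAS IP
    port=$(printf '%s\n' "$nas_line" | cut -d, -f1)
    token=$(printf '%s\n' "$nas_line" | cut -d, -f2)
    printf '%s,%s,%s\n' "$port" "$token" "$nas_ip"
}
```

Running client_ui_info "4243,9ac9b642-ba26-4578-b705-124c6efc920b,0.0.0.0" 192.168.1.100 prints the edited line from the example above, which you would then save into the client’s .ui_info file.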

If it still won’t connect, check that the ServicePort value is set to 4243 in the following files:

C:\ProgramData\CrashPlan\conf\ui_(username).properties (Windows)

“/Library/Application Support/CrashPlan/ui.properties” (Mac OS X installed for all users)

“~/Library/Application Support/CrashPlan/ui.properties” (Mac OS X installed for single user)

/usr/local/crashplan/conf (Linux)

/var/lib/crashplan/.ui_info (Synology) – this value does change spontaneously if there’s a port conflict, e.g. you started two versions of the package concurrently (CrashPlan and CrashPlan PRO)

- As a result of the nightmarish complexity of recent product changes, Code42 has now published a support article with more detail on running headless systems, including config file locations on all supported operating systems, and for ‘all users’ versus single user installs etc.

- You should disable the CrashPlan service on your computer if you intend only to use the client. In Windows, open the Services section in Computer Management and stop the CrashPlan Backup Service. In the service Properties set the Startup Type to Manual. You can also disable the CrashPlan System Tray notification application by removing it from Task Manager > More Details > Start-up Tab (Windows 8/Windows 10) or the All Users Startup Start Menu folder (Windows 7).

To accomplish the same on Mac OS X, run the following commands one by one:

sudo launchctl unload /Library/LaunchDaemons/com.crashplan.engine.plist

sudo mv /Library/LaunchDaemons/com.crashplan.engine.plist /Library/LaunchDaemons/com.crashplan.engine.plist.bak

The CrashPlan menu bar application can be disabled in System Preferences > Users & Groups > Current User > Login Items

Migration from CrashPlan For Home to CrashPlan For Small Business (CrashPlan PRO)

- Leave the regular green branded CrashPlan 4.8.3 Synology package installed.

- Go through the online migration using the link in the email notification you received from Code 42 on 22/08/2017. This seems to trigger the CrashPlan client to begin an update to 4.9 which will fail. It will also migrate your account onto a CrashPlan PRO server. The web page is likely to stall on the Migrating step, but no matter. The process is meant to take you to the store but it seems to be quite flaky. If you see the store page with a $0.00 amount in the basket, this has correctly referred you for the introductory offer. Apparently the $9.99 price shown thereafter on that screen is a mistake, and I think the correct price of $2.50 is shown on a later screen in the process. Enter your credit card details and check out if you can. If not, continue.

- Log into the CrashPlan PRO Admin Console as per these instructions, and download the CrashPlan PRO 4.9 client for Linux, and the 4.9 client for your remote console computer. Ignore the red message in the bottom left of the Admin Console about registering, and do not sign up for the free trial. Preferably use Firefox for the Linux version download – most of the other web browsers will try to unpack the .tgz archive, which you do not want to happen.

- Configure the CrashPlan PRO 4.9 client on your computer to connect to your Syno as per the usual instructions on this blog post.

- Put the downloaded Linux CrashPlan PRO 4.9 client .tgz file in the ‘public’ shared folder on your NAS. The package will no longer download this automatically as it did in previous versions.

- From the Community section of DSM Package Center, install the CrashPlan PRO 4.9 package concurrently with your existing CrashPlan 4.8.3 Syno package.

- This will stop the CrashPlan package and automatically import its configuration. Notice that it will also back up your old CrashPlan .identity file and leave it in the ‘public’ shared folder, just in case something goes wrong.

- Start the CrashPlan PRO Synology package, and connect your CrashPlan PRO console from your computer.

- You should see your protected folders as usual. At first mine reported something like “insufficient device licences”, but the next time I started up it changed to “subscription expired”.

- Uninstall the CrashPlan 4.8.3 Synology package, since it is no longer required.

- At this point if the store referral didn’t work in the second step, you need to sign into the Admin Console. While signed in, navigate to this link which I was given by Code 42 support. If it works, you should see a store page with some blue font text and a $0.00 basket value. If it didn’t work you will get bounced to the Consumer Next Steps webpage: “Important Changes to CrashPlan for Home” – the one with the video of the CEO explaining the situation. I had to do this a few times before it worked. Once the store referral link worked and I had confirmed my payment details my CrashPlan PRO client immediately started working. Enjoy!

Notes

- The package uses the intact CrashPlan installer directly from Code 42 Software, following acceptance of its EULA. I am complying with the directive that no one redistributes it.

- The engine daemon script checks the amount of system RAM and scales the Java heap size appropriately (up to the default maximum of 512MB). This can be overridden in a persistent way if you are backing up large backup sets by editing /var/packages/CrashPlan/target/syno_package.vars. If you are considering buying a NAS purely to use CrashPlan and intend to back up more than a few hundred GB then I strongly advise buying one of the models with upgradeable RAM. Memory is very limited on the cheaper models. I have found that a 512MB heap was insufficient to back up more than 2TB of files on a Windows server and that was the situation many years ago. It kept restarting the backup engine every few minutes until I increased the heap to 1024MB. Many users of the package have found that they have to increase the heap size or CrashPlan will halt its activity. This can be mitigated by dividing your backup into several smaller backup sets which are scheduled to be protected at different times. Note that from package version 0041, using the dedicated JRE on a 64bit Intel NAS will allow a heap size greater than 4GB since the JRE is 64bit (requires DSM 6.0 in most cases).

- If you need to manage CrashPlan from a remote location, I suggest you do so using SSH tunnelling as per this support document.

- The package supports upgrading to future versions while preserving the machine identity, logs, login details, and cache. Upgrades can now take place without requiring a login from the client afterwards.

- If you remove the package completely and re-install it later, you can re-attach to previous backups. When you log in to the Desktop Client with your existing account after a re-install, you can select “adopt computer” to merge the records, and preserve your existing backups. I haven’t tested whether this also re-attaches links to friends’ CrashPlan computers and backup sets, though the latter does seem possible in the Friends section of the GUI. It’s probably a good idea to test that this survives a package reinstall before you start relying on it. Sometimes, particularly with CrashPlan PRO I think, the adopt option is not offered. In this case you can log into CrashPlan Central and retrieve your computer’s GUID. On the CrashPlan client, double-click on the logo in the top right and you’ll enter a command line mode. You can use the GUID command to change the system’s GUID to the one you just retrieved from your account.

- The log which is displayed in the package’s Log tab is actually the activity history. If you are trying to troubleshoot an issue you will need to use an SSH session to inspect these log files:

/var/packages/CrashPlan/target/log/engine_output.log

/var/packages/CrashPlan/target/log/engine_error.log

/var/packages/CrashPlan/target/log/app.log

- When CrashPlan downloads and attempts to run an automatic update, the script will most likely fail and stop the package. This is typically caused by syntax differences with the Synology versions of certain Linux shell commands (like rm, mv, or ps). The startup script will attempt to apply the published upgrade the next time the package is started.
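The persistent heap override mentioned in the notes above could be applied with something like the following sketch. The helper name set_max_heap is hypothetical; the file path and the USR_MAX_HEAP variable are the ones the package itself uses. The new value should only take effect the next time the package is started, since that is when the start script reads the file.

```shell
# Sketch only: set_max_heap is a hypothetical helper name.
# $1 = path to syno_package.vars, $2 = heap size such as 2048M
set_max_heap() {
    vars_file="$1"
    heap="$2"
    if grep -q '^#*USR_MAX_HEAP=' "$vars_file"; then
        # replace the existing line, whether or not it is commented out
        sed "s/^#*USR_MAX_HEAP=.*/USR_MAX_HEAP=${heap}/" "$vars_file" > "${vars_file}.tmp" \
            && mv "${vars_file}.tmp" "$vars_file"
    else
        # no such line yet, so append one
        echo "USR_MAX_HEAP=${heap}" >> "$vars_file"
    fi
}
```

On the NAS this might be run as set_max_heap /var/packages/CrashPlan/target/syno_package.vars 2048M, followed by a stop and start of the package in Package Center.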

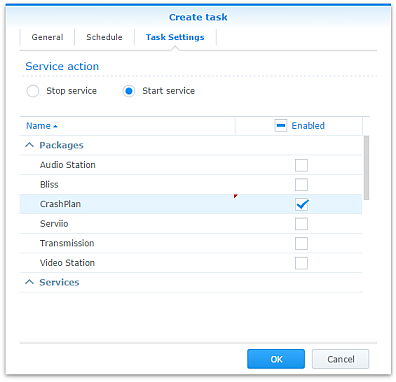

- Although CrashPlan’s activity can be scheduled within the application, in order to save RAM some users may wish to restrict running the CrashPlan engine to specific times of day using the Task Scheduler in DSM Control Panel:

Note that regardless of real-time backup, by default CrashPlan will scan the whole backup selection for changes at 3:00am. Include this time within your Task Scheduler time window or else CrashPlan will not capture file changes which occurred while it was inactive:

- If you decide to sign up for one of CrashPlan’s paid backup services as a result of my work on this, please consider donating using the PayPal button on the right of this page.
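For the SSH tunnelling approach to remote management mentioned in the notes above, the command would look roughly like the output of this sketch. The helper name tunnel_command, the login and the default local port of 4200 are all assumptions for illustration; only the engine port 4243 comes from this page. The helper just prints the command so that you can inspect it before running it.

```shell
# Sketch only: tunnel_command is a hypothetical helper; it prints the command
# rather than running it.
# $1 = SSH login on the NAS (user@host)
# $2 = local port to forward (assumed default 4200, to avoid clashing with
#      a CrashPlan engine that may already be using 4243 on your computer)
tunnel_command() {
    login="$1"
    local_port="${2:-4200}"
    # -N: do not run a remote command
    # -L: forward local_port on this computer to port 4243 on the NAS itself
    printf 'ssh -N -L %s:localhost:4243 %s\n' "$local_port" "$login"
}
```

With the tunnel open, I would expect the client’s .ui_info file to need the forwarded local port and the address 127.0.0.1 in place of the NAS IP, but treat that as an assumption and check it against the support document.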

Package scripts

For information, here are the package scripts so you can see what it’s going to do. You can get more information about how packages work by reading the Synology 3rd Party Developer Guide.

installer.sh

#!/bin/sh

#--------CRASHPLAN installer script

#--------package maintained at pcloadletter.co.uk

DOWNLOAD_PATH="http://download2.code42.com/installs/linux/install/${SYNOPKG_PKGNAME}"

CP_EXTRACTED_FOLDER="crashplan-install"

OLD_JNA_NEEDED="false"

[ "${SYNOPKG_PKGNAME}" == "CrashPlan" ] && DOWNLOAD_FILE="CrashPlan_4.8.3_Linux.tgz"

[ "${SYNOPKG_PKGNAME}" == "CrashPlanPRO" ] && DOWNLOAD_FILE="CrashPlanPRO_4.*_Linux.tgz"

if [ "${SYNOPKG_PKGNAME}" == "CrashPlanPROe" ]; then

CP_EXTRACTED_FOLDER="${SYNOPKG_PKGNAME}-install"

OLD_JNA_NEEDED="true"

[ "${WIZARD_VER_483}" == "true" ] && { CPPROE_VER="4.8.3"; CP_EXTRACTED_FOLDER="crashplan-install"; OLD_JNA_NEEDED="false"; }

[ "${WIZARD_VER_480}" == "true" ] && { CPPROE_VER="4.8.0"; CP_EXTRACTED_FOLDER="crashplan-install"; OLD_JNA_NEEDED="false"; }

[ "${WIZARD_VER_470}" == "true" ] && { CPPROE_VER="4.7.0"; CP_EXTRACTED_FOLDER="crashplan-install"; OLD_JNA_NEEDED="false"; }

[ "${WIZARD_VER_460}" == "true" ] && { CPPROE_VER="4.6.0"; CP_EXTRACTED_FOLDER="crashplan-install"; OLD_JNA_NEEDED="false"; }

[ "${WIZARD_VER_452}" == "true" ] && { CPPROE_VER="4.5.2"; CP_EXTRACTED_FOLDER="crashplan-install"; OLD_JNA_NEEDED="false"; }

[ "${WIZARD_VER_450}" == "true" ] && { CPPROE_VER="4.5.0"; CP_EXTRACTED_FOLDER="crashplan-install"; OLD_JNA_NEEDED="false"; }

[ "${WIZARD_VER_441}" == "true" ] && { CPPROE_VER="4.4.1"; CP_EXTRACTED_FOLDER="crashplan-install"; OLD_JNA_NEEDED="false"; }

[ "${WIZARD_VER_430}" == "true" ] && CPPROE_VER="4.3.0"

[ "${WIZARD_VER_420}" == "true" ] && CPPROE_VER="4.2.0"

[ "${WIZARD_VER_370}" == "true" ] && CPPROE_VER="3.7.0"

[ "${WIZARD_VER_364}" == "true" ] && CPPROE_VER="3.6.4"

[ "${WIZARD_VER_363}" == "true" ] && CPPROE_VER="3.6.3"

[ "${WIZARD_VER_3614}" == "true" ] && CPPROE_VER="3.6.1.4"

[ "${WIZARD_VER_353}" == "true" ] && CPPROE_VER="3.5.3"

[ "${WIZARD_VER_341}" == "true" ] && CPPROE_VER="3.4.1"

[ "${WIZARD_VER_33}" == "true" ] && CPPROE_VER="3.3"

DOWNLOAD_FILE="CrashPlanPROe_${CPPROE_VER}_Linux.tgz"

fi

DOWNLOAD_URL="${DOWNLOAD_PATH}/${DOWNLOAD_FILE}"

CPI_FILE="${SYNOPKG_PKGNAME}_*.cpi"

OPTDIR="${SYNOPKG_PKGDEST}"

VARS_FILE="${OPTDIR}/install.vars"

SYNO_CPU_ARCH="`uname -m`"

[ "${SYNO_CPU_ARCH}" == "x86_64" ] && SYNO_CPU_ARCH="i686"

[ "${SYNO_CPU_ARCH}" == "armv5tel" ] && SYNO_CPU_ARCH="armel"

[ "${SYNOPKG_DSM_ARCH}" == "armada375" ] && SYNO_CPU_ARCH="armv7l"

[ "${SYNOPKG_DSM_ARCH}" == "armada38x" ] && SYNO_CPU_ARCH="armhf"

[ "${SYNOPKG_DSM_ARCH}" == "comcerto2k" ] && SYNO_CPU_ARCH="armhf"

[ "${SYNOPKG_DSM_ARCH}" == "alpine" ] && SYNO_CPU_ARCH="armhf"

[ "${SYNOPKG_DSM_ARCH}" == "alpine4k" ] && SYNO_CPU_ARCH="armhf"

[ "${SYNOPKG_DSM_ARCH}" == "monaco" ] && SYNO_CPU_ARCH="armhf"

[ "${SYNOPKG_DSM_ARCH}" == "rtd1296" ] && SYNO_CPU_ARCH="armhf"

NATIVE_BINS_URL="http://packages.pcloadletter.co.uk/downloads/crashplan-native-${SYNO_CPU_ARCH}.tar.xz"

NATIVE_BINS_FILE="`echo ${NATIVE_BINS_URL} | sed -r "s%^.*/(.*)%\1%"`"

OLD_JNA_URL="http://packages.pcloadletter.co.uk/downloads/crashplan-native-old-${SYNO_CPU_ARCH}.tar.xz"

OLD_JNA_FILE="`echo ${OLD_JNA_URL} | sed -r "s%^.*/(.*)%\1%"`"

INSTALL_FILES="${DOWNLOAD_URL} ${NATIVE_BINS_URL}"

[ "${OLD_JNA_NEEDED}" == "true" ] && INSTALL_FILES="${INSTALL_FILES} ${OLD_JNA_URL}"

TEMP_FOLDER="`find / -maxdepth 2 -path '/volume?/@tmp' | head -n 1`"

#the Manifest folder is where friends' backup data is stored

#we set it outside the app folder so it persists after a package uninstall

MANIFEST_FOLDER="/`echo $TEMP_FOLDER | cut -f2 -d'/'`/crashplan"

LOG_FILE="${SYNOPKG_PKGDEST}/log/history.log.0"

UPGRADE_FILES="syno_package.vars conf/my.service.xml conf/service.login conf/service.model"

UPGRADE_FOLDERS="log cache"

PUBLIC_FOLDER="`synoshare --get public | sed -r "/Path/!d;s/^.*\[(.*)\].*$/\1/"`"

#dedicated JRE section

if [ "${WIZARD_JRE_CP}" == "true" ]; then

DOWNLOAD_URL="http://tinyurl.com/javaembed"

EXTRACTED_FOLDER="ejdk1.8.0_151"

#detect systems capable of running 64bit JRE which can address more than 4GB of RAM

[ "${SYNOPKG_DSM_ARCH}" == "x64" ] && SYNO_CPU_ARCH="x64"

[ "`uname -m`" == "x86_64" ] && [ ${SYNOPKG_DSM_VERSION_MAJOR} -ge 6 ] && SYNO_CPU_ARCH="x64"

if [ "${SYNO_CPU_ARCH}" == "armel" ]; then

JAVA_BINARY="ejdk-8u151-linux-arm-sflt.tar.gz"

JAVA_BUILD="ARMv5/ARMv6/ARMv7 Linux - SoftFP ABI, Little Endian 2"

elif [ "${SYNO_CPU_ARCH}" == "armv7l" ]; then

JAVA_BINARY="ejdk-8u151-linux-arm-sflt.tar.gz"

JAVA_BUILD="ARMv5/ARMv6/ARMv7 Linux - SoftFP ABI, Little Endian 2"

elif [ "${SYNO_CPU_ARCH}" == "armhf" ]; then

JAVA_BINARY="ejdk-8u151-linux-armv6-vfp-hflt.tar.gz"

JAVA_BUILD="ARMv6/ARMv7 Linux - VFP, HardFP ABI, Little Endian 1"

elif [ "${SYNO_CPU_ARCH}" == "ppc" ]; then

#Oracle have discontinued Java 8 for PowerPC after update 6

JAVA_BINARY="ejdk-8u6-fcs-b23-linux-ppc-e500v2-12_jun_2014.tar.gz"

JAVA_BUILD="Power Architecture Linux - Headless - e500v2 with double-precision SPE Floating Point Unit"

EXTRACTED_FOLDER="ejdk1.8.0_06"

DOWNLOAD_URL="http://tinyurl.com/java8ppc"

elif [ "${SYNO_CPU_ARCH}" == "i686" ]; then

JAVA_BINARY="ejdk-8u151-linux-i586.tar.gz"

JAVA_BUILD="x86 Linux Small Footprint - Headless"

elif [ "${SYNO_CPU_ARCH}" == "x64" ]; then

JAVA_BINARY="jre-8u151-linux-x64.tar.gz"

JAVA_BUILD="Linux x64"

EXTRACTED_FOLDER="jre1.8.0_151"

DOWNLOAD_URL="http://tinyurl.com/java8x64"

fi

fi

JAVA_BINARY=`echo ${JAVA_BINARY} | cut -f1 -d'.'`

source /etc/profile

pre_checks ()

{

#These checks are called from preinst and from preupgrade functions to prevent failures resulting in a partially upgraded package

if [ "${WIZARD_JRE_CP}" == "true" ]; then

#abort if the 'public' shared folder is missing

if ! synoshare --get public > /dev/null; then

echo "A shared folder called 'public' could not be found - note this name is case-sensitive. " >> $SYNOPKG_TEMP_LOGFILE

echo "Please create this using the Shared Folder DSM Control Panel and try again." >> $SYNOPKG_TEMP_LOGFILE

exit 1

fi

JAVA_BINARY_FOUND=

[ -f ${PUBLIC_FOLDER}/${JAVA_BINARY}.tar.gz ] && JAVA_BINARY_FOUND=true

[ -f ${PUBLIC_FOLDER}/${JAVA_BINARY}.tar ] && JAVA_BINARY_FOUND=true

[ -f ${PUBLIC_FOLDER}/${JAVA_BINARY}.tar.tar ] && JAVA_BINARY_FOUND=true

[ -f ${PUBLIC_FOLDER}/${JAVA_BINARY}.gz ] && JAVA_BINARY_FOUND=true

if [ -z ${JAVA_BINARY_FOUND} ]; then

echo "Java binary bundle not found. " >> $SYNOPKG_TEMP_LOGFILE

echo "I was expecting the file ${PUBLIC_FOLDER}/${JAVA_BINARY}.tar.gz. " >> $SYNOPKG_TEMP_LOGFILE

echo "Please agree to the Oracle licence at ${DOWNLOAD_URL}, then download the '${JAVA_BUILD}' package" >> $SYNOPKG_TEMP_LOGFILE

echo "and place it in the 'public' shared folder on your NAS. This download cannot be automated even if " >> $SYNOPKG_TEMP_LOGFILE

echo "displaying a package EULA could potentially cover the legal aspect, because files hosted on Oracle's " >> $SYNOPKG_TEMP_LOGFILE

echo "server are protected by a session cookie requiring a JavaScript enabled browser." >> $SYNOPKG_TEMP_LOGFILE

exit 1

fi

else

if [ -z ${JAVA_HOME} ]; then

echo "Java is not installed or not properly configured. JAVA_HOME is not defined. " >> $SYNOPKG_TEMP_LOGFILE

echo "Download and install the Java Synology package from http://wp.me/pVshC-z5" >> $SYNOPKG_TEMP_LOGFILE

exit 1

fi

if [ ! -f ${JAVA_HOME}/bin/java ]; then

echo "Java is not installed or not properly configured. The Java binary could not be located. " >> $SYNOPKG_TEMP_LOGFILE

echo "Download and install the Java Synology package from http://wp.me/pVshC-z5" >> $SYNOPKG_TEMP_LOGFILE

exit 1

fi

if [ "${WIZARD_JRE_SYS}" == "true" ]; then

JAVA_VER=`java -version 2>&1 | sed -r "/^.* version/!d;s/^.* version \"[0-9]\.([0-9]).*$/\1/"`

if [ ${JAVA_VER} -lt 8 ]; then

echo "This version of CrashPlan requires Java 8 or newer. Please update your Java package. " >> $SYNOPKG_TEMP_LOGFILE

exit 1

fi

fi

fi

}

preinst ()

{

pre_checks

cd ${TEMP_FOLDER}

for WGET_URL in ${INSTALL_FILES}

do

WGET_FILENAME="`echo ${WGET_URL} | sed -r "s%^.*/(.*)%\1%"`"

[ -f ${TEMP_FOLDER}/${WGET_FILENAME} ] && rm ${TEMP_FOLDER}/${WGET_FILENAME}

wget ${WGET_URL}

if [[ $? != 0 ]]; then

if [ -d ${PUBLIC_FOLDER} ] && [ -f ${PUBLIC_FOLDER}/${WGET_FILENAME} ]; then

cp ${PUBLIC_FOLDER}/${WGET_FILENAME} ${TEMP_FOLDER}

else

echo "There was a problem downloading ${WGET_FILENAME} from the official download link, " >> $SYNOPKG_TEMP_LOGFILE

echo "which was \"${WGET_URL}\" " >> $SYNOPKG_TEMP_LOGFILE

echo "Alternatively, you may download this file manually and place it in the 'public' shared folder. " >> $SYNOPKG_TEMP_LOGFILE

exit 1

fi

fi

done

exit 0

}

postinst ()

{

if [ "${WIZARD_JRE_CP}" == "true" ]; then

#extract Java (Web browsers love to interfere with .tar.gz files)

cd ${PUBLIC_FOLDER}

if [ -f ${JAVA_BINARY}.tar.gz ]; then

#Firefox seems to be the only browser that leaves it alone

tar xzf ${JAVA_BINARY}.tar.gz

elif [ -f ${JAVA_BINARY}.gz ]; then

#Chrome

tar xzf ${JAVA_BINARY}.gz

elif [ -f ${JAVA_BINARY}.tar ]; then

#Safari

tar xf ${JAVA_BINARY}.tar

elif [ -f ${JAVA_BINARY}.tar.tar ]; then

#Internet Explorer

tar xzf ${JAVA_BINARY}.tar.tar

fi

mv ${EXTRACTED_FOLDER} ${SYNOPKG_PKGDEST}/jre-syno

JRE_PATH="`find ${OPTDIR}/jre-syno/ -name jre`"

[ -z ${JRE_PATH} ] && JRE_PATH=${OPTDIR}/jre-syno

#change owner of folder tree

chown -R root:root ${SYNOPKG_PKGDEST}

fi

#extract CPU-specific additional binaries

mkdir ${SYNOPKG_PKGDEST}/bin

cd ${SYNOPKG_PKGDEST}/bin

tar xJf ${TEMP_FOLDER}/${NATIVE_BINS_FILE} && rm ${TEMP_FOLDER}/${NATIVE_BINS_FILE}

[ "${OLD_JNA_NEEDED}" == "true" ] && tar xJf ${TEMP_FOLDER}/${OLD_JNA_FILE} && rm ${TEMP_FOLDER}/${OLD_JNA_FILE}

#extract main archive

cd ${TEMP_FOLDER}

tar xzf ${TEMP_FOLDER}/${DOWNLOAD_FILE} && rm ${TEMP_FOLDER}/${DOWNLOAD_FILE}

#extract cpio archive

cd ${SYNOPKG_PKGDEST}

cat "${TEMP_FOLDER}/${CP_EXTRACTED_FOLDER}"/${CPI_FILE} | gzip -d -c - | ${SYNOPKG_PKGDEST}/bin/cpio -i --no-preserve-owner

echo "#uncomment to expand Java max heap size beyond prescribed value (will survive upgrades)" > ${SYNOPKG_PKGDEST}/syno_package.vars

echo "#you probably only want more than the recommended 1024M if you're backing up extremely large volumes of files" >> ${SYNOPKG_PKGDEST}/syno_package.vars

echo "#USR_MAX_HEAP=1024M" >> ${SYNOPKG_PKGDEST}/syno_package.vars

echo >> ${SYNOPKG_PKGDEST}/syno_package.vars

cp ${TEMP_FOLDER}/${CP_EXTRACTED_FOLDER}/scripts/CrashPlanEngine ${OPTDIR}/bin

cp ${TEMP_FOLDER}/${CP_EXTRACTED_FOLDER}/scripts/run.conf ${OPTDIR}/bin

mkdir -p ${MANIFEST_FOLDER}/backupArchives

#save install variables which Crashplan expects its own installer script to create

echo TARGETDIR=${SYNOPKG_PKGDEST} > ${VARS_FILE}

echo BINSDIR=/bin >> ${VARS_FILE}

echo MANIFESTDIR=${MANIFEST_FOLDER}/backupArchives >> ${VARS_FILE}

#leave these ones out which should help upgrades from Code42 to work (based on examining an upgrade script)

#echo INITDIR=/etc/init.d >> ${VARS_FILE}

#echo RUNLVLDIR=/usr/syno/etc/rc.d >> ${VARS_FILE}

echo INSTALLDATE=`date +%Y%m%d` >> ${VARS_FILE}

[ "${WIZARD_JRE_CP}" == "true" ] && echo JAVACOMMON=${JRE_PATH}/bin/java >> ${VARS_FILE}

[ "${WIZARD_JRE_SYS}" == "true" ] && echo JAVACOMMON=\${JAVA_HOME}/bin/java >> ${VARS_FILE}

cat ${TEMP_FOLDER}/${CP_EXTRACTED_FOLDER}/install.defaults >> ${VARS_FILE}

#remove temp files

rm -r ${TEMP_FOLDER}/${CP_EXTRACTED_FOLDER}

#add firewall config

/usr/syno/bin/servicetool --install-configure-file --package /var/packages/${SYNOPKG_PKGNAME}/scripts/${SYNOPKG_PKGNAME}.sc > /dev/null

#amend CrashPlanPROe client version

[ "${SYNOPKG_PKGNAME}" == "CrashPlanPROe" ] && sed -i -r "s/^version=\".*(-.*$)/version=\"${CPPROE_VER}\1/" /var/packages/${SYNOPKG_PKGNAME}/INFO

#are we transitioning an existing CrashPlan account to CrashPlan For Small Business?

if [ "${SYNOPKG_PKGNAME}" == "CrashPlanPRO" ]; then

if [ -e /var/packages/CrashPlan/scripts/start-stop-status ]; then

/var/packages/CrashPlan/scripts/start-stop-status stop

cp /var/lib/crashplan/.identity ${PUBLIC_FOLDER}/crashplan-identity.bak

cp -R /var/packages/CrashPlan/target/conf/ ${OPTDIR}/

fi

fi

exit 0

}

preuninst ()

{

`dirname $0`/start-stop-status stop

exit 0

}

postuninst ()

{

if [ -f ${SYNOPKG_PKGDEST}/syno_package.vars ]; then

source ${SYNOPKG_PKGDEST}/syno_package.vars

fi

[ -e ${OPTDIR}/lib/libffi.so.5 ] && rm ${OPTDIR}/lib/libffi.so.5

#delete symlink if it no longer resolves - PowerPC only

if [ ! -e /lib/libffi.so.5 ]; then

[ -L /lib/libffi.so.5 ] && rm /lib/libffi.so.5

fi

#remove firewall config

if [ "${SYNOPKG_PKG_STATUS}" == "UNINSTALL" ]; then

/usr/syno/bin/servicetool --remove-configure-file --package ${SYNOPKG_PKGNAME}.sc > /dev/null

fi

exit 0

}

preupgrade ()

{

`dirname $0`/start-stop-status stop

pre_checks

#if identity exists back up config

if [ -f /var/lib/crashplan/.identity ]; then

mkdir -p ${SYNOPKG_PKGDEST}/../${SYNOPKG_PKGNAME}_data_mig/conf

for FILE_TO_MIGRATE in ${UPGRADE_FILES}; do

if [ -f ${OPTDIR}/${FILE_TO_MIGRATE} ]; then

cp ${OPTDIR}/${FILE_TO_MIGRATE} ${SYNOPKG_PKGDEST}/../${SYNOPKG_PKGNAME}_data_mig/${FILE_TO_MIGRATE}

fi

done

for FOLDER_TO_MIGRATE in ${UPGRADE_FOLDERS}; do

if [ -d ${OPTDIR}/${FOLDER_TO_MIGRATE} ]; then

mv ${OPTDIR}/${FOLDER_TO_MIGRATE} ${SYNOPKG_PKGDEST}/../${SYNOPKG_PKGNAME}_data_mig

fi

done

fi

exit 0

}

postupgrade ()

{

#use the migrated identity and config data from the previous version

if [ -f ${SYNOPKG_PKGDEST}/../${SYNOPKG_PKGNAME}_data_mig/conf/my.service.xml ]; then

for FILE_TO_MIGRATE in ${UPGRADE_FILES}; do

if [ -f ${SYNOPKG_PKGDEST}/../${SYNOPKG_PKGNAME}_data_mig/${FILE_TO_MIGRATE} ]; then

mv ${SYNOPKG_PKGDEST}/../${SYNOPKG_PKGNAME}_data_mig/${FILE_TO_MIGRATE} ${OPTDIR}/${FILE_TO_MIGRATE}

fi

done

for FOLDER_TO_MIGRATE in ${UPGRADE_FOLDERS}; do

if [ -d ${SYNOPKG_PKGDEST}/../${SYNOPKG_PKGNAME}_data_mig/${FOLDER_TO_MIGRATE} ]; then

mv ${SYNOPKG_PKGDEST}/../${SYNOPKG_PKGNAME}_data_mig/${FOLDER_TO_MIGRATE} ${OPTDIR}

fi

done

rmdir ${SYNOPKG_PKGDEST}/../${SYNOPKG_PKGNAME}_data_mig/conf

rmdir ${SYNOPKG_PKGDEST}/../${SYNOPKG_PKGNAME}_data_mig

#make CrashPlan log entry

TIMESTAMP="`date "+%D %I:%M%p"`"

echo "I ${TIMESTAMP} Synology Package Center updated ${SYNOPKG_PKGNAME} to version ${SYNOPKG_PKGVER}" >> ${LOG_FILE}

fi

exit 0

}
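The installer script above derives each local file name from its download URL with a sed expression. As a sketch of the same idea, shell parameter expansion gives an equivalent result without calling sed. The helper name url_basename is hypothetical and is not used by the package.

```shell
# Sketch only: url_basename is a hypothetical helper name.
# $1 = a URL or path; prints everything after the final "/"
url_basename() {
    # ${1##*/} strips the longest leading match of "*/" from the argument
    printf '%s\n' "${1##*/}"
}
```

For instance, url_basename "http://packages.pcloadletter.co.uk/downloads/crashplan-native-i686.tar.xz" prints crashplan-native-i686.tar.xz, which is the value the script stores in NATIVE_BINS_FILE on an Intel NAS.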

start-stop-status.sh

#!/bin/sh

#--------CRASHPLAN start-stop-status script

#--------package maintained at pcloadletter.co.uk

TEMP_FOLDER="`find / -maxdepth 2 -path '/volume?/@tmp' | head -n 1`"

MANIFEST_FOLDER="/`echo $TEMP_FOLDER | cut -f2 -d'/'`/crashplan"

ENGINE_CFG="run.conf"

PKG_FOLDER="`dirname $0 | cut -f1-4 -d'/'`"

DNAME="`dirname $0 | cut -f4 -d'/'`"

OPTDIR="${PKG_FOLDER}/target"

PID_FILE="${OPTDIR}/${DNAME}.pid"

DLOG="${OPTDIR}/log/history.log.0"

CFG_PARAM="SRV_JAVA_OPTS"

JAVA_MIN_HEAP=`grep "^${CFG_PARAM}=" "${OPTDIR}/bin/${ENGINE_CFG}" | sed -r "s/^.*-Xms([0-9]+)[Mm] .*$/\1/"`

SYNO_CPU_ARCH="`uname -m`"

TIMESTAMP="`date "+%D %I:%M%p"`"

FULL_CP="${OPTDIR}/lib/com.backup42.desktop.jar:${OPTDIR}/lang"

source ${OPTDIR}/install.vars

source /etc/profile

source /root/.profile

start_daemon ()

{

#check persistent variables from syno_package.vars

USR_MAX_HEAP=0

if [ -f ${OPTDIR}/syno_package.vars ]; then

source ${OPTDIR}/syno_package.vars

fi

USR_MAX_HEAP=`echo $USR_MAX_HEAP | sed -e "s/[mM]//"`

#do we need to restore the identity file - has a DSM upgrade scrubbed /var/lib/crashplan?

if [ ! -e /var/lib/crashplan ]; then

mkdir /var/lib/crashplan

[ -e ${OPTDIR}/conf/var-backup/.identity ] && cp ${OPTDIR}/conf/var-backup/.identity /var/lib/crashplan/

fi

#fix up some of the binary paths and fix some command syntax for busybox

#moved this to start-stop-status.sh from installer.sh because Code42 push updates and these

#new scripts will need this treatment too

find ${OPTDIR}/ -name "*.sh" | while IFS="" read -r FILE_TO_EDIT; do

if [ -e ${FILE_TO_EDIT} ]; then

#this list of substitutions will probably need expanding as new CrashPlan updates are released

sed -i "s%^#!/bin/bash%#!/bin/sh%" "${FILE_TO_EDIT}"

sed -i -r "s%(^\s*)(/bin/cpio |cpio ) %\1/${OPTDIR}/bin/cpio %" "${FILE_TO_EDIT}"

sed -i -r "s%(^\s*)(/bin/ps|ps) [^w][^\|]*\|%\1/bin/ps w \|%" "${FILE_TO_EDIT}"

sed -i -r "s%\`ps [^w][^\|]*\|%\`ps w \|%" "${FILE_TO_EDIT}"

sed -i -r "s%^ps [^w][^\|]*\|%ps w \|%" "${FILE_TO_EDIT}"

sed -i "s/rm -fv/rm -f/" "${FILE_TO_EDIT}"

sed -i "s/mv -fv/mv -f/" "${FILE_TO_EDIT}"

fi

done

#use this daemon init script rather than the unreliable Code42 stock one which greps the ps output

sed -i "s%^ENGINE_SCRIPT=.*$%ENGINE_SCRIPT=$0%" ${OPTDIR}/bin/restartLinux.sh

#any downloaded upgrade script will usually have failed despite the above changes

#so ignore the script and explicitly extract the new java code using the chrisnelson.ca method

#thanks to Jeff Bingham for tweaks

UPGRADE_JAR=`find ${OPTDIR}/upgrade -maxdepth 1 -name "*.jar" | tail -1`

if [ -n "${UPGRADE_JAR}" ]; then

rm ${OPTDIR}/*.pid > /dev/null

#make CrashPlan log entry

echo "I ${TIMESTAMP} Synology extracting upgrade from ${UPGRADE_JAR}" >> ${DLOG}

#derive the version from the jar found above, so that the extraction below reads the same file
UPGRADE_VER=`basename "${UPGRADE_JAR}" .jar`

#DSM 6.0 no longer includes unzip, use 7z instead

unzip -o ${OPTDIR}/upgrade/${UPGRADE_VER}.jar "*.jar" -d ${OPTDIR}/lib/ || 7z e -y ${OPTDIR}/upgrade/${UPGRADE_VER}.jar "*.jar" -o${OPTDIR}/lib/ > /dev/null

unzip -o ${OPTDIR}/upgrade/${UPGRADE_VER}.jar "lang/*" -d ${OPTDIR} || 7z e -y ${OPTDIR}/upgrade/${UPGRADE_VER}.jar "lang/*" -o${OPTDIR} > /dev/null

mv ${UPGRADE_JAR} ${TEMP_FOLDER}/ > /dev/null

exec $0

fi

#updates may also overwrite our native binaries

[ -e ${OPTDIR}/bin/libffi.so.5 ] && cp -f ${OPTDIR}/bin/libffi.so.5 ${OPTDIR}/lib/

[ -e ${OPTDIR}/bin/libjtux.so ] && cp -f ${OPTDIR}/bin/libjtux.so ${OPTDIR}/

[ -e ${OPTDIR}/bin/jna-3.2.5.jar ] && cp -f ${OPTDIR}/bin/jna-3.2.5.jar ${OPTDIR}/lib/

if [ -e ${OPTDIR}/bin/jna.jar ] && [ -e ${OPTDIR}/lib/jna.jar ]; then

cp -f ${OPTDIR}/bin/jna.jar ${OPTDIR}/lib/

fi

#create or repair libffi.so.5 symlink if a DSM upgrade has removed it - PowerPC only

if [ -e ${OPTDIR}/lib/libffi.so.5 ]; then

if [ ! -e /lib/libffi.so.5 ]; then

#if it doesn't exist, but is still a link then it's a broken link and should be deleted first

[ -L /lib/libffi.so.5 ] && rm /lib/libffi.so.5

ln -s ${OPTDIR}/lib/libffi.so.5 /lib/libffi.so.5

fi

fi

#set appropriate Java max heap size

RAM=$((`free | grep Mem: | sed -e "s/^ *Mem: *\([0-9]*\).*$/\1/"`/1024))

if [ $RAM -le 128 ]; then

JAVA_MAX_HEAP=80

elif [ $RAM -le 256 ]; then

JAVA_MAX_HEAP=192

elif [ $RAM -le 512 ]; then

JAVA_MAX_HEAP=384

elif [ $RAM -le 1024 ]; then

JAVA_MAX_HEAP=512

elif [ $RAM -gt 1024 ]; then

JAVA_MAX_HEAP=1024

fi

if [ $USR_MAX_HEAP -gt $JAVA_MAX_HEAP ]; then

JAVA_MAX_HEAP=${USR_MAX_HEAP}

fi

if [ $JAVA_MAX_HEAP -lt $JAVA_MIN_HEAP ]; then

#can't have a max heap lower than min heap (ARM low RAM systems)

JAVA_MAX_HEAP=$JAVA_MIN_HEAP

fi

sed -i -r "s/(^${CFG_PARAM}=.*) -Xmx[0-9]+[mM] (.*$)/\1 -Xmx${JAVA_MAX_HEAP}m \2/" "${OPTDIR}/bin/${ENGINE_CFG}"

#disable the use of the x86-optimized external Fast MD5 library if running on ARM and PPC CPUs

#seems to be the default behaviour now but that may change again

[ "${SYNO_CPU_ARCH}" == "x86_64" ] && SYNO_CPU_ARCH="i686"

if [ "${SYNO_CPU_ARCH}" != "i686" ]; then

grep "^${CFG_PARAM}=.*c42\.native\.md5\.enabled" "${OPTDIR}/bin/${ENGINE_CFG}" > /dev/null \

|| sed -i -r "s/(^${CFG_PARAM}=\".*)\"$/\1 -Dc42.native.md5.enabled=false\"/" "${OPTDIR}/bin/${ENGINE_CFG}"

fi

#move the Java temp directory from the default of /tmp

grep "^${CFG_PARAM}=.*Djava\.io\.tmpdir" "${OPTDIR}/bin/${ENGINE_CFG}" > /dev/null \

|| sed -i -r "s%(^${CFG_PARAM}=\".*)\"$%\1 -Djava.io.tmpdir=${TEMP_FOLDER}\"%" "${OPTDIR}/bin/${ENGINE_CFG}"

#now edit the XML config file, which only exists after first run

if [ -f ${OPTDIR}/conf/my.service.xml ]; then

#allow direct connections from CrashPlan Desktop client on remote systems

#you must edit the value of serviceHost in conf/ui.properties on the client you connect with

#users report that this value is sometimes reset so now it's set every service startup

sed -i "s/<serviceHost>127\.0\.0\.1<\/serviceHost>/<serviceHost>0\.0\.0\.0<\/serviceHost>/" "${OPTDIR}/conf/my.service.xml"

#default changed in CrashPlan 4.3

sed -i "s/<serviceHost>localhost<\/serviceHost>/<serviceHost>0\.0\.0\.0<\/serviceHost>/" "${OPTDIR}/conf/my.service.xml"

#since CrashPlan 4.4 another config file to allow remote console connections

sed -i "s/127\.0\.0\.1/0\.0\.0\.0/" /var/lib/crashplan/.ui_info

#this change is made only once in case you want to customize the friends' backup location

if [ "${MANIFEST_PATH_SET}" != "True" ]; then

#keep friends' backup data outside the application folder to make accidental deletion less likely

sed -i "s%<manifestPath>.*</manifestPath>%<manifestPath>${MANIFEST_FOLDER}/backupArchives/</manifestPath>%" "${OPTDIR}/conf/my.service.xml"

echo "MANIFEST_PATH_SET=True" >> ${OPTDIR}/syno_package.vars

fi

#since CrashPlan version 3.5.3 the value javaMemoryHeapMax also needs setting to match that used in bin/run.conf

sed -i -r "s%(<javaMemoryHeapMax>)[0-9]+[mM](</javaMemoryHeapMax>)%\1${JAVA_MAX_HEAP}m\2%" "${OPTDIR}/conf/my.service.xml"

#make sure CrashPlan is not binding to the IPv6 stack

grep "\-Djava\.net\.preferIPv4Stack=true" "${OPTDIR}/bin/${ENGINE_CFG}" > /dev/null \

|| sed -i -r "s/(^${CFG_PARAM}=\".*)\"$/\1 -Djava.net.preferIPv4Stack=true\"/" "${OPTDIR}/bin/${ENGINE_CFG}"

else

echo "Check the package log to ensure the package has started successfully, then stop and restart the package to allow desktop client connections." > "${SYNOPKG_TEMP_LOGFILE}"

fi

#increase the system-wide maximum number of open files from Synology default of 24466

[ `cat /proc/sys/fs/file-max` -lt 65536 ] && echo "65536" > /proc/sys/fs/file-max

#raise the maximum open file count from the Synology default of 1024 - thanks Casper K. for figuring this out

#http://support.code42.com/Administrator/3.6_And_4.0/Troubleshooting/Too_Many_Open_Files

ulimit -n 65536

#ensure that Code 42 have not amended install.vars to force the use of their own (Intel) JRE

if [ -e ${OPTDIR}/jre-syno ]; then

JRE_PATH="`find ${OPTDIR}/jre-syno/ -name jre`"

[ -z ${JRE_PATH} ] && JRE_PATH=${OPTDIR}/jre-syno

sed -i -r "s|^(JAVACOMMON=).*$|\1\${JRE_PATH}/bin/java|" ${OPTDIR}/install.vars

#if missing, set timezone and locale for dedicated JRE

if [ -z ${TZ} ]; then

SYNO_TZ=`cat /etc/synoinfo.conf | grep timezone | cut -f2 -d'"'`

#fix for DST time in DSM 5.2 thanks to MinimServer Syno package author

[ -e /usr/share/zoneinfo/Timezone/synotztable.json ] \

&& SYNO_TZ=`jq ".${SYNO_TZ} | .nameInTZDB" /usr/share/zoneinfo/Timezone/synotztable.json | sed -e "s/\"//g"` \

|| SYNO_TZ=`grep "^${SYNO_TZ}" /usr/share/zoneinfo/Timezone/tzname | sed -e "s/^.*= //"`

export TZ=${SYNO_TZ}

fi

[ -z ${LANG} ] && export LANG=en_US.utf8

export CLASSPATH=.:${OPTDIR}/jre-syno/lib

else

sed -i -r "s|^(JAVACOMMON=).*$|\1\${JAVA_HOME}/bin/java|" ${OPTDIR}/install.vars

fi

source ${OPTDIR}/bin/run.conf

source ${OPTDIR}/install.vars

cd ${OPTDIR}

$JAVACOMMON $SRV_JAVA_OPTS -classpath $FULL_CP com.backup42.service.CPService > ${OPTDIR}/log/engine_output.log 2> ${OPTDIR}/log/engine_error.log &

if [ $! -gt 0 ]; then

echo $! > $PID_FILE

renice 19 $! > /dev/null

if [ -z "${SYNOPKG_PKGDEST}" ]; then

#script was manually invoked, need this to show status change in Package Center

[ -e ${PKG_FOLDER}/enabled ] || touch ${PKG_FOLDER}/enabled

fi

else

echo "${DNAME} failed to start, check ${OPTDIR}/log/engine_error.log" > "${SYNOPKG_TEMP_LOGFILE}"

echo "${DNAME} failed to start, check ${OPTDIR}/log/engine_error.log" >&2

exit 1

fi

}

stop_daemon ()

{

echo "I ${TIMESTAMP} Stopping ${DNAME}" >> ${DLOG}

kill `cat ${PID_FILE}`

wait_for_status 1 20 || kill -9 `cat ${PID_FILE}`

rm -f ${PID_FILE}

if [ -z ${SYNOPKG_PKGDEST} ]; then

#script was manually invoked, need this to show status change in Package Center

[ -e ${PKG_FOLDER}/enabled ] && rm ${PKG_FOLDER}/enabled

fi

#backup identity file in case DSM upgrade removes it

[ -e ${OPTDIR}/conf/var-backup ] || mkdir ${OPTDIR}/conf/var-backup

cp /var/lib/crashplan/.identity ${OPTDIR}/conf/var-backup/

}

daemon_status ()

{

if [ -f ${PID_FILE} ] && kill -0 `cat ${PID_FILE}` > /dev/null 2>&1; then

return

fi

rm -f ${PID_FILE}

return 1

}

wait_for_status ()

{

counter=$2

while [ ${counter} -gt 0 ]; do

daemon_status

[ $? -eq $1 ] && return

let counter=counter-1

sleep 1

done

return 1

}

case $1 in

start)

if daemon_status; then

echo ${DNAME} is already running with PID `cat ${PID_FILE}`

exit 0

else

echo Starting ${DNAME} ...

start_daemon

exit $?

fi

;;

stop)

if daemon_status; then

echo Stopping ${DNAME} ...

stop_daemon

exit $?

else

echo ${DNAME} is not running

exit 0

fi

;;

restart)

stop_daemon

start_daemon

exit $?

;;

status)

if daemon_status; then

echo ${DNAME} is running with PID `cat ${PID_FILE}`

exit 0

else

echo ${DNAME} is not running

exit 1

fi

;;

log)

echo "${DLOG}"

exit 0

;;

*)

echo "Usage: $0 {start|stop|status|restart|log}" >&2

exit 1

;;

esac
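The heap sizing rules in start_daemon are easier to check in isolation. The following is a minimal sketch, not part of the package: the function name pick_max_heap and its three positional arguments are assumptions made for illustration, while the RAM tiers and the two override rules are copied from the script above.

```shell
#!/bin/sh
# Illustrative only: pick_max_heap is a hypothetical helper, not part of the package.
# $1 = total RAM in MB, $2 = USR_MAX_HEAP in MB (0 if unset), $3 = JAVA_MIN_HEAP in MB
pick_max_heap ()
{
  ram=$1
  usr=$2
  min=$3
  #same RAM tiers as start_daemon
  if [ "$ram" -le 128 ]; then
    heap=80
  elif [ "$ram" -le 256 ]; then
    heap=192
  elif [ "$ram" -le 512 ]; then
    heap=384
  elif [ "$ram" -le 1024 ]; then
    heap=512
  else
    heap=1024
  fi
  #a larger user-specified value takes precedence
  if [ "$usr" -gt "$heap" ]; then
    heap=$usr
  fi
  #max heap can never be lower than min heap
  if [ "$heap" -lt "$min" ]; then
    heap=$min
  fi
  echo "$heap"
}
```

For example, `pick_max_heap 2048 0 20` prints 1024, and `pick_max_heap 2048 3072 20` prints 3072 because the user-specified value is larger than the tier default.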

install_uifile & upgrade_uifile

[

{

"step_title": "Client Version Selection",

"items": [

{

"type": "singleselect",

"desc": "Please select the CrashPlanPROe client version that is appropriate for your backup destination server:",

"subitems": [

{

"key": "WIZARD_VER_483",

"desc": "4.8.3",

"defaultValue": true

},

{

"key": "WIZARD_VER_480",

"desc": "4.8.0",

"defaultValue": false

},

{

"key": "WIZARD_VER_470",

"desc": "4.7.0",

"defaultValue": false

},

{

"key": "WIZARD_VER_460",

"desc": "4.6.0",

"defaultValue": false

},

{

"key": "WIZARD_VER_452",

"desc": "4.5.2",

"defaultValue": false

},

{

"key": "WIZARD_VER_450",

"desc": "4.5.0",

"defaultValue": false

},

{

"key": "WIZARD_VER_441",

"desc": "4.4.1",

"defaultValue": false

},

{

"key": "WIZARD_VER_430",

"desc": "4.3.0",

"defaultValue": false

},

{

"key": "WIZARD_VER_420",

"desc": "4.2.0",

"defaultValue": false

},

{

"key": "WIZARD_VER_370",

"desc": "3.7.0",

"defaultValue": false

},

{

"key": "WIZARD_VER_364",

"desc": "3.6.4",

"defaultValue": false

},

{

"key": "WIZARD_VER_363",

"desc": "3.6.3",

"defaultValue": false

},

{

"key": "WIZARD_VER_3614",

"desc": "3.6.1.4",

"defaultValue": false

},

{

"key": "WIZARD_VER_353",

"desc": "3.5.3",

"defaultValue": false

},

{

"key": "WIZARD_VER_341",

"desc": "3.4.1",

"defaultValue": false

},

{

"key": "WIZARD_VER_33",

"desc": "3.3",

"defaultValue": false

}

]

}

]

},

{

"step_title": "Java Runtime Environment Selection",

"items": [

{

"type": "singleselect",

"desc": "Please select the Java version which you would like CrashPlan to use:",

"subitems": [

{

"key": "WIZARD_JRE_SYS",

"desc": "Default system Java version",

"defaultValue": false

},

{

"key": "WIZARD_JRE_CP",

"desc": "Dedicated installation of Java 8",

"defaultValue": true

}

]

}

]

}

]
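Package Center hands each wizard key to the package scripts as an environment variable set to true or false. A minimal sketch of how an installer script might turn the selection into a version string, assuming a hypothetical function name wizard_version and covering only three of the keys; the real installer logic is not shown on this page.

```shell
#!/bin/sh
# Hypothetical sketch, not the package's installer.
# Reads the WIZARD_VER_* variables (true/false) and prints the matching version.
wizard_version ()
{
  if [ "${WIZARD_VER_483:-}" = "true" ]; then
    echo "4.8.3"
  elif [ "${WIZARD_VER_480:-}" = "true" ]; then
    echo "4.8.0"
  elif [ "${WIZARD_VER_470:-}" = "true" ]; then
    echo "4.7.0"
  else
    #no recognised selection
    return 1
  fi
}
```

Because the wizard step is a singleselect, only one of the keys should be true at a time, so the order of the tests does not matter.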

Changelog:

- 0047 30/Oct/17 – Updated dedicated Java version to 8 update 151, added support for additional Intel CPUs in x18 Synology products.

- 0046 26/Aug/17 – Updated to CrashPlan PRO 4.9, added support for migration from CrashPlan For Home to CrashPlan For Small Business (CrashPlan PRO). Please read the Migration section on this page for instructions.

- 0045 02/Aug/17 – Updated to CrashPlan 4.8.3, updated dedicated Java version to 8 update 144

- 0044 21/Jan/17 – Updated dedicated Java version to 8 update 121

- 0043 07/Jan/17 – Updated dedicated Java version to 8 update 111, added support for Intel Broadwell and Grantley CPUs

- 0042 03/Oct/16 – Updated to CrashPlan 4.8.0, Java 8 is now required, added optional dedicated Java 8 Runtime instead of the default system one including 64bit Java support on 64 bit Intel CPUs to permit memory allocation larger than 4GB. Support for non-Intel platforms withdrawn owing to Code42’s reliance on proprietary native code library libc42archive.so

- 0041 20/Jul/16 – Improved auto-upgrade compatibility (hopefully), added option to have CrashPlan use a dedicated Java 7 Runtime instead of the default system one, including 64bit Java support on 64 bit Intel CPUs to permit memory allocation larger than 4GB

- 0040 25/May/16 – Added cpio to the path in the running context of start-stop-status.sh

- 0039 25/May/16 – Updated to CrashPlan 4.7.0, at each launch forced the use of the system JRE over the CrashPlan bundled Intel one, added Maven build of JNA 4.1.0 for ARMv7 systems consistent with the version bundled with CrashPlan

- 0038 27/Apr/16 – Updated to CrashPlan 4.6.0, and improved support for Code 42 pushed updates

- 0037 21/Jan/16 – Updated to CrashPlan 4.5.2

- 0036 14/Dec/15 – Updated to CrashPlan 4.5.0, separate firewall definitions for management client and for friends backup, added support for DS716+ and DS216play

- 0035 06/Nov/15 – Fixed the update to 4.4.1_59, new installs now listen for remote connections after second startup (was broken from 4.4), updated client install documentation with more file locations and added a link to a new Code42 support doc. EITHER completely remove and reinstall the package (which will require a rescan of the entire backup set) OR alternatively please delete all except for one of the failed upgrade numbered subfolders in /var/packages/CrashPlan/target/upgrade before upgrading. There will be one folder for each time CrashPlan tried and failed to start since Code42 pushed the update

- 0034 04/Oct/15 – Updated to CrashPlan 4.4.1, bundled newer JNA native libraries to match those from Code42, PLEASE READ UPDATED BLOG POST INSTRUCTIONS FOR CLIENT INSTALL (this version introduced yet another requirement for the client)

- 0033 12/Aug/15 – Fixed version 0032 client connection issue for fresh installs

- 0032 12/Jul/15 – Updated to CrashPlan 4.3, PLEASE READ UPDATED BLOG POST INSTRUCTIONS FOR CLIENT INSTALL (this version introduced an extra requirement), changed update repair to use the chrisnelson.ca method, forced CrashPlan to prefer IPv4 over IPv6 bindings, removed some legacy version migration scripting, updated main blog post documentation

- 0031 20/May/15 – Updated to CrashPlan 4.2, cross compiled a newer cpio binary for some architectures which were segfaulting while unpacking main CrashPlan archive, added port 4242 to the firewall definition (friend backups), package is now signed with repository private key

- 0030 16/Feb/15 – Fixed show-stopping issue with version 0029 for systems with more than one volume

- 0029 21/Jan/15 – Updated to CrashPlan version 3.7.0, improved detection of temp folder (prevent use of /var/@tmp), added support for Annapurna Alpine AL514 CPU (armhf) in DS2015xs, added support for Marvell Armada 375 CPU (armhf) in DS215j, abandoned practical efforts to try to support Code42’s upgrade scripts, abandoned inotify support (realtime backup) on PowerPC after many failed attempts with self-built and pre-built jtux and jna libraries, back-merged older libffi support for old PowerPC binaries after it was removed in 0028 re-write

- 0028 22/Oct/14 – Substantial re-write:

  - Updated to CrashPlan version 3.6.4

  - DSM 5.0 or newer is now required

  - libjnidispatch.so taken from Debian JNA 3.2.7 package with dependency on newer libffi.so.6 (included in DSM 5.0)

  - jna-3.2.5.jar emptied of irrelevant CPU architecture libs to reduce size

  - Increased default max heap size from 512MB to 1GB on systems with more than 1GB RAM

  - Intel CPUs no longer need the awkward glibc version-faking shim to enable inotify support (for real-time backup)

  - Switched to using root account – no more adding account permissions for backup, package upgrades will no longer break this

  - DSM Firewall application definition added

  - Tested with DSM Task Scheduler to allow backups between certain times of day only, saving RAM when not in use

  - Daemon init script now uses a proper PID file instead of Code42’s unreliable method of using grep on the output of ps

  - Daemon init script can be run from the command line

  - Removal of bash binary dependency now Code42’s CrashPlanEngine script is no longer used

  - Removal of nice binary dependency, using BusyBox equivalent renice

  - Unified ARMv5 and ARMv7 external binary package (armle)

  - Added support for Mindspeed Comcerto 2000 CPU (comcerto2k – armhf) in DS414j

  - Added support for Intel Atom C2538 (avoton) CPU in DS415+

  - Added support to choose which version of CrashPlan PROe client to download, since some servers may still require legacy versions

  - Switched to .tar.xz compression for native binaries to reduce web hosting footprint

- 0027 20/Mar/14 – Fixed open file handle limit for very large backup sets (ulimit fix)

- 0026 16/Feb/14 – Updated all CrashPlan clients to version 3.6.3, improved handling of Java temp files

- 0025 30/Jan/14 – glibc version shim no longer used on Intel Synology models running DSM 5.0

- 0024 30/Jan/14 – Updated to CrashPlan PROe 3.6.1.4 and added support for PowerPC 2010 Synology models running DSM 5.0

- 0023 30/Jan/14 – Added support for Intel Atom Evansport and Armada XP CPUs in new DSx14 products

- 0022 10/Jun/13 – Updated all CrashPlan client versions to 3.5.3, compiled native binary dependencies to add support for Armada 370 CPU (DS213j), start-stop-status.sh now updates the new javaMemoryHeapMax value in my.service.xml to the value defined in syno_package.vars

- 0021 01/Mar/13 – Updated CrashPlan to version 3.5.2

- 0020 21/Jan/13 – Fixes for DSM 4.2

- 018 Updated CrashPlan PRO to version 3.4.1

- 017 Updated CrashPlan and CrashPlan PROe to version 3.4.1, and improved in-app update handling

- 016 Added support for Freescale QorIQ CPUs in some x13 series Synology models, and installer script now downloads native binaries separately to reduce repo hosting bandwidth, PowerQUICC PowerPC processors in previous Synology generations with older glibc versions are not supported

- 015 Added support for easy scheduling via cron – see updated Notes section

- 014 DSM 4.1 user profile permissions fix

- 013 implemented update handling for future automatic updates from Code 42, and incremented CrashPlanPRO client to release version 3.2.1

- 012 incremented CrashPlanPROe client to release version 3.3

- 011 minor fix to allow a wildcard on the cpio archive name inside the main installer package (to fix CP PROe client since Code 42 Software had amended the cpio file version to 3.2.1.2)

- 010 minor bug fix relating to daemon home directory path

- 009 rewrote the scripts to be even easier to maintain and unified as much as possible with my imminent CrashPlan PROe server package, fixed a timezone bug (tightened regex matching), moved the script-amending logic from installer.sh to start-stop-status.sh with it now applying to all .sh scripts each startup so perhaps updates from Code42 might work in future, if wget fails to fetch the installer from Code42 the installer will look for the file in the public shared folder

- 008 merged the 14 package scripts each (7 for ARM, 7 for Intel) for CP, CP PRO, & CP PROe – 42 scripts in total – down to just two! ARM & Intel are now supported by the same package, Intel synos now have working inotify support (Real-Time Backup) thanks to rwojo’s shim to pass the glibc version check, upgrade process now retains login, cache and log data (no more re-scanning), users can specify a persistent larger max heap size for very large backup sets

- 007 fixed a bug that broke CrashPlan if the Java folder moved (if you changed version)

- 006 installation now fails without User Home service enabled, fixed Daylight Saving Time support, automated replacing the ARM libffi.so symlink which is destroyed by DSM upgrades, stopped assuming the primary storage volume is /volume1, reset ownership on /var/lib/crashplan and the Friends backup location after installs and upgrades

- 005 added warning to restart daemon after 1st run, and improved upgrade process again

- 004 updated to CrashPlan 3.2.1 and improved package upgrade process, forced binding to 0.0.0.0 each startup

- 003 fixed ownership of /volume1/crashplan folder

- 002 updated to CrashPlan 3.2

- 001 30/Jan/12 – initial public release

New info: on my NAS @ work, version 1435813200480 was installed this morning.

In Package Center it shows that CrashPlan is NOT running.

However, it’s still running, backing up every hour, and I can connect with the client.

I just got the new CP version updated on my DS216. It took two restarts of the CP app on the NAS before the update was properly applied. The update also pulled down its own Java version (1.8U72).

Only odd thing was that the upgrade caused a block sync.

All in all, I’m back online at 100%.

Good idea TD to not have CP update automatically by executing the commands “mv upgrade upgrade.tmp” and then “touch upgrade”

Hopefully it will continue to run for months from now on.
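The two commands quoted above swap the upgrade folder for an empty file of the same name, presumably so that a pushed update has nowhere to unpack. A minimal sketch of that idea, assuming a hypothetical helper named block_upgrades that takes the CrashPlan application folder as its argument (the original commands were evidently run from inside that folder):

```shell
#!/bin/sh
# Hypothetical helper, not part of the package.
# $1 = CrashPlan application folder containing the upgrade directory
block_upgrades ()
{
  app_dir=$1
  #nothing to do unless upgrade is still a directory
  if [ ! -d "${app_dir}/upgrade" ]; then
    return 0
  fi
  #refuse to overwrite an earlier saved copy
  if [ -e "${app_dir}/upgrade.tmp" ]; then
    return 1
  fi
  mv "${app_dir}/upgrade" "${app_dir}/upgrade.tmp"
  touch "${app_dir}/upgrade"
}
```

To undo the change, delete the empty upgrade file and rename upgrade.tmp back to upgrade.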

Indeed! I now have a message at the top of the client saying it failed to upgrade and will try again in one hour!! Hopefully this means it will run until the Crashplan back end no longer supports the 4.8 version.

I’m trying to reinstall Crashplan from patters’ repo. Unfortunately I uninstalled the version that I had. When I tried to re-install it my 213j couldn’t find the package. I removed and added back the repository to no avail; the package could not be found. Has something changed in the latest version that prevents it from being installed on a 213j? The odd thing was that prior to uninstalling the package I saw an upgrade available that I attempted to install. It was only when this failed that I uninstalled the old (non-functioning) installation.

Like others here, I’m getting tired of fixing crashplan repeatedly. So what’s a good NAS/Server + cloud back up combination for us uneducated home users, particularly those who don’t really want to spend a lot of time administrating. Is buying a cheap Dell server or equivalent a better setup than using a headless NAS like Synology?

Was hit by update 1435813200480 this morning, and it stopped.

Did 2 manual restarts and it is now up and running (synchronising). I noticed it had reset the syno_package.vars file so I had to reinsert my USR_MAX_HEAP setting. Apart from that it looks like everything went OK.

DS412+, 2GB RAM, DSM 6.0.2-8451 Update 1
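Since start_daemon sources syno_package.vars and reads USR_MAX_HEAP from it, putting the setting back after an update can be scripted. A minimal sketch, assuming a hypothetical helper named set_usr_max_heap; the path to the vars file is passed in rather than hard-coded, because the install location varies between systems.

```shell
#!/bin/sh
# Hypothetical helper, not part of the package.
# $1 = path to syno_package.vars, $2 = max heap in MB (e.g. 2048)
set_usr_max_heap ()
{
  vars_file=$1
  heap=$2
  [ -f "$vars_file" ] || touch "$vars_file"
  #keep every other line (such as MANIFEST_PATH_SET) and drop any old USR_MAX_HEAP entry
  grep -v "^USR_MAX_HEAP=" "$vars_file" > "$vars_file.new" || true
  echo "USR_MAX_HEAP=${heap}" >> "$vars_file.new"
  mv "$vars_file.new" "$vars_file"
}
```

The value is only read when the daemon starts, so restart the package afterwards for it to take effect.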

Testing Amazon Drive ($60/year) with Hyperbackup seems to work fine so far.. It has been running stable for 3 weeks now (backup size approx 400GB). I’m using 3 ‘profiles’ with different schedules and with/without versioning enabled.

– Support for versioning

– No restore or file access outside Hyperbackup restore

(maybe via Hyperbackup Explorer if you mount your Amazon Drive as a folder?)

– Restore only to original location

– Restore not per file, per folder only

– Restores keep original file dates (incl. folders)

– Supports encryption

– NO mobile apps to access the data, as the data on the Amazon Drive is basically an encrypted backup.

– NO web interface to access your backed up files (except for Hyperbackup restore)

You can restore individual files, and you can restore to different locations. See my previous comments.

I am thinking about Cloud Sync + Hyperbackup. I started with CloudSync and have about 60% of my data there and it has the ability to restore per file and mobile access and a nice web interface (not using the additional synology layer of encryption). Of course, there is no root folder backup and versioning but it serves my immediate need (offsite copy of my files). And it is nice to go a supported route than trying to fix crashplan every time an update rolls out. Since I have an ARM processor, crashplan is no longer an option so I am pretty sure I am going to stay with the $70/yr amazon option.

Update for my NAS completed.

Can start Crashplan but within the minute it stops again :-(

Check your .ui_info file on your server, they changed TCP port and ID yet again, so you will have to align your client end .ui_info file.
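Comparing the two .ui_info files is quicker with the fields split out. A minimal sketch, assuming the file holds a single comma-separated line of the form port,token,address; that layout is an assumption, it has changed between CrashPlan versions, and show_ui_info is a hypothetical helper name.

```shell
#!/bin/sh
# Hypothetical helper, not part of the package.
# Assumes .ui_info is one line of the form: port,token,address
# $1 = path to a .ui_info file
show_ui_info ()
{
  IFS=, read -r ui_port ui_token ui_host < "$1"
  echo "port=${ui_port} token=${ui_token} host=${ui_host}"
}
```

Running it against /var/lib/crashplan/.ui_info on the NAS, and against the client’s own copy, shows at a glance whether the port and token still match.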

Yep, I have the same problem the 1435813200480_233 update has killed CrashPlan again as of 10/27/2016. I saw the post above and I equally had to go back in and fix the USR_MAX_HEAP in syno_package.vars but no matter how many times I tried to stop and restart the package from the Synology Package Center it still won’t start and the last entry in the history log is:

10/25/16 01:30PM Synology extracting upgrade from /var/packages/CrashPlan/target/upgrade/1435813200480_323.jar

It seems that this is related to Synology downloading jre-linux-i586-1.8.0_72.tgz which is sitting in the CrashPlan root directory and in the install.vars script it’s looking for

JAVACOMMON=${JAVA_HOME}/bin/java

where the original install.vars used to have

JAVACOMMON=/volume1/@appstore/CrashPlan/jre/bin/java

the last entry in the engine_error.log

/var/packages/CrashPlan/scripts/start-stop-status.sh: line 190: /bin/java: No such file or directory

At first I just tried to use the original line and point to the existing java binary but that did not work as after trying to restart the package it replaced the JAVACOMMON line in the install.vars to the new version again.

So I figured to try and create a symlink in the CrashPlan/bin directory called java pointing to the current java binary but that didn’t work either. The package just doesn’t want to start with no further information in the logs than what’s above.

I didn’t want to experiment with trying to untar/gzip the new jre-linux-i586-1.8.0_72.tgz file for fear of doing it wrong and having a massive load of java garbage in my directory structure.

Patters – can you make an update to the package so we can get through ‘yet another synology update’ that we have all gotten used to at this point.
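The /bin/java error above is consistent with JAVA_HOME being empty when install.vars is read: start_daemon rewrites the JAVACOMMON line to ${JAVA_HOME}/bin/java whenever the jre-syno folder is absent, which would also explain why a manual edit of that line is overwritten at the next start. A minimal sketch that reproduces the substitution on a scratch file; rewrite_javacommon is a hypothetical name, and the sed expression is a basic-regex equivalent of the one in the script.

```shell
#!/bin/sh
# Hypothetical demonstration, not part of the package.
# $1 = path to a scratch copy of install.vars
rewrite_javacommon ()
{
  #same effect as the substitution in the system-Java branch of start_daemon
  sed "s|^\(JAVACOMMON=\).*$|\1\${JAVA_HOME}/bin/java|" "$1" > "$1.new" && mv "$1.new" "$1"
}

demo=$(mktemp)
echo "JAVACOMMON=/volume1/@appstore/CrashPlan/jre/bin/java" > "$demo"
rewrite_javacommon "$demo"
cat "$demo"
#with JAVA_HOME empty the sourced value collapses to /bin/java
(JAVA_HOME=""; . "$demo"; echo "$JAVACOMMON")
rm -f "$demo"
```

On that reading, the line to check is whatever sets JAVA_HOME in the profile files sourced at the top of the script, rather than install.vars itself.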

Is there a reason that the latest Crashplan package is not visible as an install on a 213j? I’ve got the patters repo configured but no Crashplan package is listed under community section in package center (after refreshing too).

Same here. I was going to try reinstalling, and it’s gone :(

See (many) previous comments – due to changes in a proprietary module, CrashPlan will no longer function on non-Intel CPUs, so Patters has withdrawn the package. Earlier versions can still be downloaded manually – again, see previous comments.

Since 23:00 today, CrashPlan has stopped working again after this message:

Synology extracting upgrade from /var/packages/CrashPlan/target/upgrade/1435813200480_323.jar

What is the problem?

OK, the update doesn’t work. I’ve done it manually:

sudo cp /volume1/public/1435813200460_382/*.jar /volume1/@appstore/CrashPlan/lib

sudo cp /volume1/public/1435813200460_382/lang/*.* /volume1/@appstore/CrashPlan/lang/

Well here we go again! Effing Cr@pPlan at its best again. I have now done the chmod 444 on the update directory so up your Cr@pPlan! I am running HyperBackup to Amazon and will switch when my subscription to this Cr@pPlan runs out in 7 months time. Totally sick of it.

Does anyone have a reasonable explanation as to why on earth they are so against supporting NAS devices? Is it their Java client? I really don’t get it…

I got 4.8 running, Java 8 running. I edited my ui files with the keys, serviceHost etc. For some reason I can’t connect to it at all from the client. Anybody else experience this? Everything seems to be running, I’m just not having luck with the client.

This seems to be my problem too. 4.8.0 now seems to run fine. starts / stops fine … yet none of the previous clients connect.

ok – this might be it.

I changed .ui_info so that I could log into the Crashplan server on the NAS.

this presented me with the standard Crashplan login/create new account.

I logged in – a minute or so later, the first client connected.

I guess the server was “stuck” waiting on the login before accepting connections

Anyone tried Cloudberry lab backup for Synology with backblaze B2? I know B2 over a terabyte is too expensive but wondering if anyone tried it. I only have 130 GB of data.

I have tried Backblaze B2, and my experience is that it’s not mature yet. At first glance it looked promising: I made a backup of my homes folder (130GB), and after a successful backup I decided to back up my public folder into the same bucket. When setting up the backup it was not possible to create or see the remote folders anymore. After some googling I found that others had the same problem. https://forum.synology.com/enu/viewtopic.php?t=120289

Patters – any chance you can fix the latest 480_223 update to make it work again?

Agreed. Since the last CrashPlan update my client crashes after synchronizing. Then if I restart the client hangs on the welcome popup that has the version number. Both client and server are running 4.8. The Synology CrashPlan package is still running with no errors in the logs. The client has no errors in the logs either.

This is rather odd. Crashplan has been broken on my DS1815+ for more than 20 days. It’s been normal for it to be broken for a few days or even a week but I think this is the longest downtime I’ve had with this package. I sure hope Patters can get this resolved soon.

cannot install on ds214+ after upgrading to dsm6. Is crashplan not compatible with ds214+ anymore?

+1

Well, I stumbled across a nasty little quirk of Hyper Backup backing up to Amazon Drive yesterday…

I lost power yesterday, and even though my UPS kept everything up, if UPS support is enabled on the NAS, DSM puts the NAS into Safe Mode, stopping all services and unmounting the volumes.

When the power was restored, DSM started all services and mounted the volume. However, the backup I had running via Hyper Backup was in a “canceled” state. Starting it again started it at ground zero!

It had been running for 2 weeks and had a little over 50% complete of 3.4TB….all down the drain!

There is no option for resuming the backup, so it looks like that sux a big one. If you’ve got small backup sets, it’s not much of an issue. But hundreds of Gig? BIG ISSUE!!!

It seems that Hyper Backup is a little too limited and not robust enough for my needs, and Backblaze is too expensive, so sad to say, I’m going to have to tough it out with CP until there’s a better solution.

It appears that you are correct – Hyperbackup tasks are not resumable, and only commit their changes at completion. The good news is that if a backup *does* get interrupted, it doesn’t affect any of the data from previous successful backups.

To minimize exposure to loss while I seeded my multi-terabyte Hyper Backup to Amazon Drive, I simply ran a number of backups one after the other, adding a new directory branch each time until eventually I had everything up there.

I discovered that “feature” during my initial testing, when I deliberately canceled a backup and found that the progress to that point got discarded. Accordingly, I started by selecting only a subset of the directories I wanted to include, ran a backup, added some more, ran another backup, etc, until eventually I had everything backed up. By doing so, I only stood to lose 10-12 hours’ worth of effort and bandwidth in the event of an interruption.

I also verified that what you lose is *only* the latest data that was being backed up by that task – the existing backups already in the cloud are not affected at all.

Why not use CloudSync ? It connects to Amazon Drive and encrypts…

1435813200480_323 has left my crashplan not able to start (with no indication in the log what’s going on).

Just a quick note re: Amazon Drive – it *does* have a file size limit of 10GB – just so you are aware. I have many .m2ts files that I backup to Crashplan (with no limitation) – but those can’t move up to Amazon Drive. Might not be a problem for most people – but thought I would mention it.

Good to know. Thank you, I’m in a similar situation.

Lovely! It won’t affect me but good to know. I have now cancelled my Amazon Drive service, so after the 3 month free trial I won’t continue.

This may affect CloudSync, but it does not affect HyperBackup, which breaks things up into manageable chunks before it sends them to whatever storage media you have chosen. I have many files much larger than 10GB, and they’re all backing up to Amazon just fine via HyperBackup.

My HyperBackup to Amazon drive fails regularly with message can’t reach Amazon… Can’t figure out why.

Also, without any changes to the backup set, it takes 8 minutes to back up. Maybe this is normal, but it is still annoying.

It’s an unattended background process – why does it matter that it takes eight minutes to back up even if there have been no changes? It may be checking for expired versions, or performing some other sort of housekeeping, but why care, since it only impacts you if you’re sitting there watching it?

Because I only have 130GB of data for now, and that is about to grow rapidly over the next few months. If the backup window for 130GB of data with 0 changes is 8 min, then what would 1TB or more look like, with or without changes…

CP, as much as I hate the product, seems to have a different approach.

CrashPlan works differently and it can still take time.

I’ve got 550GB in a Hyper Backup task and it doesn’t take long to run when there is nothing new to add

Jon, have you tried to restore those >10GB files?

A few weeks ago I tested Hyper Backup and Amazon Drive with some 13GB avi-files. Backup went fine BUT restore didn’t work (I first deleted the local backup task and then tried to “Restore from existing repositories”). I tried several times; every time the restore failed after some minutes and I got these error messages in the log: “Exception occurred while restoring data.” followed by “Failed to run restore.”

So, I decided not to spend more time on Hyper Backup at the moment.

I now have a total of 1.7TB backed up to Amazon Drive via HyperBackup, and I only started using it three weeks ago – to back up that same amount of data via Crashplan would have taken about a year, I reckon. (I actually used CrashPlan’s “send me a disk drive” option to seed my backups onto their cloud servers, but that’s another story and I hear that they no longer offer that service anyway.)

Those 1.7 terabytes are broken up into several smaller HyperBackup jobs, each backing up a specific portion of my NAS. The largest – our Homes and a family Shared folder – holds almost 900GB, and last night’s backup, which backed up just over 1GB of new and changed files, took just over five minutes including version rotation.

Which suggests that the amount of time taken to back up only minor changes seems to be pretty much unaffected by the amount of data already backed up so you can rest easy there, Dimi.

Smashing :) good to know and thank you!

Jon, I seem to be having the opposite experience for upload times to Amazon & CP to what you’re seeing. Before I had to restart my backup to Amazon, it had completed about 1.2TB in a little more than 2 weeks. When I did my initial upload of 3.2TB to CP, it took about a month. The upload speeds I am seeing, as reported by DSM’s Resource monitor showed ~500-900KB/s for Amazon at various times of the day, & currently showing ~700-900KB/s. I am NOT being throttled at the ISP.
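As a rough cross-check of the figures above, the average rate implied by a volume and a duration can be worked out with a short shell sketch. It assumes decimal units (1TB = 10^9 KB), exactly 14 days for "a little more than 2 weeks" and 30 days for "about a month"; the function name `avg_kb_per_sec` is made up for this illustration.

```shell
#!/bin/sh
# Average upload rate in KB/s for a volume given in TB (decimal units assumed)
# transferred over a given number of days.
avg_kb_per_sec() {
    awk -v tb="$1" -v days="$2" \
        'BEGIN { printf "%.0f\n", (tb * 1e9) / (days * 86400) }'
}

avg_kb_per_sec 1.2 14   # Amazon Drive figure quoted above  → 992
avg_kb_per_sec 3.2 30   # CrashPlan figure quoted above     → 1235
```

Under those assumptions the Amazon upload averages a little under 1MB/s, slightly above the 500-900KB/s snapshots seen in Resource Monitor, and the CrashPlan upload comes out somewhat faster, which is in line with the experience described.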

Jon, have you tried to restore those >10GB files?

A few weeks ago I tested Hyper Backup and Amazon Drive with some 13GB avi-files. Backup went fine BUT restore didn’t work (I first deleted the local backup task and then tried to “Restore from existing repositories”). I tried several times; every time the restore failed after some minutes and I got these error messages in the log: “Exception occurred while restoring data.” followed by “Failed to run restore.”

So, I decided not to spend more time on Hyper Backup at the moment.

Yes – I’m in the middle of a large restore test right now, as it happens. 470GB restored so far, including one file over 200GB, with no errors, failures, or retries, and the restored files are bit-perfect duplicates of the originals.

I love my Synology, I love my Crashplan, and I love the support you guys at pcloadletter are giving …

… but this is getting old …

every other month we are stuck with a Crashplan upgrade. Painful ….

I see 2 options …

(1) I have an old Windows machine (fairly low power, used for media streaming) sitting around. I can install CrashPlan on that machine (in ‘user’ mode), automap the Synology shares I want backed up, configure the machine for autologon, and let CrashPlan do the job on that machine

(2) I forget Crashplan and move to a competitor, perhaps backblaze

without the good folks here, I would have moved away a long time ago. Can’t drop Synology, but I can drop Crashplan

any thoughts?

thx

Wolfgang

I just want to leave a comment here (after so much time of just getting help from all of you) about my experience with CrashPlan (the Synology package) and why I will move away from it.

For the last 3 years I have been using my NAS as a backup solution for all my computers and used CrashPlan for that purpose (I paid for a family plan). When it worked it was almost perfect (damn you, Java) but as you all know, it breaks a lot (damn you, updates) and at some point I got tired of that. I really, really appreciate all the work Patters has done, but the time to move on has come.

I now use Amazon Cloud Drive and Hyper Backup as the backup solution for my Synology. It just works out of the box, and that is all that matters to me now. The price for Cloud Drive is decent and I don’t need to worry about anything else (I also use Cloud Sync for other purposes). I don’t have all my backups in Amazon Cloud Drive yet, but it’s going pretty fast (faster than uploading to the CrashPlan cloud).

For all my other computers I installed Arq Backup. (I was thinking of continuing to use CrashPlan as a local backup option, but I gave up on that: the Java versions and the Synology package broke after every single update.) It costs $50 but in my opinion it’s worth the money. It backs up to a lot of cloud services (Amazon Drive is included, for example) and to any local device (folder, NAS, etc). Basically it’s what I always wanted CrashPlan to be, but without all the problems. The upload is really fast and the interface is very intuitive. It just works.

So I canceled my crashplan account (I still have 3 more years with them. No refund possible) and it’s only being used until I have all my backups saved with the new tools.

while waiting for a fix, trying hyperbackup to amazon drive. was backing up extreeeeeeeeemely slowly. after a week, i tried a version that did not have compression or encryption turned on. amazingly, backing up even more slowly. i thought crashplan was bad at 4MB, but this is < 1MB. just terrible.

Dunno what to tell you – my nightly Hyperbackups to Amazon Drive are saturating my 20Mbps uplink, and my current restore test is chugging along at 50Mbps down.

Maybe Hyperbackup is sensitive to CPU and memory – my Syno is a DS1815+ (2.4GHz quad-core Intel Atom) with 6GB of RAM.

Further to this, I am currently testing multiple concurrent restores. It appears that Hyperbackup will only run five at a time – the rest are “waiting” – but I’m more than happy with the throughput. It’s spiky rather than a constant flood, but I’m seeing times where it is saturating my 200Mbps downlink, and an average of a little over 120Mbps.

DS214se – ARM based

Today CP on the NAS tried to download an update to 4.8. I have set CP to prevent the upgrade (as outlined in an earlier post by Per), and that works, but it tries to download and update every hour, logging each attempt. It’s unlikely to stop these attempts, so I might need to move CP off the NAS and use the shared drive approach.

What’s the harm in letting it try every hour? If it’s not impacting performance, and only logging one or two lines, I’d let ‘er try it ’til the cows come home.

Has anyone tried to figure out how CP is checking for updates? I couldn’t find any cronjobs in the usual places that would be doing it.

What’s the harm in letting it check for updates? As long as it’s not affecting performance and spamming the logs, it shouldn’t matter.

Has anyone tried to figure out how CP is checking for updates? I did a cursory look for a cronjob/crontab and didn’t see one in the usual places.
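For anyone wanting to repeat that search, a minimal sketch follows. The function name `find_cron_mentions` and the example paths are assumptions, not something taken from the CrashPlan package, so adjust them for your DSM version. An empty result would only show that no cron entry mentions CrashPlan; the check might equally be made from inside the CrashPlan service itself.

```shell
#!/bin/sh
# Print the files under the given paths that mention a pattern
# (case-insensitive). Paths that do not exist are skipped.
find_cron_mentions() {
    pattern="$1"
    shift
    for path in "$@"; do
        if [ -e "$path" ]; then
            # -r recurses into directories, -l prints only matching file names
            grep -ril -- "$pattern" "$path" 2>/dev/null || true
        fi
    done
}

# Example (hypothetical list of "usual places"):
#   find_cron_mentions crashplan /etc/crontab /etc/cron.d /var/spool/cron
```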

Has anyone been able to get 480_223 work? If not, how are you still running with the previous version that Patters released? I’ve been down for about a week now and would really like to get this resolved.

No update from patters yet.

Uninstall CP

Install CP from patters, don’t start the package

Apply the block update fix mentioned here chmod 444 update, etc

Start CP

Wait for sync

Wait for patters to update package

Thanks Dimi – I figured that people had to be blocking the update or there would have been a ton more comments saying they were down as well.

DS213+ (QorIQ-based) user here. Thanks, Patters, for all your efforts on our behalf with CrashPlan on Synology. I really appreciated it. It was a fun, if sometimes bumpy ride.

I’ve just signed up for an Amazon Drive trial and am setting it up with Hyper Backup. Could anybody weigh in on the advantages, disadvantages, usability impact of selecting the client-side encryption option in the backup wizard?

All,

The last bunch of times mine auto-upgraded I just did these quick steps and was back and working within 5 mins!

1. Stop Crashplan

2. ssh in

3. If you don’t have cpio in /bin do this:

cd /bin

ln -s /var/packages/CrashPlan/target/bin/cpio

3. cd /var/packages/CrashPlan/target/upgrade/LATESTUPGRADEDIR

4. cat ./upgrade.cpi | gzip -d -c - | cpio -iv

5. Restart CP and you should be golden!

I’m using ssh keys to ssh into my nas as the root user, if you’re admin or some other user you’d want to do step 4 as:

4. sudo sh -c 'cat ./upgrade.cpi | gzip -d -c - | cpio -iv'

The thing that takes the most time is rm’ing the 43579345789435789 upgrade dirs that are created during the failing upgrade process. After that it’s a super quick fix!

Good luck!

Ignore my screwed up step numbering.. haha
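The manual steps above could be wrapped into a few helper functions along these lines. This is only a sketch: the default path is the one quoted in the steps, the function names are invented for illustration, and choosing the "latest" upgrade directory by modification time is an assumption (the original steps leave that choice to the reader).

```shell
#!/bin/sh
# Sketch only. Run as root with CrashPlan stopped, and make sure cpio is on the PATH.
UPGRADE_ROOT="${UPGRADE_ROOT:-/var/packages/CrashPlan/target/upgrade}"

# Print the most recently modified sub-directory of the upgrade folder
# (assumption: the newest directory is the one holding the pending upgrade).
latest_upgrade_dir() {
    ls -1dt "$1"/*/ 2>/dev/null | head -n 1
}

# Print every other sub-directory, i.e. the leftovers from failed attempts.
stale_upgrade_dirs() {
    ls -1dt "$1"/*/ 2>/dev/null | tail -n +2
}

# Unpack upgrade.cpi inside the given directory, as in step 4 above.
unpack_upgrade() {
    ( cd "$1" && gzip -d -c ./upgrade.cpi | cpio -iv )
}

# Example session:
#   dir=$(latest_upgrade_dir "$UPGRADE_ROOT")
#   unpack_upgrade "$dir"
#   stale_upgrade_dirs "$UPGRADE_ROOT"   # review this list before deleting anything
```

Listing the stale directories instead of deleting them outright is deliberate, so the list can be checked by eye before any rm is run.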

I get this error?