Many storage vendors now offer hardware Volume Shadow Copy Service (VSS) providers for their storage arrays, which allow the SAN itself to take the snapshot rather than the server's OS. These providers work with the Exchange and SQL Server VSS writers, so the applications quiesce their databases and transaction logs just before the snapshot is taken.

The big win here is off-host backup: the target server asks the SAN to snapshot the data volume, then carries on as normal. Meanwhile the backup media server mounts this SAN snapshot and backs it up directly from the SAN. In this way you can back up SQL and Exchange environments in the middle of the day without any performance degradation (assuming, of course, that you have the IOPS headroom on the SAN). Symantec Backup Exec 12.5 and later supports this technology, but it must be purchased as an option: the Advanced Disk-based Backup Option (ADBO).

However, to back up Exchange or SQL off-host, you will need to have both the databases/mail stores and the transaction logs on the same SAN LUN. This flies in the face of the old wisdom of segregating logs onto RAID1 spindles, but it's important to realise that a modern SAN makes this perfectly viable. The EqualLogic PS4000XV in my environment, for instance, has a write latency of under 1.0 ms in RAID50. Microsoft used to recommend keeping SQL logs on disk with a write latency below 10 ms (now they say 1-5 ms).

Moving all of the transaction logs on a crowded SQL server is tricky for several reasons:

- the Transact-SQL ALTER DATABASE command requires you to know each database's logical file names. On a SQL Server 2005 server these are largely predictable, but it gets complicated when some of the DBs have migrated from SQL 2000, or when some were restored from backups of databases with different names (dev or test versions which then went into production, for example).

- the system databases are likely to be on C:, and if you want to grab all DBs in one backup job these will need moving too (TempDB is ignored by most backup software and can stay where it is).

- some guides on the Web suggest detaching and re-attaching the DBs, but this is a surefire way to end up with a total disaster, since the re-attached DBs will have new GUIDs, which will wreck SharePoint amongst others.

Backing up your databases and then restoring them with new file paths is one method, but the danger is that you would need to isolate them so that no changes occurred during that time window (which could be a long time).

Moving user databases

The best solution for these is 99% careful preparation: build a long list of T-SQL ALTER DATABASE commands to change the SQL file references, and a batch script to move the actual files to the database drive. You can also use this as an opportunity to clean up any badly named files and move any that are in the wrong place.

If you haven't already done so, I highly recommend setting the following registry values on the SQL server to guard against future inconsistencies. If they already exist, check that they're still valid:

HKEY_LOCAL_MACHINE\SOFTWARE\Microsoft\Microsoft SQL Server\MSSQL.1\MSSQLServer\DefaultData

HKEY_LOCAL_MACHINE\SOFTWARE\Microsoft\Microsoft SQL Server\MSSQL.1\MSSQLServer\DefaultLog

HKEY_LOCAL_MACHINE\SOFTWARE\Microsoft\Microsoft SQL Server\MSSQL.1\MSSQLServer\BackupDirectory

HKEY_LOCAL_MACHINE\SOFTWARE\Microsoft\Microsoft SQL Server\MSSQL.1\MSSQLServer\FullTextDefaultPath

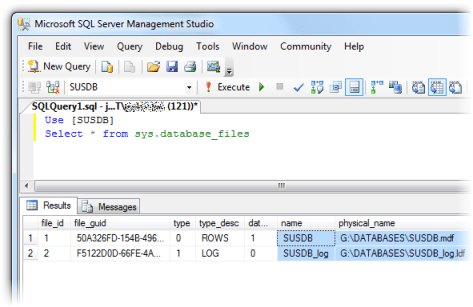

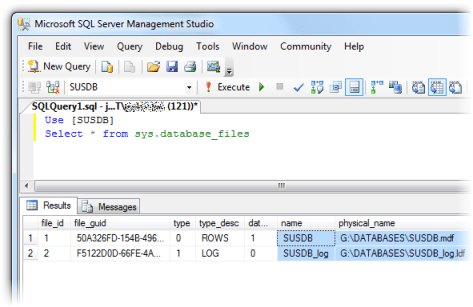
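To check the four values above from a Command Prompt rather than in Registry Editor, you can use `reg query`. The Python sketch below simply prints those commands. It assumes the default instance key `MSSQL.1` used in the paths above; a named or additional instance will have a different `MSSQL.n` key. `reg_query_commands` is a hypothetical name.

```python
from __future__ import annotations

# Assumption: default instance, registry key MSSQL.1 (as in the paths above).
INSTANCE_KEY = "MSSQL.1"
BASE = r"HKLM\SOFTWARE\Microsoft\Microsoft SQL Server"
VALUES = ["DefaultData", "DefaultLog", "BackupDirectory", "FullTextDefaultPath"]


def reg_query_commands(instance_key: str = INSTANCE_KEY) -> list[str]:
    """Return one 'reg query <key> /v <value>' command per registry value."""
    key = "\\".join([BASE, instance_key, "MSSQLServer"])
    return [f'reg query "{key}" /v {value}' for value in VALUES]


if __name__ == "__main__":
    for command in reg_query_commands():
        print(command)
```

Each printed line can be pasted into a Command Prompt on the SQL server. A missing value is reported as an error rather than silently skipped.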

For each database you need to run the following Transact-SQL:

Use [DBname]

Select * from sys.database_files

This returns all the files in the database, including full-text catalogs (if they exist), together with their logical names (the name column):

In this example the transaction log is already in the desired location, but if it were in, say, C:\TRANSACTION LOGS, we would need to write:

alter database [SUSDB] modify file (name = SUSDB_log, filename = 'G:\DATABASES\SUSDB_log.ldf')

You would then add this to your file move batch script:

move /y "C:\TRANSACTION LOGS\SUSDB_log.LDF" G:\DATABASES\SUSDB_log.ldf
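To keep a long list of these commands consistent, you could generate both halves from one table of files. The sketch below is a hypothetical Python helper; the `DbFile` and `plan_moves` names are illustrative only. You fill in the database, logical name and current physical path from the sys.database_files output. It then emits one alter database line and one matching move line for each file not already in the target folder. It keeps each file's existing name, so any renaming you want to do along the way still has to be done by hand.

```python
from __future__ import annotations

import ntpath  # Windows path rules, usable on any OS
from dataclasses import dataclass


@dataclass
class DbFile:
    database: str      # e.g. "SUSDB"
    logical_name: str  # "name" column of sys.database_files
    current_path: str  # "physical_name" column of sys.database_files


def bracket(identifier: str) -> str:
    """Quote a T-SQL identifier with [ ], doubling any closing bracket."""
    return "[" + identifier.replace("]", "]]") + "]"


def plan_moves(files: list[DbFile], target_dir: str) -> tuple[list[str], list[str]]:
    """Return (ALTER DATABASE statements, batch move lines) for files not yet in target_dir."""
    alters: list[str] = []
    moves: list[str] = []
    for f in files:
        # Keep the existing file name; only the folder changes.
        new_path = ntpath.join(target_dir, ntpath.basename(f.current_path))
        if ntpath.normcase(new_path) == ntpath.normcase(f.current_path):
            continue  # already where we want it
        sql_path = new_path.replace("'", "''")  # escape quotes for a T-SQL literal
        alters.append(
            f"alter database {bracket(f.database)} modify file "
            f"(name = {bracket(f.logical_name)}, filename = '{sql_path}')"
        )
        # Quote both paths: folders such as "TRANSACTION LOGS" contain spaces.
        moves.append(f'move /y "{f.current_path}" "{new_path}"')
    return alters, moves


if __name__ == "__main__":
    files = [
        DbFile("SUSDB", "SUSDB", r"G:\DATABASES\SUSDB.mdf"),
        DbFile("SUSDB", "SUSDB_log", r"C:\TRANSACTION LOGS\SUSDB_log.LDF"),
    ]
    alters, moves = plan_moves(files, r"G:\DATABASES")
    print("\n".join(alters))
    print("\n".join(moves))
```

Files that are already on the target drive produce no output, so the helper can be re-run safely while you refine the list.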

My method was to run a full SQL backup to commit the transaction logs (less data to move), then run all of the ALTER DATABASE commands at once (they don't take effect until the SQL Server service next starts). After that I stopped the SQL Server service, ran the file move batch script, checked for any errors, and started the SQL Server service again. Once it's up, try to expand each database in SQL Management Studio: any database with a damaged file path will not expand. Refer back to your Command Prompt window to work out what went wrong (usually a typo).
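The stop, move, check, start sequence can also be wrapped into one batch file. This is a hypothetical Python sketch; `build_batch` is an illustrative name. It assumes the default instance's service name, MSSQLSERVER, and move lines in the quoted form `move /y "source" "destination"`. After each move it checks that the destination file exists, and it pauses before restarting SQL Server so you can read any FAILED lines first. It does not cope with paths containing batch metacharacters such as `&`.

```python
from __future__ import annotations


def build_batch(moves: list[str], service: str = "MSSQLSERVER") -> str:
    """Wrap quoted 'move /y "src" "dst"' lines in a stop/check/start batch file."""
    lines = ["@echo off", f"net stop {service}"]
    for move in moves:
        lines.append(move)
        dest = move.rsplit('"', 2)[-2]  # text inside the last pair of quotes
        lines.append(f'if not exist "{dest}" echo FAILED: {dest}')
    lines.append("pause")  # read any FAILED lines before SQL Server restarts
    lines.append(f"net start {service}")
    return "\r\n".join(lines) + "\r\n"  # batch files conventionally use CRLF


if __name__ == "__main__":
    print(build_batch(['move /y "C:\\TRANSACTION LOGS\\SUSDB_log.LDF" "G:\\DATABASES\\SUSDB_log.LDF"']))
```

If you see a FAILED line at the pause, you can close the window instead of pressing a key, fix the file by hand, and then start the service yourself.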

In this way you should be able to move all of the logs with a bare minimum of downtime – several minutes in my case.

Moving system databases

Moving system databases is fairly straightforward, but it requires a little more downtime. Again, I'd probably leave TempDB where it is, both to keep its I/O (which can be high) separate from the rest and because we don't need to back it up. If you do want to move it, use ALTER DATABASE as for any non-system database; TempDB is recreated every time SQL Server starts, so there are no files to move by hand. The rest, though, are special cases.

Run the following and note the current file locations, which will probably be in C:\Program Files\Microsoft SQL Server\MSSQL.1\MSSQL\DATA:

use model

select * from sys.database_files

use msdb

select * from sys.database_files

use master

select * from sys.database_files

Now close SQL Management Studio and run the following from a Command Prompt (the parameters are case sensitive!):

net stop mssqlserver

net start mssqlserver /c /m /T3608

Open SQL Management Studio again, but read this carefully first. With these startup parameters, SQL Server will only allow a single connection. By default the GUI opens the Object Explorer window once you connect, and that counts as a connection. You need to click the Disconnect button and close the Object Explorer child window. You should then be able to open a New Query.

If you closed the Object Explorer without disconnecting you’ll get the error “Server is in single user mode. Only one administrator can connect at this time.” and you’ll need to stop and start the service again, as above, and repeat. Next:

exec sp_detach_db 'model'

exec sp_detach_db 'msdb'

Move the files to the new location (both data and log files, remember), then run the following, taking care to substitute your new file paths:

exec sp_attach_db 'model','G:\DATABASES\model.mdf','G:\DATABASES\modellog.ldf'

exec sp_attach_db 'msdb','G:\DATABASES\msdbdata.mdf','G:\DATABASES\msdblog.ldf'
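If you have several servers to do, the detach and attach statements can be generated as well. Below is a minimal Python sketch; `detach_attach_script` is a hypothetical name. The file names are the ones used in the sp_attach_db examples above and are an assumption for your server, so confirm them against the sys.database_files output you noted earlier. Each call is prefixed with `exec`, which keeps the statements valid when several are run in one batch.

```python
from __future__ import annotations

import ntpath

# Assumed file names, taken from the sp_attach_db examples above.
SYSTEM_DB_FILES = {
    "model": ("model.mdf", "modellog.ldf"),
    "msdb": ("msdbdata.mdf", "msdblog.ldf"),
}


def detach_attach_script(target_dir: str) -> tuple[list[str], list[str]]:
    """Return (detach statements, attach statements) for model and msdb."""
    detach: list[str] = []
    attach: list[str] = []
    for db, (data_file, log_file) in SYSTEM_DB_FILES.items():
        detach.append(f"exec sp_detach_db '{db}'")
        data_path = ntpath.join(target_dir, data_file)
        log_path = ntpath.join(target_dir, log_file)
        attach.append(f"exec sp_attach_db '{db}','{data_path}','{log_path}'")
    return detach, attach


if __name__ == "__main__":
    detach, attach = detach_attach_script(r"G:\DATABASES")
    print("\n".join(detach))
    print("\n".join(attach))
```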

Stop the SQL Server service. Start it again normally (no parameters) and check you can expand model and msdb in Management Studio.

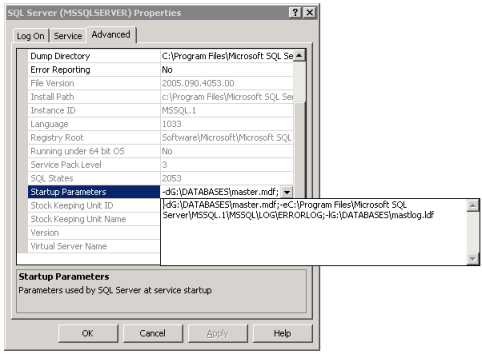

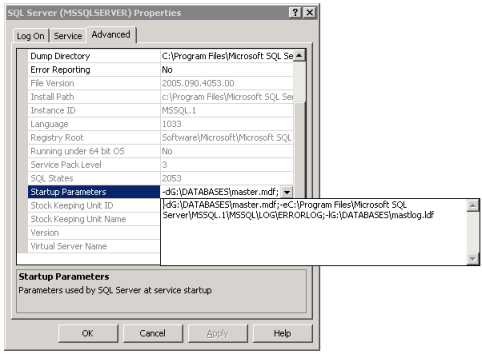

We just have the master database left to move. Stop the SQL Server service again. Move master’s log and database files to the new location. On the SQL server machine’s console, open Start Menu > Programs > Microsoft SQL Server 2005 > Configuration Tools > SQL Server Configuration Manager.

In the SQL Server 2005 Services category, select SQL Server (MSSQLSERVER) and open its Properties. Select the Advanced tab, then select Startup Parameters and pull down the dropdown next to it.

Change the value from the defaults of:

-dC:\Program Files\Microsoft SQL Server\MSSQL.1\MSSQL\DATA\master.mdf;-eC:\Program Files\Microsoft SQL Server\MSSQL.1\MSSQL\LOG\ERRORLOG;-lC:\Program Files\Microsoft SQL Server\MSSQL.1\MSSQL\DATA\mastlog.ldf

to your new file paths (don’t change the error log path by accident):

-dG:\DATABASES\master.mdf;-eC:\Program Files\Microsoft SQL Server\MSSQL.1\MSSQL\LOG\ERRORLOG;-lG:\DATABASES\mastlog.ldf

Finally, start the SQL Server Service. Done!
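Editing the startup parameter string by hand is where the error log path tends to get broken. The following hypothetical Python helper (`move_master` is an illustrative name) rewrites only the `-d` (master data file) and `-l` (master log file) entries so they point at a new folder. It keeps the file names and leaves `-e` and any other parameters untouched. The output is what you would paste into the Startup Parameters box.

```python
from __future__ import annotations

import ntpath

DEFAULT = (
    r"-dC:\Program Files\Microsoft SQL Server\MSSQL.1\MSSQL\DATA\master.mdf;"
    r"-eC:\Program Files\Microsoft SQL Server\MSSQL.1\MSSQL\LOG\ERRORLOG;"
    r"-lC:\Program Files\Microsoft SQL Server\MSSQL.1\MSSQL\DATA\mastlog.ldf"
)


def move_master(startup_params: str, target_dir: str) -> str:
    """Repoint -d and -l at target_dir, keeping file names; leave -e etc. alone."""
    rewritten = []
    for param in startup_params.split(";"):
        flag, value = param[:2], param[2:]
        if flag in ("-d", "-l"):
            value = ntpath.join(target_dir, ntpath.basename(value))
        rewritten.append(flag + value)
    return ";".join(rewritten)


if __name__ == "__main__":
    print(move_master(DEFAULT, r"G:\DATABASES"))
```

With the defaults shown earlier and G:\DATABASES as the target, this reproduces the new value given above.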

The trouble with full-text catalogs

If you rely on the EqualLogic Auto-Snapshot Manager to tell you whether your databases now support SAN snapshots, you could be in for a surprise when you back up using ADBO in Backup Exec:

V-79-57344-34086 – ADBO: Offhost backup initialization failure on: “myhostname.domain.com”.

Snapshot provider error (0xE0008526): Backup Exec could not locate a Microsoft Volume Shadow Copy Services (VSS) software or hardware snapshot provider for this job. Select a valid VSS snapshot provider for the target computer.

Check the Windows Event Viewer for details.

This is an awful error message because it doesn't really describe the problem (and you won't find anything meaningful in the Event Viewer). It looks like a registration failure of the hardware VSS provider, which is misleading. It cost me about two hours of out-of-hours work reinstalling the provider, taking the server offline and so on, to satisfy Symantec Support. However, if you run a job with the same selection list using normal AOFO (Advanced Open File Option), you get:

AOFO: Initialization failure on: “myhostname.domain.com”. Advanced Open File Option used: Microsoft Volume Shadow Copy Service (VSS).

V-79-10000-11219 – VSS Snapshot error. The volume or snapped volume may not be available or may not exist. Check the configuration of the snapshot provider, and then run the job again.

The following volumes are dependent on resource: “C:” “D:” “G:”.

Much clearer: there's still a dependency on the D: drive, the drive I migrated from. By chance I changed the backup selection list and realised that some databases backed up while others didn't. The cause turned out to be a full-text catalog.
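In the AOFO error, the stray drive is whichever listed volume you did not expect. If you are checking a lot of job logs, a small Python sketch like the following can pull the quoted drive letters out of that line and report any outside the set you expect. `unexpected_volumes` is a hypothetical name, and the sketch assumes the message format shown above, with curly or straight quotes around each drive.

```python
from __future__ import annotations

import re


def unexpected_volumes(message: str, expected: set[str]) -> list[str]:
    """Return quoted drive letters in the message that are not in `expected`."""
    # Matches “C:” or "C:" (curly or straight double quotes).
    found = re.findall(r'[“"]([A-Za-z]:)[”"]', message)
    return [v.upper() for v in found if v.upper() not in expected]


if __name__ == "__main__":
    line = "The following volumes are dependent on resource: “C:” “D:” “G:”."
    print(unexpected_volumes(line, {"C:", "G:"}))
```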

The EqualLogic ASM only checks the database and log files, not full-text catalogs, and moving these seems to be pretty difficult. Microsoft have an MSDN document describing database moves (see the section on catalogs further down the page). I followed this process to the letter, and when that didn't work I tried various permutations: stopping the SQL Server service, the SQL FullText Search service (which seems to restart automatically) and the SQL Server Agent service; copying the files; not copying the files (expecting SQL to move them); and so on. No combination worked for me. What I found was that, while it is easy enough to move the catalog path like so:

alter database [ExampleDB] modify file (name = [sysft_ExampleDB], filename = 'G:\DATABASES\FTData\ExampleDB')

there is some metadata that does not get updated, and the ADBO backup will still fail when the VSS provider checks all the file dependencies.

sys.database_files shows the correct paths, but eventually I discovered that

Select * from sys.fulltext_catalogs

still showed the old location for the catalogs. The only way I could find to get this to update was to rebuild the full-text catalog in SQL Management Studio – expand the database > Storage > Full Text Catalogs > right-click > Rebuild.

For me this was acceptable and quick, but I imagine some infrastructures might not be so tolerant of a rebuild.
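Before the next ADBO job, it may be worth checking which catalogs still point at the old drive. Below is a minimal Python sketch that does this. It assumes you have copied the name and path columns from `select name, path from sys.fulltext_catalogs` (the view has a path column in SQL Server 2005) into a list of pairs; `stale_catalogs` is a hypothetical name. Any catalog it returns would be a candidate for the rebuild described above.

```python
from __future__ import annotations

import ntpath


def stale_catalogs(rows: list[tuple[str, str | None]], target_root: str) -> list[str]:
    """Return catalog names whose path is not under target_root.

    rows: (name, path) pairs copied from sys.fulltext_catalogs.
    """
    root = ntpath.normcase(target_root.rstrip("\\")) + "\\"
    return [name for name, path in rows
            if not ntpath.normcase(path or "").startswith(root)]


if __name__ == "__main__":
    rows = [
        ("ExampleDB", r"D:\FTData\ExampleDB"),
        ("OtherDB", r"G:\DATABASES\FTData\OtherDB"),
    ]
    print(stale_catalogs(rows, r"G:\DATABASES"))
```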